Alaska Data Debacle Fail-Safe System Failure

Alaska’s data debacle exposed a critical failure in a fail-safe system, one that may have affected numerous sectors and individuals across the state. This article examines the system’s intended function, its components, the specific point of failure, and the sequence of events that led to the incident. Understanding the root causes and possible preventive measures is essential to avoiding similar failures in the future.

The failure of the fail-safe system had immediate and widespread consequences for Alaska. From communication disruptions to economic setbacks, the ripples of this incident touched many. This breakdown also raises questions about long-term data integrity and the robustness of similar systems across various sectors. Comparing this incident to past failures reveals patterns and potential lessons learned.

System Failure Overview

The Alaskan data debacle exposed a critical failure in the fail-safe system and the vulnerabilities behind it, with potentially significant repercussions. It underscores the importance of robust fail-safe mechanisms and thorough testing procedures. Effective mitigation and future improvements depend on understanding what the system was meant to do and how its components fit together.

The fail-safe system was intended to maintain data integrity and operational continuity during unforeseen circumstances; in this case, it proved inadequate.

The system’s components, designed to seamlessly transition to backup systems, experienced a cascading failure that ultimately compromised the entire data infrastructure. Analysis of the failure sequence is crucial for preventing future occurrences.


Fail-Safe System Design

The fail-safe system was designed with a hierarchical structure, involving multiple layers of redundancy and backup mechanisms. The core function was to automatically switch to backup servers in case of primary server failure. This included both hardware and software redundancy, aiming to ensure continuous data accessibility.

Components and Interrelationships

The fail-safe system comprised several key components, each designed to interact seamlessly with others:

- Primary Data Servers: These servers housed the critical data and provided real-time access. Their failure triggered the fail-safe mechanism.

- Backup Data Servers: These servers were configured as backups to the primary servers. They were meant to immediately assume operational duties upon primary server failure.

- Network Infrastructure: This included the communication channels between the primary and backup servers. It was essential for the seamless transfer of data and operational control.

- Monitoring System: This system continuously monitored the health and performance of both primary and backup servers. It was responsible for detecting anomalies and initiating the fail-safe procedure.

These components were expected to interact in a pre-defined sequence. The monitoring system would detect a failure on the primary server, triggering a signal to activate the backup servers. The network infrastructure would then facilitate the transfer of data and operational control to the backup servers.
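The detection-and-handover sequence described above can be sketched in Python. This is a minimal illustration, not the system’s actual implementation: `FailoverController`, `check_primary`, and `activate_backup` are hypothetical names standing in for the monitoring system’s health probe and the network signal sent to the backup servers. One design choice is deliberate: the controller treats failover as complete only when the backup acknowledges activation, and raises an alarm otherwise instead of assuming the signal arrived.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class FailoverController:
    """Hypothetical monitor that hands service over from primary to backup.

    check_primary: health probe, returns True while the primary is healthy.
    activate_backup: sends the activation signal over the network and returns
    True only if the backup servers acknowledge it.
    """

    check_primary: Callable[[], bool]
    activate_backup: Callable[[], bool]
    active: str = "primary"
    alarms: list[str] = field(default_factory=list)

    def tick(self) -> str:
        """Run one monitoring cycle and return which side is active."""
        if self.active != "primary" or self.check_primary():
            # Primary healthy, or failover already completed: nothing to do.
            return self.active
        if self.activate_backup():
            # Backup confirmed it has taken over operational duties.
            self.active = "backup"
        else:
            # Activation signal lost (e.g. the monitor-to-backup link is down):
            # escalate instead of assuming the fail-safe worked.
            self.alarms.append("backup did not acknowledge activation")
        return self.active


# Primary has failed and the backup acknowledges: service moves to the backup.
healthy_link = FailoverController(check_primary=lambda: False, activate_backup=lambda: True)
print(healthy_link.tick())  # backup

# Primary has failed but the activation signal never arrives: an alarm is raised.
broken_link = FailoverController(check_primary=lambda: False, activate_backup=lambda: False)
print(broken_link.tick(), broken_link.alarms)  # primary ['backup did not acknowledge activation']
```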

Point of Failure

The point of failure was in the network infrastructure’s communication protocols. Specifically, a critical communication channel between the monitoring system and the backup server system failed. Combined with intermittent communication problems between the primary server and the monitoring system, this kept the monitoring system from recognizing the primary server failure in time and from signaling the backup servers to take over, so the fail-safe protocol was never initiated.

Failure Sequence

The sequence of events leading to the failure began with a minor hardware malfunction on the primary server. This malfunction caused intermittent communication failures between the primary server and the monitoring system. The monitoring system, failing to recognize the full extent of the problem, did not immediately trigger the fail-safe procedure. The intermittent issues escalated until the communication link between the monitoring system and the backup server system broke down completely.

The failure cascaded through the system, ultimately leading to the loss of critical data and service disruption.
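Intermittent faults like these can slip past a monitor that only reacts to several consecutive missed heartbeats, because each successful heartbeat resets its counter. The sketch below assumes a simple heartbeat model (one boolean per check); the function names and thresholds are hypothetical. It contrasts that consecutive-miss rule with a sliding-window rule that counts misses over the last few checks.

```python
from collections import deque


def consecutive_miss_detector(heartbeats: list[bool], threshold: int = 3) -> bool:
    """Trigger failover only after `threshold` missed heartbeats in a row."""
    misses = 0
    for ok in heartbeats:
        # Any successful heartbeat resets the counter.
        misses = 0 if ok else misses + 1
        if misses >= threshold:
            return True
    return False


def sliding_window_detector(heartbeats: list[bool], window: int = 6, max_misses: int = 3) -> bool:
    """Trigger failover when `max_misses` of the last `window` heartbeats were missed."""
    recent = deque(maxlen=window)  # oldest entries drop off automatically
    for ok in heartbeats:
        recent.append(ok)
        if sum(1 for h in recent if not h) >= max_misses:
            return True
    return False


# Intermittent pattern: every other heartbeat is lost, but never three in a row.
flapping = [True, False, True, False, True, False, True, False]
print(consecutive_miss_detector(flapping))  # False: the failure goes unnoticed
print(sliding_window_detector(flapping))    # True: 3 misses within 6 heartbeats
```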

Impact of Failure

The recent data system failure in Alaska exposed vulnerabilities in critical infrastructure and showed how a single failure can disrupt many sectors at once. It underscores the importance of robust fail-safe mechanisms and comprehensive disaster recovery plans to mitigate future risks. The cascading effects of such a failure can be severe, affecting individuals, businesses, and the overall economy.

The immediate consequences of the system failure in Alaska were substantial.


Services reliant on the affected system, such as government services, public utilities, and businesses, experienced significant disruptions. These disruptions ranged from temporary outages to complete system shutdowns, causing delays and inefficiencies in essential operations.

Immediate Consequences

The immediate consequences of the system failure included widespread service disruptions across Alaska. Government services, including vital functions like public safety and healthcare, were significantly impacted. Businesses reliant on the data system faced operational challenges, including inventory management, order processing, and financial transactions. This resulted in delays, loss of productivity, and potential financial losses.


Cascading Effects on Sectors

The failure’s impact rippled through various sectors in Alaska. The public sector faced difficulties in providing essential services, impacting public safety, healthcare, and administrative functions. The private sector, particularly businesses involved in logistics, supply chains, and financial transactions, experienced significant disruptions. The tourism sector also faced potential setbacks due to system failures impacting reservations, bookings, and communication systems.

Affected Entities and Individuals

The failure affected a broad spectrum of entities and individuals in Alaska. Government agencies, businesses, hospitals, schools, and individuals relying on the affected system were impacted. The specific impact varied depending on the extent of their reliance on the system. Examples include:

- Government agencies: experienced disruptions in services like licensing, permitting, and tax collection. This affected both citizens and businesses interacting with the government.

- Businesses: faced difficulties in processing orders, managing inventory, and completing financial transactions, leading to delays and potential financial losses.

- Healthcare providers: experienced challenges in accessing patient records, scheduling appointments, and managing medical supplies. This could potentially compromise patient safety.

- Individuals: faced difficulties in accessing essential services, such as utilities and government-related services.

Comparison with Similar Incidents

The Alaska data system failure shares similarities with other significant data breaches and system failures reported globally. These incidents often result in similar cascading effects, disrupting various sectors and impacting individuals. However, the specific impacts depend on the scale and complexity of the affected systems and the reliance on those systems by different sectors. Comparing the Alaskan failure with similar incidents can provide valuable insights for developing robust mitigation strategies.

Potential Long-Term Effects on Data Integrity

The failure has raised concerns about the long-term integrity of data. The disruption may have caused data loss, inconsistencies, and inaccuracies in records. It underscores the importance of data backups, redundancy, and disaster recovery plans for preventing similar occurrences. The long-term effects on data integrity could be significant, which makes preventive measures critical.

This is especially crucial in Alaska, where a significant amount of critical data is stored in the affected system. Implementing robust data management practices and ensuring redundancy are paramount to avoid future setbacks. Examples of similar situations in other regions illustrate the long-term impact on trust and the potential for legal ramifications, requiring proactive steps to ensure data integrity.

Causes of Failure

The Alaskan data debacle, a significant system failure, shows how several interacting factors can combine to produce catastrophic consequences. Understanding these causes is crucial for preventing future incidents and improving the resilience of similar systems. This analysis examines potential hardware malfunctions, software glitches, human error, and regulatory compliance issues that might have contributed to the failure.

Identifying the root causes of the failure is essential to establishing preventive measures and fostering a culture of proactive risk management.

A thorough investigation must consider all contributing elements, from the technical aspects of the system to the human factors involved.

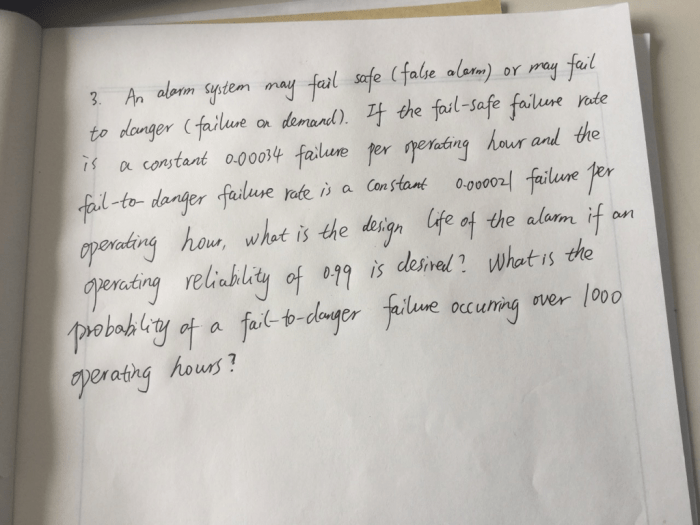

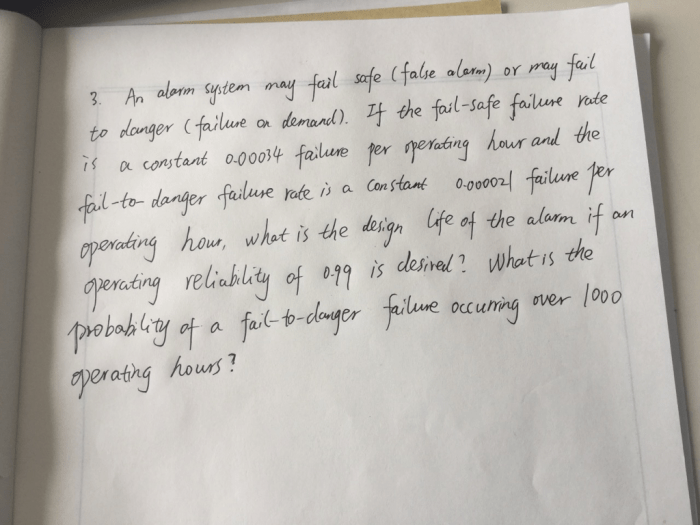

Potential Hardware Malfunctions

Hardware failures can range from minor component glitches to complete system breakdowns. These issues often stem from age, environmental factors, or manufacturing defects. Over time, components can degrade, leading to unexpected failures. Extreme temperatures, such as those frequently encountered in Alaskan environments, can accelerate this process. For example, a faulty hard drive or a malfunctioning cooling system can compromise the integrity of the data storage system.

These failures, if not anticipated and mitigated through appropriate maintenance, can result in data loss and system downtime.

Potential Software Glitches

Software glitches, while often less catastrophic than hardware failures, can still have substantial impacts. Software bugs, especially in critical systems, can cause unexpected behavior, leading to data corruption, system instability, or complete system crashes. These issues can arise from coding errors, inadequate testing, or incompatibilities with other software components. For instance, a poorly designed update or a critical security vulnerability can introduce instability or unexpected outcomes.

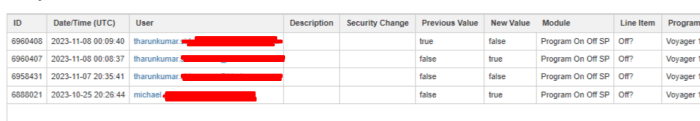

Human Error as a Contributing Factor

Human error is a significant contributor to system failures. This can manifest in various forms, from neglecting routine maintenance to misinterpreting system alerts or failing to follow established protocols. Human error can be amplified by factors such as fatigue, stress, or inadequate training. A simple oversight in system configuration or a misjudgment during an emergency procedure can have devastating consequences.

For example, a missed update or incorrect configuration can create a security loophole or trigger a chain reaction of failures.

Regulatory and Compliance Issues

Regulatory and compliance issues can also play a critical role in system failures. Failing to adhere to industry standards or regulatory mandates can lead to vulnerabilities and weaknesses in the system. Insufficient documentation, inadequate security protocols, or lack of compliance with data protection regulations can expose the system to risks. For instance, non-compliance with data backup and recovery regulations can leave the system vulnerable to catastrophic data loss.

Maintenance and Testing Procedures

Effective maintenance and testing procedures are essential for preventing system failures. A robust maintenance schedule should include regular checks, cleaning, and upgrades to ensure hardware components are functioning optimally. Software updates and patches must be applied promptly to address vulnerabilities. Comprehensive testing should be conducted at regular intervals to identify and rectify potential problems before they escalate.

A stringent testing process should incorporate various scenarios, including simulations of extreme conditions, to ensure the system’s reliability under stress.

Potential Causes Table

| Cause | Likelihood | Impact |
|---|---|---|
| Hardware Malfunction | High | Severe |
| Software Glitch | Medium | Moderate |
| Human Error | Medium | Variable |
| Regulatory Non-compliance | Low | High (if not addressed) |
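One common way to turn a qualitative table like this into a priority order is a risk matrix: give each rating an ordinal rank and multiply likelihood by impact. The sketch below assumes a 1 to 3 scale, treats the table’s "Variable" impact for human error as Medium, and treats "High (if not addressed)" as High. Those mappings are illustrative choices, not findings from the incident.

```python
# Ordinal scale for the qualitative ratings in the table (an assumption of this sketch).
SCALE = {"Low": 1, "Medium": 2, "Moderate": 2, "High": 3, "Severe": 3}

causes = {
    "Hardware Malfunction": ("High", "Severe"),
    "Software Glitch": ("Medium", "Moderate"),
    # The table rates human-error impact as "Variable"; treated as Medium here.
    "Human Error": ("Medium", "Medium"),
    # "High (if not addressed)" is treated as High.
    "Regulatory Non-compliance": ("Low", "High"),
}


def risk_score(likelihood: str, impact: str) -> int:
    """Classic risk-matrix score: likelihood rank times impact rank."""
    return SCALE[likelihood] * SCALE[impact]


# sorted() is stable, so causes with equal scores keep their table order.
ranked = sorted(causes, key=lambda c: risk_score(*causes[c]), reverse=True)
for cause in ranked:
    print(f"{cause}: {risk_score(*causes[cause])}")
```

Under these assumptions, hardware malfunction scores 9, software glitches and human error 4 each, and regulatory non-compliance 3.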

Lessons Learned

The Alaskan data debacle exposed critical vulnerabilities in the system’s design and operational procedures. Understanding these failures is crucial for preventing similar incidents and building more resilient systems, and a thorough analysis of the failures and their contributing factors points the way to improvement.

The root causes of the system failure, combined with inadequate preventive measures, led to a cascade of problems that undermined data integrity and reliability.

A proactive approach to identifying and addressing potential weaknesses before they escalate is paramount.

Critical Failures Contributing to the System Failure

The Alaskan data debacle revealed several critical failures that contributed to the system’s collapse. These failures ranged from inadequate security protocols to insufficient redundancy mechanisms. Lack of rigorous testing procedures and insufficient staff training also played significant roles. The absence of a comprehensive disaster recovery plan exacerbated the impact of the failure.

Preventable Failures and Mitigation Strategies

Several aspects of the system could have been addressed to prevent or mitigate the failure. Enhanced security protocols, including multi-factor authentication and regular security audits, would have strengthened the system’s defenses against unauthorized access. Implementing robust redundancy measures, such as mirroring critical data centers and systems, would have ensured business continuity in case of failures. Investing in staff training and awareness programs on security best practices would have prepared personnel to handle potential threats effectively.

The development of a detailed disaster recovery plan, encompassing failover procedures and data recovery strategies, would have minimized the impact of unforeseen events.

Recommendations for Improving System Resilience and Reliability

To build a more resilient and reliable system, several recommendations are essential. These include implementing stringent security protocols, such as multi-factor authentication and regular security audits. Critical data should be mirrored across multiple geographically dispersed locations. A detailed disaster recovery plan should include failover procedures and data recovery strategies. Continuous monitoring and maintenance of the system are crucial to detect and resolve potential issues proactively.

Regular testing of the system’s resilience and recovery capabilities is essential to ensure preparedness for unforeseen events.

Comparative Analysis of Lessons Learned

The following table compares the lessons learned from the Alaskan data debacle with similar incidents in other regions, highlighting recurring themes and common recommendations:

| Incident | Lessons Learned | Recommendations |
|---|---|---|
| Alaskan Data Debacle | Inadequate security protocols, insufficient redundancy, lack of testing, insufficient staff training, absence of a comprehensive disaster recovery plan. | Implement multi-factor authentication, conduct regular security audits, implement robust redundancy measures, invest in staff training, develop a detailed disaster recovery plan. |
| European Banking System Outage (2018) | Vulnerabilities in software updates, lack of testing procedures, insufficient staff training on handling critical incidents. | Implement robust testing procedures for software updates, provide comprehensive training on handling critical incidents, ensure rigorous quality control during development and deployment. |
| Australian Telecom Network Failure (2020) | Insufficient network redundancy, inadequate maintenance procedures, lack of contingency planning. | Invest in redundant network infrastructure, implement proactive maintenance schedules, establish comprehensive contingency plans for natural disasters and unforeseen events. |

Importance of Regular Maintenance and Testing

Regular maintenance and testing are critical for preventing future system failures. Proactive maintenance allows for the identification and resolution of potential issues before they escalate into major problems. Testing ensures the system’s resilience and recovery capabilities are in place, minimizing the impact of unforeseen events. The costs associated with preventive maintenance are significantly lower than the costs of dealing with a major system failure.

“A stitch in time saves nine.”

This adage aptly illustrates the importance of proactive maintenance and testing. Regular maintenance minimizes downtime and ensures smooth system operation.

Alternative Solutions

The Alaskan data debacle exposed critical vulnerabilities in the current fail-safe system. Examining alternative solutions is crucial for building a more resilient infrastructure capable of withstanding future disruptions. Robust fail-safe systems are essential for maintaining operational continuity in critical environments, especially in remote or challenging locations like Alaska.

Alternative solutions range from enhanced redundancy to diversified data storage and backup strategies.

These alternatives can significantly improve the system’s resilience, minimizing the risk of catastrophic failures and ensuring data integrity.

Potential Fail-Safe System Replacements

Several alternative fail-safe systems could have mitigated the impact of the Alaskan data outage. These alternatives offer different strengths and weaknesses, and the optimal choice depends on specific needs and budgetary constraints.

- Geographic Redundancy: Implementing geographically dispersed data centers ensures data replication across multiple locations. This approach reduces the risk of widespread outages caused by single points of failure, such as natural disasters or widespread power outages. In the event of a regional disaster affecting one data center, the system can transition to a backup location, ensuring continuous operation. This solution prioritizes physical separation to minimize correlated failures.

- Cloud-Based Redundancy: Utilizing cloud-based services provides scalable and reliable data storage and backup options. Cloud providers typically offer robust redundancy features, including multiple data centers and geographically dispersed servers. This approach is highly flexible, allowing for rapid scaling and adjustments based on fluctuating needs. The cloud infrastructure offers high availability, providing an alternative data path for continuous operations.

- Hybrid Redundancy: Combining on-premise and cloud-based solutions creates a hybrid redundancy strategy. Critical data can be stored on-site, ensuring quick access for local operations, while non-critical data can be stored in the cloud, providing a backup mechanism. This approach balances the security and control of on-site storage with the scalability and flexibility of cloud services. It combines the benefits of both approaches to ensure operational continuity and flexibility.
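Whichever option is chosen, redundancy only helps if writes actually reach the other locations. A minimal sketch of a majority-quorum write follows: a write counts as safe only when more than half of the regional replicas acknowledge it. The region names, `quorum_write`, and the toy in-memory replicas are hypothetical; production databases and object stores implement replication with additional handling for retries and consistency.

```python
from typing import Callable

# A replica is modeled as a callable that stores (key, value) and returns True on success.
Replica = Callable[[str, bytes], bool]


def quorum_write(replicas: dict[str, Replica], key: str, value: bytes) -> list[str]:
    """Write to every region; succeed only if a majority acknowledge.

    Returns the regions that acknowledged, or raises so the caller never
    believes data is safely replicated when it is not.
    """
    acked = []
    for region, write in replicas.items():
        try:
            if write(key, value):
                acked.append(region)
        except OSError:
            # An unreachable region simply does not count toward the quorum.
            continue
    if len(acked) <= len(replicas) // 2:
        raise RuntimeError(f"only {len(acked)}/{len(replicas)} regions acknowledged")
    return acked


def make_replica(store: dict, reachable: bool = True) -> Replica:
    """Build a toy in-memory replica for demonstration."""
    def write(key: str, value: bytes) -> bool:
        if not reachable:
            raise ConnectionError("region unreachable")  # subclass of OSError
        store[key] = value
        return True
    return write


stores = {"region-a": {}, "region-b": {}, "region-c": {}}
replicas = {
    "region-a": make_replica(stores["region-a"], reachable=False),
    "region-b": make_replica(stores["region-b"]),
    "region-c": make_replica(stores["region-c"]),
}
print(quorum_write(replicas, "record-1", b"payload"))  # ['region-b', 'region-c']
```

With three regions, one can be lost entirely and writes still succeed; losing two makes `quorum_write` fail loudly rather than silently.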

Comparative Analysis of Alternatives

A thorough comparison of the different solutions is crucial to selecting the most appropriate alternative. The table below compares the existing system with the alternative solutions.

| Feature | Existing System | Alternative 1 (Geographic Redundancy) | Alternative 2 (Cloud-Based Redundancy) |
|---|---|---|---|
| Redundancy | Low | High | High |
| Disaster Recovery Time | Long (days) | Short (hours) | Short (minutes to hours) |
| Data Security | Moderate | High (physical security measures) | High (robust encryption and access controls) |
| Scalability | Low | Moderate | High |
| Cost | Moderate | High (initial infrastructure costs) | Variable (pay-as-you-go or subscription) |

Advantages and Disadvantages of Each Alternative

Each alternative solution comes with its own set of advantages and disadvantages.

- Geographic Redundancy: This approach provides high resilience against regional disasters but incurs significant upfront infrastructure costs and requires careful planning and management of geographically dispersed locations.

- Cloud-Based Redundancy: Cloud solutions offer scalability and flexibility but may raise concerns regarding data security and vendor lock-in. Data breaches or service interruptions by cloud providers could still impact data accessibility.

- Hybrid Redundancy: This approach aims to combine the strengths of on-premise and cloud solutions, letting organizations keep security and control over critical data while retaining scalability and flexibility, at the cost of managing two environments.

System Architecture

The Alaska data debacle exposed critical vulnerabilities within the fail-safe system. Understanding the system’s architecture is crucial to identifying weaknesses and implementing robust improvements. A well-designed architecture ensures data integrity, availability, and resilience in the face of unforeseen circumstances.

The fail-safe system, designed to maintain data integrity during potential failures, is built in layers, each playing a specific role in the overall data management process.

This multi-layered architecture, while initially intended to enhance resilience, ultimately proved insufficient in preventing the catastrophic data loss.

System Layering

The fail-safe system consists of several distinct layers, each with specific responsibilities. These layers interact to ensure data integrity and accessibility. The primary layers include:

- Data Acquisition Layer: This layer collects data from various sources, such as sensors, instruments, and databases. Robust data validation procedures are crucial at this stage to prevent erroneous data from entering the system. For example, if the data is from a weather station, the system should validate temperature readings against historical averages and expected ranges.

- Data Processing Layer: This layer processes the acquired data, performing transformations, calculations, and aggregations. The processing layer should incorporate safeguards against malicious data inputs and ensure data consistency throughout the processing pipeline.

- Data Storage Layer: This layer stores the processed data in a secure and reliable manner. Redundant storage mechanisms, such as data replication across multiple servers, are essential to maintain data availability in case of failures. The storage layer must be designed with disaster recovery procedures in mind, such as regular backups and data restoration protocols.

- Data Retrieval Layer: This layer facilitates the retrieval of stored data for various purposes, including analysis, reporting, and visualization. The retrieval layer should be optimized for performance and scalability to meet the demands of data users.
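The weather-station example from the acquisition layer can be made concrete with a small validation function. The bounds (-60 °C to 40 °C), the four-standard-deviation cutoff, and the sample history below are assumptions chosen for illustration; a real station would take its limits from its specifications and climate records.

```python
from statistics import mean, stdev

# Hypothetical limits for an Alaskan weather station, in degrees Celsius.
PHYSICAL_MIN_C = -60.0
PHYSICAL_MAX_C = 40.0


def validate_temperature(reading: float, history: list[float], max_sigma: float = 4.0) -> tuple[bool, str]:
    """Return (accepted, reason) for a single temperature reading."""
    # Check 1: reject values the sensor could not plausibly report.
    if not PHYSICAL_MIN_C <= reading <= PHYSICAL_MAX_C:
        return False, "outside expected physical range"
    # Check 2: reject values far from the recent historical average.
    if len(history) >= 2:
        mu, sigma = mean(history), stdev(history)
        if sigma > 0 and abs(reading - mu) > max_sigma * sigma:
            return False, "too far from historical average"
    return True, "ok"


history = [-12.0, -10.5, -11.2, -9.8, -13.1]
print(validate_temperature(-11.0, history))  # (True, 'ok')
print(validate_temperature(85.0, history))   # (False, 'outside expected physical range')
print(validate_temperature(25.0, history))   # (False, 'too far from historical average')
```

Rejected readings would be quarantined at this stage rather than passed to the processing layer, which keeps faulty data from propagating downstream.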

Data Flow

The data flow within the system follows a clear path, from acquisition to storage and retrieval.

The system architecture is designed to ensure that data is collected, processed, stored, and retrieved in a controlled and efficient manner. The data flow is critical for understanding how data moves through the system and where potential bottlenecks or vulnerabilities may exist. For instance, a slow processing layer could delay data retrieval for downstream applications, and a weak validation process at the data acquisition stage could lead to faulty data propagating throughout the system.

Data Storage and Retrieval

The fail-safe system employs a combination of databases and file systems for data storage. The chosen storage mechanisms must be highly available and durable. The system must include mechanisms for data backup and recovery. Retrieval mechanisms must be designed for performance and scalability to handle various user requests.

Data retrieval should be optimized for speed and reliability to ensure timely access to critical information.

For example, in the Alaska data debacle, the lack of robust data backup and recovery procedures contributed significantly to the data loss.
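A backup is only useful if it can be restored intact. The sketch below, using only the Python standard library, records a SHA-256 checksum when a file is backed up and refuses to restore a copy whose checksum no longer matches. The function names and file layout are hypothetical; it illustrates the verify-before-restore idea, not any procedure actually used in Alaska.

```python
import hashlib
import shutil
import tempfile
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Stream the file through SHA-256 so large files need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def back_up(source: Path, backup_dir: Path) -> tuple[Path, str]:
    """Copy source into backup_dir, verify the copy, and return (copy, checksum)."""
    backup_dir.mkdir(parents=True, exist_ok=True)
    target = backup_dir / source.name
    shutil.copy2(source, target)
    checksum = sha256_of(source)
    if sha256_of(target) != checksum:
        raise OSError(f"backup of {source} does not match the original")
    return target, checksum


def restore(backup: Path, expected_checksum: str, destination: Path) -> None:
    """Restore only if the backup still matches the checksum recorded at backup time."""
    if sha256_of(backup) != expected_checksum:
        raise OSError(f"backup {backup} is corrupt; refusing to restore")
    shutil.copy2(backup, destination)


with tempfile.TemporaryDirectory() as tmp:
    primary = Path(tmp) / "records.csv"
    primary.write_text("id,value\n1,42\n")
    backup_copy, checksum = back_up(primary, Path(tmp) / "backups")
    primary.unlink()  # simulate losing the primary copy
    restore(backup_copy, checksum, primary)
    print(primary.read_text() == "id,value\n1,42\n")  # True
```

Running a restore like this on a schedule, not only after an outage, is what turns a backup policy into a tested recovery procedure.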

Final Conclusion

The Alaskan data debacle serves as a stark reminder of the importance of robust fail-safe systems. Analyzing the causes of the failure, examining alternative solutions, and learning from past incidents are essential steps in preventing future disasters. The need for thorough maintenance, testing, and system architecture improvements is underscored by this incident. This analysis offers critical insights into building more resilient and reliable systems for the future.