Beyond Prompting: Using Agent Skills in Data Science

The Architecture of AI Skills in Technical Environments

A "skill" is a reusable package of instructions, scripts, templates, and examples that guides an AI through a specific, recurring workflow. Its technical foundation is the SKILL.md file, which serves as the primary interface between the user and the AI and contains essential metadata such as the skill’s name and purpose, along with detailed operational instructions. By bundling these instructions with supporting scripts or data examples, users can hold the AI to the same standards and accuracy requirements across sessions.
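To make the structure concrete, here is a minimal sketch of reading such a file. It assumes the metadata sits in a block of simple `key: value` lines delimited by `---` at the top of SKILL.md, with the instructions below it; the field names `name` and `description`, the `Skill` class, and `parse_skill_md` are illustrative choices rather than a definitive specification of the format.

```python
from dataclasses import dataclass


@dataclass
class Skill:
    name: str
    description: str
    instructions: str


def parse_skill_md(text: str) -> Skill:
    """Split a SKILL.md-style document into metadata and instructions.

    Assumption: the file opens with `key: value` lines between two `---` lines.
    """
    lines = text.strip().splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("expected a metadata block opening with '---'")
    try:
        # Index of the line that closes the metadata block.
        end = next(i for i, line in enumerate(lines[1:], start=1)
                   if line.strip() == "---")
    except StopIteration:
        raise ValueError("metadata block is never closed") from None

    meta = {}
    for line in lines[1:end]:
        if ":" in line:
            key, value = line.split(":", 1)
            meta[key.strip()] = value.strip()

    body = "\n".join(lines[end + 1:]).strip()
    return Skill(meta.get("name", ""), meta.get("description", ""), body)


# Hypothetical file content, written for this example only.
EXAMPLE_SKILL_MD = """\
---
name: storytelling-viz
description: Turn a cleaned dataset into an insight-driven chart with a short narrative.
---
1. Inspect the dataset and pick the single strongest insight.
2. Write a headline that states the insight.
3. Cite the data source and list caveats.
"""

skill = parse_skill_md(EXAMPLE_SKILL_MD)
print(skill.name)
print(skill.instructions)
```

The parser deliberately avoids third-party dependencies; a production loader would more likely use a full YAML parser for the metadata block.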

The primary advantage of utilizing skills over traditional "long-context" prompting lies in efficiency and resource management. In tools like Claude Code or Codex, the main context window is a finite resource. Loading an entire set of complex instructions for every possible task can lead to "context bloat," reducing the AI’s performance and increasing the likelihood of hallucinations. By using skills, the AI only needs to process lightweight metadata initially. The full weight of the instructions and resources is only loaded when the AI identifies that the specific skill is relevant to the user’s current task. This "just-in-time" delivery of instructions mirrors modular programming principles, where libraries are only imported when necessary.
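The "just-in-time" idea can be sketched in a few lines. In this sketch only each skill's name and description stay in memory, and the full instructions are fetched the first time a skill is selected. The `LazySkill` class and the keyword-overlap matcher `select_skill` are assumptions made for illustration; tools such as Claude Code rely on the model itself to judge relevance, not on word counting.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class LazySkill:
    name: str
    description: str                 # lightweight metadata, always available
    loader: Callable[[], str]        # fetches the full instructions on demand
    _body: Optional[str] = field(default=None, repr=False)

    @property
    def loaded(self) -> bool:
        return self._body is not None

    def instructions(self) -> str:
        # Load the heavy content only once, the first time it is needed.
        if self._body is None:
            self._body = self.loader()
        return self._body


def select_skill(task: str, skills: List[LazySkill]) -> Optional[LazySkill]:
    """Pick the skill whose description shares the most words with the task."""
    task_words = set(task.lower().split())
    best, best_score = None, 0
    for skill in skills:
        score = len(task_words & set(skill.description.lower().split()))
        if score > best_score:
            best, best_score = skill, score
    return best


skills = [
    LazySkill("storytelling-viz",
              "create a chart and narrative from a dataset",
              lambda: "FULL VISUALIZATION INSTRUCTIONS (placeholder)"),
    LazySkill("publishing",
              "publish a finished post to the blog",
              lambda: "FULL PUBLISHING INSTRUCTIONS (placeholder)"),
]

chosen = select_skill("create a chart from this dataset", skills)
if chosen is not None:
    chosen.instructions()            # only now is the full body loaded

for s in skills:
    print(s.name, "loaded" if s.loaded else "metadata only")
```

After the run, only the selected skill has its instructions in memory; the other contributes nothing beyond its one-line description.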

Chronology of Automation: From Manual Visualization to AI Orchestration

The evolution of these workflows is best illustrated by the transition of data visualization practices over the last decade. A prominent case study involves a continuous data visualization project that began in 2018. Originally, this workflow was entirely manual, centered on tools like Tableau to practice Business Intelligence (BI) techniques.

In 2024 and 2025, AI was integrated into the workflow to handle the repetitive aspects of data processing and storytelling. The manual routine, which took approximately one hour per week, involved four distinct stages: searching for a dataset, cleaning the data, generating a visualization, and publishing the results with an accompanying narrative.

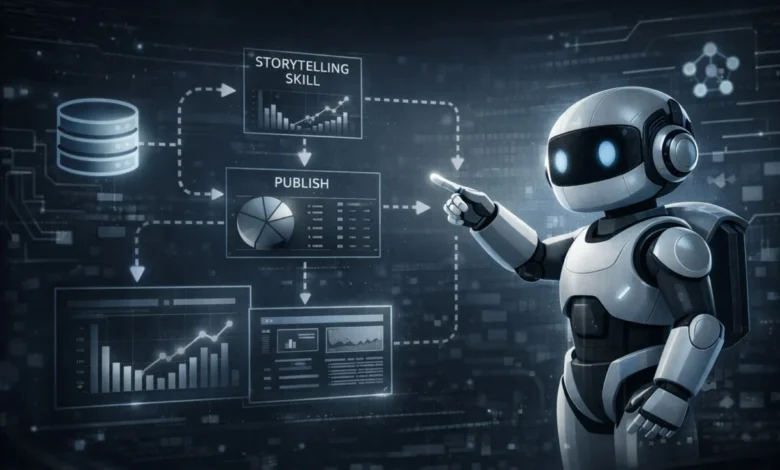

In the current technological landscape, this routine has shifted toward an "AI-first" workflow. While dataset selection remains a human-driven, creative task, the subsequent steps have been modularized into two distinct AI skills: a "storytelling-viz" skill for insight generation and chart creation, and a "publishing" skill for blog integration. This transition has cut the end-to-end processing time from 60 minutes to less than 10 minutes, a reduction of more than 83% in time spent, or at least a sixfold speedup.
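The figures above can be checked with a few lines of arithmetic. The sketch below takes 10 minutes as the upper bound quoted in the text and separates two measures that are easy to conflate: the share of time saved and the speedup factor. The function names are illustrative.

```python
def time_reduction(before_min: float, after_min: float) -> float:
    """Fraction of the original time that was saved."""
    return (before_min - after_min) / before_min


def speedup(before_min: float, after_min: float) -> float:
    """How many times faster the new workflow is."""
    return before_min / after_min


before, after = 60, 10   # minutes; 10 is the upper bound quoted in the text
print(f"time saved: {time_reduction(before, after):.1%}")   # time saved: 83.3%
print(f"speedup:    {speedup(before, after):.1f}x")         # speedup:    6.0x
```

The 83% figure therefore describes the time saved, while the same change expressed as throughput is a sixfold improvement.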

Technical Synergies: Combining Skills with Model Context Protocol (MCP)

A critical component of this modern workflow is the Model Context Protocol (MCP). While a "skill" provides the logic and instructions for a task, MCP acts as the bridge between the LLM and external data sources or tools. In a recent demonstration, the combination of a BigQuery MCP and a visualization skill allowed an AI to query live data from a Google BigQuery database and immediately apply the "storytelling-viz" logic to the results.

This synergy solves a common problem in AI-driven data science: the "last mile" of implementation. Without MCP, the AI might generate the correct code but lack the ability to execute it against a live database. Without the "skill," the AI might access the data but fail to apply the specific design principles or storytelling nuances required by the user. When used together, MCP facilitates smooth external access, while the skill ensures the process follows a rigorous, pre-defined methodology.
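The division of labor described above can be sketched as follows. This is not the MCP API: `QueryTool` is a hypothetical interface standing in for a tool that an MCP server might expose, `FakeWarehouse` replaces the live database so the example runs offline, and the rows are made-up sample values. The point is only that data access and presentation rules live in separate, swappable pieces.

```python
from typing import Dict, List, Protocol

Row = Dict[str, float]


class QueryTool(Protocol):
    """Stand-in for a tool an MCP server would expose (hypothetical interface)."""

    def run(self, sql: str) -> List[Row]: ...


class FakeWarehouse:
    """In-memory substitute for a live database, used only for this sketch."""

    def __init__(self, rows: List[Row]) -> None:
        self._rows = rows

    def run(self, sql: str) -> List[Row]:
        return list(self._rows)  # ignores the SQL; a real tool would execute it


def storytelling_summary(rows: List[Row], x: str, y: str, source: str) -> str:
    """Apply skill-style rules: insight-first headline plus a source citation."""
    top = max(rows, key=lambda r: r[y])
    headline = f"{y} peaks at {top[y]:g} when {x} is {top[x]:g}"
    return f"{headline}\nSource: {source}"


def answer(tool: QueryTool, sql: str, x: str, y: str, source: str) -> str:
    rows = tool.run(sql)                              # access: the MCP side
    return storytelling_summary(rows, x, y, source)   # method: the skill side


# Made-up sample rows, for illustration only.
warehouse = FakeWarehouse([
    {"hours": 50, "calories": 20000},
    {"hours": 120, "calories": 52000},
])
result = answer(warehouse, "SELECT hours, calories FROM activity",
                "hours", "calories", "sample rows (illustrative)")
print(result)
```

Swapping `FakeWarehouse` for a real connector changes where the data comes from without touching the presentation rules, and vice versa.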

The Iterative Development of Technical Skills

Building a robust AI skill is rarely a single-step process. Technical experts suggest a three-phase approach to skill development so that the output meets professional standards.

Phase 1: Strategic Planning and Bootstrapping

The initial phase involves defining the "tech stack" and the criteria for "good" output. Modern AI tools, such as Claude Code, can actually assist in this creation process. A user can describe a workflow, and the AI will "bootstrap" the initial SKILL.md file, essentially using a skill to create another skill. However, this first version typically only covers about 10% of the desired complexity, often producing generic results that lack professional polish.

Phase 2: Integration of Domain Knowledge and External Research

To bridge the gap from a generic output to a professional-grade visualization, the skill must be enriched with specific domain knowledge. This includes feeding the AI personal style guides, preferred color palettes, and historical examples of successful work. Furthermore, the skill is improved by incorporating external research. By asking the AI to analyze visualization strategies from established design sources or existing public skill repositories (such as those found on skills.sh), the developer can bake industry best practices directly into the skill’s DNA.

Phase 3: Empirical Testing and Refinement

The final phase of development is rigorous testing. In the case of the "storytelling-viz" skill, testing across more than 15 diverse datasets revealed specific areas for improvement. These iterations led to concrete instruction updates, such as:

- Implementing "insight-driven" headlines rather than generic titles.

- Enforcing specific font families (e.g., "Geist Mono") for a consistent brand identity.

- Automatically including data source citations and caveats to ensure transparency.

- Recommending specific chart types (e.g., interactive scatter plots vs. static bar charts) based on the data’s dimensionality.
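Rules like the four above are written as natural-language instructions in the skill, but expressing them as code shows how specific they can be made. The sketch below is a hypothetical encoding: `ChartSpec`, `recommend_chart`, `build_spec`, and the threshold of two numeric columns are assumptions for illustration, and only the font name comes from the text.

```python
from dataclasses import dataclass
from typing import List

FONT_FAMILY = "Geist Mono"  # font named in the article


@dataclass
class ChartSpec:
    chart_type: str
    title: str
    font_family: str
    footnotes: List[str]


def recommend_chart(numeric_cols: int) -> str:
    """Hypothetical rule: two or more measures -> scatter, otherwise bar."""
    if numeric_cols >= 2:
        return "interactive scatter plot"
    return "static bar chart"


def build_spec(insight: str, numeric_cols: int,
               source: str, caveats: List[str]) -> ChartSpec:
    # Rule 1: the title must state an insight, so an empty one is rejected.
    if not insight.strip():
        raise ValueError("an insight-driven headline is required")
    # Rule 3: always attach the data source and any caveats.
    footnotes = [f"Source: {source}"] + [f"Caveat: {c}" for c in caveats]
    return ChartSpec(
        chart_type=recommend_chart(numeric_cols),   # rule 4
        title=insight.strip(),
        font_family=FONT_FAMILY,                    # rule 2
        footnotes=footnotes,
    )


# Illustrative inputs; the headline and source are placeholders.
spec = build_spec(
    insight="Longer exercise time goes with higher caloric burn",
    numeric_cols=2,
    source="example dataset (placeholder)",
    caveats=["self-reported values"],
)
print(spec.chart_type)
print(spec.footnotes)
```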

Industry Implications and the Shift in Data Science Roles

The move toward modular AI skills reflects a broader trend in the technology sector: the shift from "code generation" to "agentic orchestration." Industry analysts suggest that the role of the data scientist is evolving from a primary coder to a curator of AI instructions.

Organizations are beginning to see the value in "Skill Libraries" as a form of institutional knowledge. When a senior data scientist develops a highly effective way to analyze churn or visualize supply chain disruptions, that expertise can be codified into a skill. This allows junior analysts to leverage the same high-level logic and design standards, ensuring consistency across the enterprise.

Furthermore, the standardization of skills via the SKILL.md format suggests a future where AI-assisted workflows are as portable and version-controlled as software code. This "Skills-as-Code" movement aligns with DevOps and DataOps principles, allowing teams to track changes in AI behavior over time and collaborate on the "best" way to perform specific analytical tasks.
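Because a skill is plain text, changes to it can be reviewed like any other code change. The sketch below uses Python's standard `difflib` module to compare two versions of a skill's instructions. Both versions are invented for the example, and in practice a version control system such as Git would produce this kind of diff.

```python
import difflib

# Two invented versions of a skill's instructions.
SKILL_V1 = """\
Use a descriptive chart title.
Cite the data source.
"""

SKILL_V2 = """\
Write an insight-driven headline instead of a generic title.
Cite the data source.
List caveats below the chart.
"""


def skill_diff(old: str, new: str, name: str = "SKILL.md") -> str:
    """Return a unified diff between two versions of a skill's instructions."""
    diff = difflib.unified_diff(
        old.splitlines(keepends=True),
        new.splitlines(keepends=True),
        fromfile=f"{name} (v1)",
        tofile=f"{name} (v2)",
    )
    return "".join(diff)


changes = skill_diff(SKILL_V1, SKILL_V2)
print(changes)
```

Lines prefixed with `-` were removed, lines prefixed with `+` were added, and unprefixed lines are unchanged context, which makes a change in AI behavior traceable to a specific edit in the instructions.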

Analysis of the Human Element in an Automated Era

Despite the high level of automation achieved through Skills and MCP, the human element remains the most critical factor in the data science lifecycle. The automation of the "how" (the coding, the formatting, the basic cleaning) allows the human practitioner to focus more intensely on the "why" and the "what."

The weekly visualization project illustrates this balance. Although 80% of the process is now handled by AI, the practitioner keeps up the habit, no longer to practice a tool but to sharpen data intuition and storytelling. The AI acts as a force multiplier, handling the technical overhead while the human focuses on discovery and interpretation.

In a professional context, this means that while AI can surface an insight, such as the correlation between annual exercise time and caloric burn, the human remains responsible for validating that insight and for the ethical considerations of how the data is presented. The future of data science is not the replacement of the scientist by the AI, but the elevation of the scientist to a director of modular AI agents.

Conclusion and Future Outlook

The emergence of AI Skills and the Model Context Protocol marks a turning point in technical productivity. By transforming repetitive tasks into reusable, refined packages, data scientists can ensure that their AI collaborators operate with the precision of a seasoned professional. As repositories like skills.sh continue to grow and the protocols for AI-tool interaction become more standardized, the barrier to entry for complex, automated data workflows will continue to fall. For the individual practitioner and the enterprise alike, mastery of these modular frameworks is no longer an optional efficiency but a foundational requirement for the AI-driven era of data science.