The Swedbank Outage: A Wake-Up Call for Change Management in Financial Services

The financial services industry is re-examining its change management processes following a significant outage at Swedbank in April 2022. The Swedish Financial Supervisory Authority (Finansinspektionen) recently fined the bank SEK 850 million (approximately USD 85 million), a decision that highlights systemic failures in how IT changes were managed. The incident, which temporarily affected nearly a million customers by displaying incorrect account balances and blocking essential payments, is a stark reminder that traditional change management practices may no longer be sufficient in today’s rapidly evolving technological landscape.

The Incident: A Cascade of Errors

The Swedbank outage, which began on April 12, 2022, left close to a million customers uncertain about their finances. For a period, account balances were inaccurate and the ability to make transactions was severely hampered. Finansinspektionen identified the root cause as an unapproved change to the bank’s IT systems. The change was not malicious; it resulted from a breakdown in Swedbank’s internal change management controls.

While the regulatory decision does not go into the technical details of the system failure, it clearly sets out the procedural breakdown. The investigation found that Swedbank had not followed its own established change management process, a cornerstone of IT risk mitigation in the financial sector. The severity of the consequences, which reached a significant portion of the bank’s customer base and potentially put individual customers’ finances at risk, underscored how critical these controls are.

Regulatory Scrutiny and the Fine

Finansinspektionen’s decision to impose a substantial fine, while stopping short of revoking Swedbank’s banking license, reflects a calibrated response to the breach. The regulator stated, "It is therefore not relevant to withdraw Swedbank’s authorisation or issue the bank a warning. The sanction should instead be limited to a remark and an administrative fine." This indicates that while the breach was serious enough to warrant a significant financial penalty and a formal reprimand, it did not reach the threshold for the ultimate sanction of license withdrawal.

The SEK 850 million fine is large in absolute terms but small relative to the scale of a bank the size of Swedbank. The reputational damage and the internal reckoning likely to follow are arguably more consequential. The incident is a significant wake-up call for the bank’s leadership and for the IT operations teams responsible for robust risk and change controls.

Questioning the Efficacy of Traditional Change Management

The Swedbank case reignites a long-standing debate within the technology and finance sectors: the true effectiveness of traditional, often manual, change management processes. These processes typically involve extensive documentation, rigorous testing cycles, and formal approval from Change Advisory Boards (CABs) before any IT modification is implemented in a production environment.

The core argument against these antiquated methods is that strict adherence to process does not inherently guarantee safety or security. In complex, dynamic IT environments, ticking boxes on a checklist or obtaining multiple signatures can become a bureaucratic exercise that masks underlying risks. The emphasis shifts from ensuring the integrity and security of the change itself to ensuring compliance with the procedural steps.

Insights from the UK Financial Conduct Authority (FCA)

Further evidence supporting this critique comes from research conducted by the UK’s Financial Conduct Authority (FCA). A comprehensive analysis of over a million production changes revealed provocative findings about the efficacy of conventional change management. The FCA’s investigation highlighted the role of Change Advisory Boards (CABs) as a key assurance control. However, the data indicated that CABs approved an overwhelming majority of changes—over 90%—with some firms reporting that not a single change had been rejected in an entire year.

This statistic strongly suggests that CABs, in many instances, are not effectively functioning as a critical gatekeeper for risk mitigation. Instead, they appear to be rubber-stamping changes, often to avoid the repercussions of process non-compliance. The FCA’s research implies a systemic issue where the appearance of control, rather than actual risk reduction, becomes the primary objective. This is driven by a desire to avoid significant fines, which in jurisdictions like the UK and the US can be levied not only against organizations but also against individuals. The mantra becomes, "If I followed the process, it’s not my fault." However, this approach fails to address the fundamental question: are the systems truly secure?

The Research of Forsgren, Humble, and Kim

The findings resonate with established research in the DevOps community. Dr. Nicole Forsgren, Jez Humble, and Gene Kim, in their seminal 2018 book "Accelerate: Building and Scaling High Performing Technology Organizations," presented data-driven insights into software development and operational practices. Their research indicated that external approvals, such as those from a change manager or a CAB, were negatively correlated with key performance indicators like lead time, deployment frequency, and restore time. Crucially, these approvals showed no correlation with a reduced change fail rate.

In essence, the research posits that relying on external approval bodies for IT changes not only fails to enhance the stability of production systems but actively slows down the deployment process. The conclusion is stark: these traditional approval mechanisms are, in practice, worse than having no change approval process at all. This challenges the long-held assumption that layers of human oversight inherently lead to safer IT operations.
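To make those measures concrete, the sketch below computes them from a simple deployment log. The `Deployment` record and its fields are hypothetical, and restore time is approximated as the interval from a failed deployment to recovery; real measurement programmes define these boundaries more carefully.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class Deployment:
    """Hypothetical deployment record; field names are illustrative assumptions."""
    committed_at: datetime                   # when the change was committed
    deployed_at: datetime                    # when it reached production
    failed: bool = False                     # did it cause a production failure?
    restored_at: Optional[datetime] = None   # when service was restored, if it failed


def delivery_metrics(deploys: list[Deployment], period_days: int) -> dict:
    """Compute the four Accelerate measures over one reporting period."""
    if not deploys:
        raise ValueError("no deployments in period")
    lead_times = [d.deployed_at - d.committed_at for d in deploys]
    failures = [d for d in deploys if d.failed]
    # Assumption: restore time runs from the failed deployment to recovery.
    restore_times = [d.restored_at - d.deployed_at for d in failures if d.restored_at]
    return {
        "deploys_per_day": len(deploys) / period_days,
        "median_lead_time": median(lead_times),
        "change_fail_rate": len(failures) / len(deploys),
        "median_restore_time": median(restore_times) if restore_times else None,
    }
```

Nothing in this calculation rewards an approval step: an approval only shows up here as added lead time, which is consistent with the correlations the book reports.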

The Real Problem: Unaddressed Risk, Not Just Change

If traditional change management is ineffective, what then is the root cause of IT incidents like the Swedbank outage? The answer, according to emerging best practices, lies not in managing the change itself, but in effectively managing the risk associated with that change.

The FCA’s research offers a compelling alternative: frequent, smaller releases and agile delivery methodologies. The authority found that organizations deploying smaller, more frequent releases experienced higher change success rates compared to those with longer release cycles. Similarly, firms effectively utilizing agile delivery methodologies were less likely to encounter change-related incidents.

This suggests a paradigm shift: instead of focusing on extensive documentation and manual approvals for large, infrequent changes, the focus should be on making changes inherently less risky. This involves breaking down large deployments into smaller, manageable units that can be deployed more frequently. This approach reduces the "blast radius" of any potential failure.
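A toy model shows why batch size matters. Assume, purely for illustration, that each change independently has a 1% chance of being faulty, and that a failed release must be rolled back or investigated as a whole. The expected number of faulty changes is the same however they are batched; what differs is how many changes get caught up in each failure.

```python
def expected_changes_affected(total_changes: int, batch_size: int, p_fail: float) -> float:
    """Expected number of changes caught up in failed releases.

    Simplifying assumptions (not from the FCA data): each change fails
    independently with probability p_fail, and any failure takes down
    the whole release it shipped in.
    """
    if batch_size <= 0 or total_changes % batch_size:
        raise ValueError("batch_size must be positive and divide total_changes")
    releases = total_changes // batch_size
    p_release_fails = 1 - (1 - p_fail) ** batch_size
    return releases * p_release_fails * batch_size


for size in (100, 10, 1):
    print(size, round(expected_changes_affected(100, size, 0.01), 1))
# 100 63.4
# 10 9.6
# 1 1.0
```

Under these assumptions, one big release of 100 changes drags about 63 changes into a failure on average, while 100 single-change releases affect about one.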

The implication for Swedbank is profound. Even if the bank had followed its established processes to the letter, the underlying weakness might still have been insufficient risk management. In that case, a regulator could still have found fault with its risk mitigation, regardless of procedural adherence.

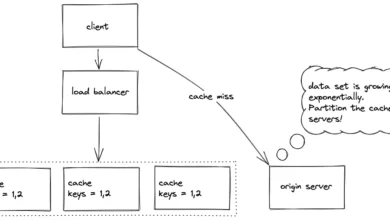

The "Streams Feeding the Lake" Analogy

To better understand this concept, consider software changes as streams feeding a lake: the production environment. Traditional change management tries to control what flows into the lake by placing a gate in the stream, but it often fails to monitor the lake itself. If changes can reach production by another route, or go undetected and unvalidated once there, the gate addresses only one part of the risk.

The only way to be confident that no undocumented or unauthorized changes have entered the production environment is continuous runtime monitoring. It provides visibility into the state of the lake and can detect anomalies or unauthorized modifications, however they arrived.
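A minimal sketch of monitoring the lake compares what is actually running with what was approved. It assumes a hypothetical manifest that maps each deployed file to the SHA-256 digest recorded when its change was approved; collecting the observed files from production hosts is left out.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Content digest used to identify an exact file version."""
    return hashlib.sha256(data).hexdigest()


def detect_drift(approved: dict[str, str], observed: dict[str, bytes]) -> dict[str, list[str]]:
    """Compare production ("the lake") with approved changes ("the stream").

    approved: path -> digest recorded at approval time (hypothetical manifest)
    observed: path -> bytes currently found in production
    """
    seen = {path: sha256_hex(content) for path, content in observed.items()}
    return {
        # Present in both, but the content is not what was approved.
        "modified": sorted(p for p in approved.keys() & seen.keys() if approved[p] != seen[p]),
        # Running in production with no approval record at all.
        "unexpected": sorted(seen.keys() - approved.keys()),
        # Approved but absent, e.g. a deployment that never completed.
        "missing": sorted(approved.keys() - seen.keys()),
    }
```

Any non-empty category is a change that bypassed, or was never fully delivered through, the gate in the stream, and would be a candidate for an alert.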

Echoes of Knight Capital

The Swedbank incident bears striking resemblances to the infamous Knight Capital Group trading incident of August 2012. During a deployment, new code reached only seven of Knight’s eight order-routing servers; when a repurposed flag was switched on, the eighth server executed long-obsolete code and sent millions of erroneous orders into the market, costing Knight more than USD 460 million in about 45 minutes. The SEC’s investigation into Knight Capital, like the Swedbank case, highlighted a critical lack of observability and traceability in how changes were implemented and managed in production. In both instances, an insufficient understanding of the changes actually applied prolonged and amplified the outages.

This raises a critical, and unsettling, question: how many other unapproved or risky changes have been made to production systems that, by sheer luck, did not result in a significant outage? Without robust monitoring and traceability, it is exceedingly difficult to know.
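One small traceability control relevant to the Knight case, where one of eight servers was left running old code, is to refuse to activate new behaviour until every server reports the intended release. The sketch below assumes each server exposes its version somehow (for example via a health endpoint); the server names, version strings, and in-memory flag store are hypothetical.

```python
class IncompleteRolloutError(Exception):
    """Raised when some servers are not running the intended release."""


def stragglers(expected_version: str, reported: dict[str, str]) -> list[str]:
    """Servers whose reported version differs from the intended release."""
    return sorted(host for host, version in reported.items() if version != expected_version)


def enable_flag(flags: dict[str, bool], flag: str, expected_version: str,
                reported: dict[str, str]) -> None:
    """Turn on a feature flag only after the whole fleet runs the expected release."""
    if not reported:
        raise IncompleteRolloutError("no servers reported a version")
    behind = stragglers(expected_version, reported)
    if behind:
        raise IncompleteRolloutError(f"{flag} not enabled; outdated servers: {behind}")
    flags[flag] = True
```

The check is cheap and mechanical, which is the point: it verifies the state of production directly rather than trusting that a deployment checklist was completed.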

Why Does Outdated Change Management Persist?

If traditional change management is demonstrably ineffective and even counterproductive, why does it remain so prevalent, particularly in the financial services sector? The answer lies in the historical evolution of software development and the inherent challenges of modernizing legacy systems.

Historically, software changes were infrequent, large, and inherently risky. Think of annual upgrades or monthly patch cycles. These significant batches of changes necessitated extensive testing, qualification, formal change management processes, and carefully scheduled maintenance windows. Checklists were developed to mitigate risks and ensure quality.

In the era before widespread adoption of test automation, continuous delivery pipelines, DevSecOps practices, and sophisticated rollback mechanisms, these traditional methods were the primary means of managing IT risk. However, the financial services industry is replete with complex legacy systems and outsourced IT operations. Implementing modern, agile practices in such environments is often technically challenging and economically prohibitive.

This reality forces many financial institutions to cling to outdated processes, not necessarily because they are effective, but because they provide a semblance of compliance and a means to deflect blame. The presence of legacy software, the inherent risks associated with outsourcing IT functions, and the sheer volume of changes in highly dynamic, distributed next-generation systems all contribute to a complex risk landscape. Perhaps it is time to acknowledge that these factors constitute a significant systemic risk within the financial sector itself.

Risk Management That Actually Works

The path forward lies in fundamentally re-evaluating how IT risk is managed. While checklists can play a supportive role, true risk reduction in IT operations hinges on making changes inherently less risky and moving towards smaller, more frequent deployments. This can be achieved through:

- Automating Change Controls: Integrating automated checks and validations directly into the deployment pipeline.

- Automating Documentation: Generating necessary documentation as a byproduct of automated processes, rather than a manual undertaking.

- Implementing Robust Monitoring and Alerting: Establishing systems that can detect unauthorized changes, performance anomalies, and potential security breaches in real-time.
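The first two practices can be combined in a single pipeline step: run the controls automatically and emit the change record as a by-product. In the sketch below, the `Change` fields and the three controls are illustrative assumptions rather than a regulatory standard; a real pipeline would populate them from its version control, CI, and deployment tooling.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class Change:
    """Hypothetical pipeline metadata for one change."""
    change_id: str
    commit: str
    author: str
    reviewers: list[str]
    tests_passed: bool
    rollback_plan: bool


def run_controls(change: Change) -> dict[str, bool]:
    """Automated checks evaluated in the pipeline instead of a manual CAB review."""
    return {
        "independent_review": any(r != change.author for r in change.reviewers),
        "tests_passed": change.tests_passed,
        "rollback_available": change.rollback_plan,
    }


def gate(change: Change) -> tuple[bool, str]:
    """Decide whether to deploy and produce the audit record at the same time."""
    controls = run_controls(change)
    approved = all(controls.values())
    record = {
        **asdict(change),
        "controls": controls,
        "approved": approved,
        "evaluated_at": datetime.now(timezone.utc).isoformat(),
    }
    return approved, json.dumps(record, sort_keys=True)
```

Because the record is generated from the same data the gate evaluated, the documentation cannot drift from what was actually checked; runtime monitoring then covers changes that never passed through the gate.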

This approach aligns with a DevSecOps philosophy, which aims to harmonize the speed and agility of software delivery with the stringent demands of cybersecurity and audit/compliance requirements. By focusing on technical solutions that build quality and security into the development and deployment process, organizations can move beyond the limitations of procedural compliance and achieve genuine risk reduction.

The Swedbank incident serves as a critical inflection point. It compels financial institutions to confront the limitations of their current change management practices and embrace a more modern, data-driven, and risk-centric approach to IT operations. The ultimate goal must be to ensure the stability and security of critical financial systems, not just the adherence to a checklist.