Python Decorators for Production Machine Learning Engineering: Enhancing Reliability, Observability, and Efficiency

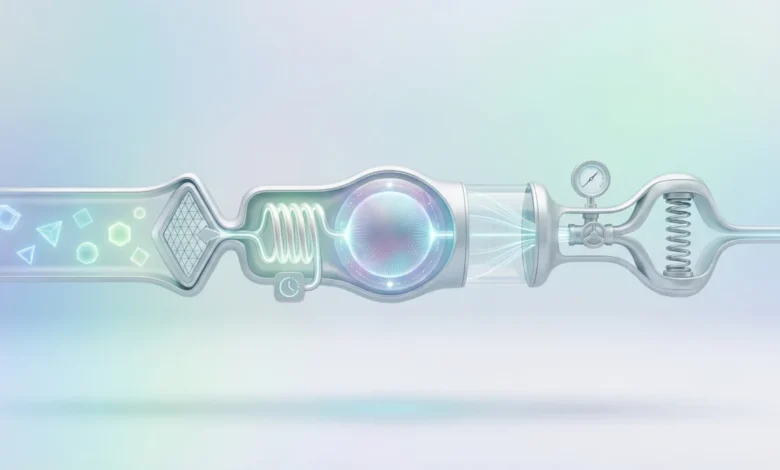

The deployment of machine learning models into live production environments represents a critical juncture where theoretical performance meets real-world operational demands. While the development of sophisticated ML algorithms often garners significant attention, ensuring these models function reliably, efficiently, and observably at scale presents a distinct set of challenges. Python decorators, often seen as mere syntactic sugar for simple function modifications, emerge as a powerful and elegant solution to address these complex production-grade requirements. They offer a modular, maintainable, and scalable approach to implementing cross-cutting concerns that are essential for the resilience and stability of machine learning systems.

The Evolving Landscape of Production ML Challenges

The journey of an ML model from a Jupyter notebook to a production service is fraught with complexities that extend far beyond model accuracy. In production, models interact with external services, consume finite computational resources, and process data streams that are subject to drift and anomalies. These real-world conditions introduce many potential failure points: network latency, API throttling, memory leaks involving large tensors, and unexpected input data formats. On top of that, systems must fail gracefully and recover autonomously, often outside business hours.

According to a 2021 survey by Algorithmia (now part of DataRobot), a significant share of machine learning models, as high as 60% at some organizations, never make it to production or fail shortly after deployment because of operational challenges. This underscores the gap between model development and successful MLOps (Machine Learning Operations). Ad hoc practices such as wrapping every external call in its own try/except block or scattering logging statements throughout the codebase quickly produce boilerplate, hurt readability, and raise maintenance costs in dynamic, data-intensive ML systems. This is precisely where the decorator pattern shines: it centralizes operational logic and keeps it separate from the core inference logic of the model.

Decorator Patterns for Operational Excellence in MLOps

The five decorator patterns discussed below are not merely academic exercises; they represent battle-tested strategies that address common, recurring pain points in production machine learning. By externalizing concerns like error handling, data validation, caching, resource management, and monitoring, decorators empower ML engineers to build more robust, efficient, and transparent systems.

1. Automatic Retry with Exponential Backoff: Fortifying External Service Interactions

Production machine learning pipelines are inherently distributed, relying heavily on interactions with various external services. This might include calling a feature store for real-time data, querying an embedding service, or invoking another microservice for pre-processing. These external dependencies are prone to transient failures: network hiccups, service overload, temporary unavailability, or even "cold starts" in serverless environments that introduce unexpected latency spikes. Without a robust retry mechanism, such transient issues can lead to cascading failures, service degradation, and excessive alerting.

A @retry decorator, either hand-rolled or taken from libraries like tenacity or backoff, offers an elegant solution to this pervasive problem. Instead of embedding try/except blocks with retry loops, delay calculations, and jitter in every function, engineers apply the decorator once. A hand-rolled version typically exposes parameters such as max_retries, backoff_factor (the base delay, which doubles after each failed attempt), and a tuple of retriable_exceptions; tenacity and backoff provide equivalent settings under their own names. When a decorated function raises one of the specified exceptions, the wrapper waits for an exponentially increasing duration and calls the function again. Exceptions outside that tuple, such as a ValueError from malformed input, propagate immediately, since retrying them cannot help. If all retries are exhausted, the original exception is re-raised so that higher-level error handling can deal with it.
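A minimal, standard-library-only sketch of the hand-rolled variant follows. The parameter names max_retries, backoff_factor, and retriable_exceptions mirror the prose; the default exception tuple and the delay formula backoff_factor * 2 ** attempt are assumptions of this sketch, and jitter is omitted for brevity.

```python
import functools
import logging
import time

logger = logging.getLogger(__name__)


def retry(max_retries=3, backoff_factor=0.5,
          retriable_exceptions=(ConnectionError, TimeoutError)):
    """Retry the wrapped call on the listed exceptions with exponential backoff.

    The wait before retry n (1-based) is backoff_factor * 2 ** (n - 1) seconds.
    """
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            for attempt in range(max_retries + 1):
                try:
                    return func(*args, **kwargs)
                except retriable_exceptions as exc:
                    if attempt == max_retries:
                        raise  # retries exhausted: re-raise the original exception
                    delay = backoff_factor * (2 ** attempt)
                    logger.warning("%s failed (%r); retry %d/%d in %.2fs",
                                   func.__name__, exc, attempt + 1, max_retries, delay)
                    time.sleep(delay)
        return wrapper
    return decorator
```

In a service, it might sit above a hypothetical fetch_features(entity_id) call to a feature store, e.g. `@retry(max_retries=5, backoff_factor=0.2)`.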

Exponential backoff mitigates the "thundering herd" problem, in which many clients simultaneously hammer a recovering service and prolong its downtime. Growing delays reduce the retry load and give the service time to stabilize; adding random jitter to each delay matters just as much, because clients that failed at the same moment would otherwise keep retrying in lockstep. Retries are only safe for idempotent calls, such as reads from a feature store. For model-serving endpoints that occasionally time out or become briefly unavailable, this single decorator can noticeably improve uptime and reduce false-positive alerts, turning potential outages into seamless recoveries. Robust error handling, including well-tuned retries, is foundational for any high-availability ML service.
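The jitter idea can be sketched with the "full jitter" scheme, in which each delay is drawn uniformly between zero and a capped exponential ceiling. The function name backoff_delays and its base and cap parameters are illustrative assumptions; tenacity's wait_random_exponential wait strategy follows a similar idea.

```python
import random


def backoff_delays(max_retries, base=0.5, cap=30.0, rng=None):
    """Return one sleep duration per retry using full jitter.

    Delay i is drawn uniformly from [0, min(cap, base * 2 ** i)], so clients
    that failed together spread their retries out instead of retrying in lockstep.
    """
    if rng is None:
        rng = random.Random()
    delays = []
    for attempt in range(max_retries):
        ceiling = min(cap, base * (2 ** attempt))  # exponential growth, capped
        delays.append(rng.uniform(0.0, ceiling))
    return delays
```

Two clients seeded differently get different schedules even though their ceilings (0.5, 1, 2, 4, ... seconds) are identical, which is what breaks up synchronized retries.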

2. Input Validation and Schema Enforcement: Guarding Against Data Quality Degradation

Data quality issues are arguably the most insidious and common failure mode in machine learning systems. Models are trained on features with specific distributions, types, and ranges. In production, upstream data pipelines are dynamic, and changes can inadvertently introduce null values, incorrect data types, out-of-range values, or unexpected data shapes (e.g., a feature vector suddenly having a different number of dimensions). By the time these issues manifest as poor model predictions or outright errors, the system may have been serving suboptimal or incorrect outputs for hours, impacting business metrics and user trust.

A @validate_input decorator acts as a crucial gatekeeper, intercepting function arguments before they reach the core model logic. This proactive defense mechanism can be designed to perform a variety of checks: verifying that a NumPy array matches an expected shape, ensuring all required dictionary keys are present, or confirming that values fall within acceptable numerical ranges. When validation fails, the decorator can either raise a descriptive error immediately, preventing corrupted data from propagating downstream, or return a safe default response, ensuring service continuity even under adverse data conditions.
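A lightweight version of this gatekeeper can be sketched with the standard library alone. It assumes the model function receives a feature dictionary as its first argument; the parameters required_keys, ranges, and fallback, and the InputValidationError class, are hypothetical names. For NumPy inputs, the same structure would compare array.shape against an expected tuple.

```python
import functools
import math


class InputValidationError(ValueError):
    """Raised when a request violates the expected feature contract."""


_RAISE = object()  # sentinel meaning "no fallback configured: raise instead"


def validate_input(required_keys, ranges=None, fallback=_RAISE):
    """Validate the feature dict passed as the first argument before inference.

    required_keys: keys that must be present with a non-None value.
    ranges: optional {key: (low, high)} inclusive numeric bounds.
    fallback: if given, returned instead of raising when validation fails.
    """
    ranges = ranges or {}

    def decorator(func):
        @functools.wraps(func)
        def wrapper(features, *args, **kwargs):
            problems = [f"missing or null feature {key!r}"
                        for key in required_keys if features.get(key) is None]
            for key, (low, high) in ranges.items():
                value = features.get(key)
                if value is None:
                    continue  # absence is reported above if the key is required
                if (not isinstance(value, (int, float)) or math.isnan(value)
                        or not low <= value <= high):
                    problems.append(f"{key!r}={value!r} is not a number in [{low}, {high}]")
            if problems:
                if fallback is _RAISE:
                    raise InputValidationError("; ".join(problems))
                return fallback  # safe default keeps the service responding
            return func(features, *args, **kwargs)
        return wrapper
    return decorator
```

A deployment using the fallback path would typically also log or count those responses, so that silent degradation stays visible.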

While a lightweight implementation checking basic array shapes and data types can prevent many common issues, integrating with more sophisticated validation libraries like Pydantic or Pandera (for DataFrame validation) can elevate this decorator’s power. Pydantic, for instance, allows for defining complex data schemas using Python type hints, automatically validating incoming data against these schemas. This pattern is not just about error prevention; it’s about maintaining the integrity of the model’s inputs, thereby preserving the integrity of its outputs. MLOps practitioners increasingly advocate for "data contracts" enforced at various stages of the pipeline, and this decorator serves as a critical enforcement point at the model inference boundary, offering a proactive defense against the "garbage in, garbage out" problem.
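To show the mechanism behind type-hint-driven validation without a third-party dependency, the sketch below enforces a dataclass schema using its annotations. It is a standard-library stand-in, not Pydantic: it handles only plain class annotations, with no Optional types, nested models, or custom validators, which are exactly what Pydantic adds. With Pydantic v2, the checking loop would reduce to a call such as Model.model_validate(payload) on a BaseModel subclass. LoanRequest and enforce_schema are hypothetical names.

```python
import dataclasses
import functools
import typing


@dataclasses.dataclass(frozen=True)
class LoanRequest:
    """Hypothetical request schema used only for illustration."""
    customer_id: str
    age: int
    income: float


def enforce_schema(schema):
    """Validate a dict payload against a dataclass's type hints, then pass an instance on.

    Only plain class annotations (str, int, float, ...) are supported.
    """
    hints = typing.get_type_hints(schema)
    names = [field.name for field in dataclasses.fields(schema)]

    def decorator(func):
        @functools.wraps(func)
        def wrapper(payload, *args, **kwargs):
            missing = [n for n in names if n not in payload]
            unexpected = sorted(set(payload) - set(names))
            if missing or unexpected:
                raise TypeError(f"schema mismatch: missing={missing}, unexpected={unexpected}")
            values = {}
            for name in names:
                expected, value = hints[name], payload[name]
                if isinstance(value, bool) and expected is not bool:
                    ok = False  # bool subclasses int; reject it for numeric fields
                elif expected is float:
                    ok = isinstance(value, (int, float))  # accept ints, store as float
                    value = float(value) if ok else value
                else:
                    ok = isinstance(value, expected)
                if not ok:
                    raise TypeError(f"{name}: expected {expected.__name__}, "
                                    f"got {type(value).__name__}")
                values[name] = value
            return func(schema(**values), *args, **kwargs)
        return wrapper
    return decorator
```

The decorated function receives a typed LoanRequest instance rather than a raw dict, so the model code can rely on the contract instead of re-checking it.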

3. Result Caching with TTL: Optimizing Resource Utilization and Latency

In real-time prediction serving, it is common to encounter repeated inputs. A user might refresh a recommendation page multiple times within a short session, or a batch job might reprocess overlapping feature sets for different entities. Running the same computationally expensive inference repeatedly for identical inputs is a wasteful expenditure of compute resources and introduces unnecessary latency. This not only impacts operational costs but also degrades the user experience.

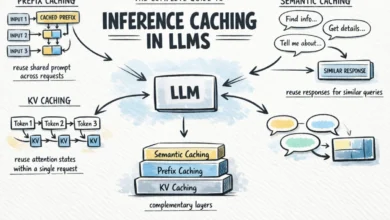

A @cache_result decorator equipped with a Time-To-Live (TTL) parameter provides an effective solution. This decorator stores the output of a function, keyed by its inputs, for a specified duration. Internally, it might maintain an in-memory dictionary or use an external distributed cache like Redis or Memcached, mapping a hash of the arguments to a (result, timestamp) pair. This requires the arguments to be hashable or serializable: a NumPy array, for example, is unhashable and must first be converted, e.g., to its raw bytes plus shape and dtype, before it can serve as a key. Before executing the decorated function, the wrapper checks whether a cached result exists for the given inputs and whether it is still within its TTL window. If so, it returns the cached value instantly, bypassing the expensive computation. Otherwise, it executes the function and updates the cache with the new result and the current timestamp.
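An in-memory version of this cache can be sketched in a few lines. The (result, timestamp) layout follows the description above; the injectable clock parameter (useful for testing), the cache_clear hook, and the choice of time.monotonic, which is unaffected by wall-clock adjustments, are assumptions of this sketch.

```python
import functools
import time


def cache_result(ttl_seconds=60.0, clock=time.monotonic):
    """Memoize results in memory, treating each entry as stale after ttl_seconds.

    All arguments must be hashable; convert arrays to bytes or tuples first.
    """
    def decorator(func):
        cache = {}  # key -> (result, timestamp)

        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            key = (args, tuple(sorted(kwargs.items())))
            now = clock()
            entry = cache.get(key)
            if entry is not None and now - entry[1] < ttl_seconds:
                return entry[0]  # fresh hit: skip the expensive call
            result = func(*args, **kwargs)
            cache[key] = (result, now)
            return result

        wrapper.cache_clear = cache.clear  # manual invalidation, e.g. after a model reload
        return wrapper
    return decorator
```

The sketch keeps expired entries until their key is requested again, so memory grows with the number of distinct inputs, and two concurrent misses on the same key may both run the model. A production version would bound the cache size or delegate expiry to Redis (for example, SET with the EX option).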

The TTL component is paramount for making this approach production-ready. While caching reduces latency and resource consumption, predictions can become stale, especially when underlying features or model weights change. The TTL ensures that cached results are periodically invalidated, forcing fresh computation and preventing the serving of outdated predictions. In many real-time scenarios, even a short TTL of 30 seconds to a few minutes can significantly reduce redundant computation, particularly for endpoints with high request volumes or for models with moderate inference times. This optimization is crucial for achieving cost efficiency and meeting strict latency SLAs in high-throughput ML systems.

4. Memory-Aware Execution: Preventing Resource Exhaustion in Containerized Environments

Modern machine learning models, particularly large language models (LLMs) and complex deep neural networks, are notorious for their large memory footprints. When multiple models run concurrently, or when large batches of data are processed, it is easy to exceed available RAM, leading to out-of-memory (OOM) errors and service crashes. These failures are often intermittent and hard to diagnose, because they depend on workload variability, garbage collection timing, and the allocation behavior of the ML framework and its memory allocator.

A @memory_guard decorator provides a crucial layer of defense against such resource exhaustion. Using a system monitoring library such as psutil, the decorator inspects memory before the decorated function executes, reading current usage and comparing it against a configurable threshold (e.g., 85% or 90% of total RAM). If memory is constrained, it can take several proactive actions: triggering garbage collection with gc.collect() to reclaim unreachable objects, logging a warning to alert operators, delaying execution until more memory becomes available, or raising a custom exception. The serving layer can translate that exception into a load-shedding response (such as HTTP 503) or a failing readiness probe, which lets an orchestrator like Kubernetes route traffic elsewhere or restart the pod; Kubernetes itself never sees the Python exception.
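A minimal sketch of the guard follows, assuming psutil is installed for the default reader (it is imported lazily, only when that reader runs). It implements two of the listed actions, garbage collection with a warning and raising an exception; delaying execution is left out. The read_usage hook, MemoryPressureError, and the 85% default are illustrative assumptions.

```python
import functools
import gc
import logging

logger = logging.getLogger(__name__)


class MemoryPressureError(RuntimeError):
    """Raised instead of running the call when memory stays above the threshold."""


def _host_memory_percent():
    import psutil  # third-party; imported lazily so custom readers do not need it
    return psutil.virtual_memory().percent


def memory_guard(threshold_percent=85.0, read_usage=_host_memory_percent):
    """Check memory usage (in percent) before each call of the wrapped function."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(*args, **kwargs):
            usage = read_usage()
            if usage >= threshold_percent:
                found = gc.collect()  # returns the number of unreachable objects found
                logger.warning("%s: memory at %.1f%% (threshold %.1f%%), gc found %d "
                               "unreachable objects",
                               func.__name__, usage, threshold_percent, found)
                usage = read_usage()  # re-check after collection
                if usage >= threshold_percent:
                    raise MemoryPressureError(
                        f"{func.__name__} refused: memory at {usage:.1f}% "
                        f">= {threshold_percent:.1f}%")
            return func(*args, **kwargs)
        return wrapper
    return decorator
```

A request handler would catch MemoryPressureError and return HTTP 503, so that the caller or load balancer can retry against a healthier replica.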

This pattern is exceptionally valuable in containerized environments, such as those orchestrated by Kubernetes, where memory limits are strictly enforced. Exceeding these limits triggers the OOM killer, which unceremoniously terminates the offending container, leading to service disruption. A memory guard gives the application an opportunity to degrade gracefully, signal distress, or recover before reaching that critical point. It shifts the paradigm from reactive crash recovery to proactive resource management, enhancing the overall stability and predictability of ML services running in resource-constrained environments.
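One practical caveat for containers: psutil.virtual_memory() reports system-wide figures, and inside a Linux container these usually describe the whole node rather than the container's memory limit, so a host-based guard may never fire before the OOM killer does. Under cgroup v2, a container's usage and limit are exposed as memory.current and memory.max under /sys/fs/cgroup, with memory.max containing the string "max" when no limit is set. The helper below is a sketch under that assumption (cgroup v1 uses different file names and is not handled); its name and cgroup_root parameter are hypothetical, and it could serve as the usage reader of a memory guard.

```python
from pathlib import Path


def cgroup_memory_percent(cgroup_root="/sys/fs/cgroup"):
    """Return container memory usage as a percentage of its cgroup v2 limit.

    Returns None when the files are missing (e.g. cgroup v1, non-Linux)
    or when no memory limit is configured.
    """
    root = Path(cgroup_root)
    try:
        current = int((root / "memory.current").read_text())
        limit_text = (root / "memory.max").read_text().strip()
        if limit_text == "max":  # unlimited cgroup: no meaningful percentage
            return None
        limit = int(limit_text)
    except (OSError, ValueError):
        return None
    return 100.0 * current / limit if limit > 0 else None
```

memory.current also counts page cache that the kernel can reclaim, so this figure can overstate pressure; Kubernetes' working-set metric subtracts inactive file pages for that reason.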

5. Execution Logging and Monitoring: Achieving Comprehensive Observability

Observability in machine learning systems extends far beyond simple HTTP status codes. Engineers require deep visibility into inference latency, anomalous input patterns, subtle shifts in prediction distributions, and performance bottlenecks within the model pipeline. While ad-hoc logging statements are common during development, they quickly become inconsistent, difficult to parse, and challenging to maintain as systems scale. This leads to a fragmented view of system health, making debugging and performance optimization a significant hurdle.

A @monitor decorator provides a standardized, automated mechanism for capturing critical operational data. It wraps functions with structured logging that automatically records execution time, summaries of input data (e.g., shape, basic statistics), characteristics of the output predictions (e.g., mean, standard deviation, confidence scores), and detailed exception information if an error occurs. The decorator can integrate with existing logging frameworks (e.g., Python’s logging module), expose metrics for time-series databases like Prometheus to scrape, or send events to observability platforms such as Datadog, Grafana, or the ELK stack.

The decorator timestamps the start and end of execution, logs exceptions before re-raising them, and can optionally record custom metrics (e.g., model_inference_latency_seconds, prediction_output_distribution) in a monitoring backend. The real power of this pattern emerges when it is applied consistently across the entire inference pipeline, from data ingress to final prediction output. This consistency yields a unified, searchable, and actionable record of predictions, execution times, resource utilization, and failures. When issues arise, engineers have rich, contextual diagnostic information instead of sparse, disparate log entries, which significantly accelerates root cause analysis and resolution. This consistent observability is a cornerstone of reliable MLOps.
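A compact sketch of such a decorator, using only the standard library, emits one JSON log line per call. The summary fields, the logger name ml.monitor, and the helper _summarize are assumptions of this sketch; for brevity only positional arguments are summarized, and non-numeric values get a type and length summary instead of statistics.

```python
import functools
import json
import logging
import statistics
import time

logger = logging.getLogger("ml.monitor")


def _summarize(value):
    """Return a small, log-friendly summary instead of the raw payload."""
    if (isinstance(value, (list, tuple)) and value
            and all(isinstance(v, (int, float)) for v in value)):
        return {"len": len(value), "mean": statistics.fmean(value),
                "std": statistics.pstdev(value)}
    if isinstance(value, (list, tuple, dict, str)):
        return {"type": type(value).__name__, "len": len(value)}
    return {"type": type(value).__name__}


def monitor(func):
    """Log latency, input/output summaries, and failures as one JSON line per call."""
    @functools.wraps(func)
    def wrapper(*args, **kwargs):
        record = {"function": func.__qualname__,
                  "inputs": [_summarize(a) for a in args]}  # positional args only
        start = time.perf_counter()
        try:
            result = func(*args, **kwargs)
        except Exception as exc:
            record.update(status="error", error=repr(exc),
                          latency_s=round(time.perf_counter() - start, 6))
            logger.exception(json.dumps(record))  # also attaches the traceback
            raise  # monitoring must not swallow the failure
        record.update(status="ok", output=_summarize(result),
                      latency_s=round(time.perf_counter() - start, 6))
        logger.info(json.dumps(record))
        return result
    return wrapper
```

To feed a metric such as model_inference_latency_seconds, the same wrapper could additionally pass the measured latency to the observe() method of a prometheus_client Histogram.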

Broader Implications and Strategic Value

These five decorator patterns collectively represent a shift in how operational concerns are integrated into machine learning systems. They embody a philosophy of keeping core machine learning logic clean and focused on computation, while pushing operational responsibilities to the edges of the function via modular, reusable components.

- DevOps and MLOps Synergy: Decorators bridge the gap between development and operations by providing a clear contract for how functions should behave in a production setting. They facilitate a smoother handover from ML researchers to MLOps engineers, ensuring that operational best practices are baked into the code from the outset.

- Standardization and Maintainability: For large teams and complex projects, decorators enforce a consistent approach to common problems. This reduces cognitive load, improves code readability, and simplifies maintenance, as changes to operational logic only need to be made in one place (the decorator definition) rather than across numerous function implementations.

- Testability: By separating concerns, decorators make individual functions easier to test. The core logic can be tested in isolation, while the decorator’s behavior can be tested separately, leading to more robust and reliable codebases.

- Scalability: As ML systems grow, the ability to add new operational features (e.g., A/B testing, circuit breaking) by simply attaching a new decorator, rather than modifying existing function bodies, significantly enhances scalability and agility.

- Future Trends: The principles underpinning these decorators are adaptable. As MLOps evolves, we may see more sophisticated decorators that leverage AI for adaptive resource management, anomaly detection within the decorator itself, or even automated decorator generation based on deployment context.
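The testability point relies on functools.wraps, which sets __wrapped__ on the wrapper to the original function (inspect.unwrap follows that attribute). A test can therefore exercise the core logic without the operational layer and test the decorator's behavior separately. The names audit and normalize are hypothetical and used only for this illustration.

```python
import functools


def audit(func):
    """Hypothetical decorator: record the name of each call that goes through it."""
    @functools.wraps(func)  # copies metadata and sets wrapper.__wrapped__ = func
    def wrapper(*args, **kwargs):
        wrapper.calls.append(func.__name__)
        return func(*args, **kwargs)
    wrapper.calls = []
    return wrapper


@audit
def normalize(values):
    """Core logic: scale values so that they sum to 1."""
    total = sum(values)
    return [v / total for v in values]
```

Calling normalize.__wrapped__ tests the arithmetic alone, while calling normalize tests that the decorator does its job.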

Conclusion

The successful deployment and sustained operation of machine learning models in production environments demand more than just accurate algorithms; they require robust engineering practices. Python decorators offer an elegant, powerful, and highly effective mechanism for embedding critical operational concerns directly into the fabric of ML codebases. By abstracting away complexities like error handling, data validation, caching, resource management, and comprehensive monitoring, decorators empower machine learning engineers to build systems that are not only performant but also resilient, observable, and efficient. Embracing these patterns can significantly reduce operational overhead, improve system reliability, and ultimately allow teams to focus more on innovating with machine learning and less on firefighting production issues. For any organization serious about MLOps, understanding and strategically applying these decorator patterns is no longer optional but a fundamental requirement for success.