The Enterprise AI Inflection Point: Moving Beyond Models to Master Contextual Understanding

Enterprise Artificial Intelligence (AI) has reached a critical juncture, moving beyond the initial excitement and experimentation with Large Language Models (LLMs) to confront a fundamental reality: superior models alone do not guarantee superior outcomes. Engineering leaders are increasingly recognizing that true value in enterprise AI hinges not just on the sophistication of the AI model itself, but on its ability to integrate and leverage the intricate, dynamic context of the organization it serves. This paradigm shift is fundamentally reshaping how businesses architect and deploy AI systems, propelling them from simple copilots to the vision of fully autonomous agents.

The distinction between basic contextual awareness and the deep, mission-critical understanding that enterprises require is becoming starkly apparent. LLMs no longer operate in a vacuum, but grasping and applying complex organizational knowledge remains a significant challenge for them.

The Limitations of Fine-Tuning and the Promise of RAG

For many development teams, fine-tuning LLMs appears to be the logical next step in imbuing AI with an organization’s specific knowledge. The promise of customization, domain alignment, and enhanced output quality is alluring. However, in practice, fine-tuning often falls short of these lofty expectations. The core issue lies in its inability to effectively encode an organization’s proprietary codebases, enforce stringent security policies, or adapt to evolving development workflows. At best, fine-tuning enables models to mimic patterns found within a limited dataset. At worst, it introduces substantial operational burdens. These include the need for larger, more resource-intensive models, frequent retraining cycles, complex compliance considerations, and an inherent brittleness that makes systems vulnerable to changes in the underlying enterprise environment.

The fundamental challenge stems from the inherently dynamic nature of enterprise knowledge. This knowledge is not a static entity but a living tapestry woven across diverse repositories, extensive documentation, intricate APIs, and deeply ingrained institutional practices. Attempting to "bake" this fluid information directly into a model’s weights is fundamentally misaligned with the way modern software systems operate and evolve.

Retrieval-Augmented Generation (RAG): A Step Forward, But Not the Destination

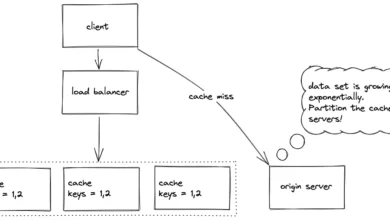

The enterprise AI landscape is increasingly converging on Retrieval-Augmented Generation (RAG) as the dominant architectural pattern. This approach shifts the focus from embedding knowledge directly into model weights to dynamically retrieving relevant information at runtime. RAG systems access and utilize data from a wide array of sources, including codebases, documentation, test suites, and internal operational systems.

This transition from a static training paradigm to a dynamic retrieval mechanism offers significant advantages. It improves output accuracy by grounding AI responses in real-time, current data. Adaptability is enhanced, as systems can evolve without the need for costly and time-consuming retraining. Furthermore, it leads to cost reductions by mitigating the necessity for repeated fine-tuning cycles.
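
To make the retrieve-at-runtime idea concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a production design: the corpus, file paths, and function names are all hypothetical, and a simple bag-of-words cosine similarity stands in for the embedding search a real RAG system would typically use.

```python
import math
import re
from collections import Counter

# Hypothetical in-memory corpus standing in for codebases, docs, and runbooks.
DOCUMENTS = {
    "docs/payments.md": "The payments service must call the ledger API through the gateway client.",
    "docs/style.md": "All Python services use structured logging and type hints.",
    "runbooks/deploy.md": "Deployments to production require a passing test suite and a change ticket.",
}


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two token-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in set(a) & set(b))
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query, documents, k=2):
    """Return up to k source names whose text overlaps the query, best first."""
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(text))), source) for source, text in documents.items()]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [source for score, source in scored[:k] if score > 0]


def build_prompt(query, documents, k=2):
    """Assemble a prompt that grounds the question in the retrieved passages."""
    sources = retrieve(query, documents, k)
    context = "\n".join(f"[{s}] {documents[s]}" for s in sources)
    return f"Context:\n{context}\n\nQuestion: {query}"


if __name__ == "__main__":
    print(build_prompt("How should the payments service call the ledger?", DOCUMENTS))
```

Because the knowledge lives in `DOCUMENTS` rather than in model weights, updating a document changes the next answer without any retraining, which is the property the paragraph above describes.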

However, RAG and true contextual understanding are not synonymous. RAG excels at helping AI find information, but it does not, on its own, equip the AI to understand how a system works or what its actions imply. This distinction is where many current AI development efforts begin to falter. When organizations rely solely on RAG, AI systems tend to reproduce retrieved patterns, sometimes in places where they do not apply. They struggle to discern when their suggestions might violate architectural standards, established contracts, or other critical requirements. Consequently, human code review can take longer, as developers must bridge the gaps in the AI’s contextual understanding.

The Emergence of the Enterprise Context Layer

To address these limitations, a new architectural layer is emerging as essential: the enterprise context layer. Just as databases revolutionized structured data and cloud computing abstracted infrastructure, AI systems now demand a dedicated layer for organizing and delivering enterprise-specific context. Without this foundational element, even the most sophisticated AI agents will inevitably fall short of their potential.

Empirical data underscores the profound gap that currently exists. A comprehensive MIT study released last year revealed a startling statistic: 95% of enterprise AI initiatives failed to deliver any return on investment (ROI). The primary culprit identified by the researchers was the inability of most Generative AI systems to retain feedback, adapt to context, or improve over time. The study explicitly concluded that "model quality fails without context."

More recent research further illuminates the limitations of generic AI tools. A study conducted by Salesforce and YouGov found that 76% of workers reported that the AI tools they favored lacked access to company data or job-specific context, the very information needed to handle business-specific tasks. Conversely, 60% of workers believe that giving AI tools secure access to company data would improve their work quality. Similarly, 59% anticipate faster task completion and 62% expect to spend less time searching for information.

The implication is unequivocal: AI systems that remain disconnected from a comprehensive understanding of their enterprise environment cannot be reliably entrusted with mission-critical operations.

Context as the Differentiator for AI Agents

The challenge of context becomes even more pronounced with the advent of AI agents. Unlike copilots, which are designed to assist with discrete, well-defined tasks, AI agents are envisioned to autonomously execute end-to-end workflows. This could involve writing complex code, implementing new features, or orchestrating intricate system interactions. For these agents to perform such tasks reliably and effectively, they must possess a level of contextual awareness comparable to that of experienced human employees.

This deep contextual understanding encompasses a broad spectrum of knowledge, including:

- Coding Standards and Architectural Patterns: Agents must comprehend established coding conventions, design patterns, and the underlying architectural principles that govern the enterprise’s software ecosystem.

- Cross-Repository Dependencies: Navigating the complex web of dependencies that exist across different code repositories, microservices, and internal systems is crucial for successful integration and modification.

- Approved Tools, Libraries, and APIs: Agents need to be aware of and adhere to the organization’s approved stack of tools, libraries, and APIs, ensuring compatibility and security.

- Downstream Impact Analysis: A sophisticated agent must be capable of anticipating the ripple effects of any proposed change across various systems and components, mitigating unintended consequences.
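
As one illustration of the last item, downstream impact analysis can be framed as a traversal of a reversed dependency graph. The sketch below assumes a hypothetical dependency map (the service names and the `DEPENDS_ON` structure are invented for the example); in practice such a map would be derived from build files, manifests, or service catalogs.

```python
from collections import deque

# Hypothetical dependency map: each component lists what it depends on.
DEPENDS_ON = {
    "checkout-ui": ["orders-api"],
    "orders-api": ["payments-lib", "inventory-api"],
    "billing-jobs": ["payments-lib"],
    "inventory-api": [],
    "payments-lib": [],
}


def invert(depends_on):
    """Build the reverse map: component -> set of components that depend on it."""
    dependents = {name: set() for name in depends_on}
    for name, deps in depends_on.items():
        for dep in deps:
            dependents.setdefault(dep, set()).add(name)
    return dependents


def downstream_impact(changed, depends_on):
    """Return every component that directly or transitively depends on `changed`."""
    dependents = invert(depends_on)
    seen = set()
    queue = deque([changed])
    while queue:
        current = queue.popleft()
        for dependent in dependents.get(current, ()):
            if dependent not in seen:
                seen.add(dependent)
                queue.append(dependent)
    seen.discard(changed)  # guard against cycles that lead back to the start
    return sorted(seen)


if __name__ == "__main__":
    print(downstream_impact("payments-lib", DEPENDS_ON))
```

An agent that consults a map like this before proposing a change to `payments-lib` can flag that `orders-api`, `billing-jobs`, and `checkout-ui` may all need review, rather than treating the edit as local.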

In essence, context provides the critical understanding that enterprises require from their AI systems. It transforms AI from a tool that merely generates plausible outputs into a system that produces reliable, actionable, and defensible results. Context enables AI to reason about architecture, not just syntax; to adapt intelligently to change, not just recall pre-existing patterns.

This shift reorients the focus of enterprise AI development. The emphasis moves away from model selection and towards system design, where integrating rich, dynamic context is paramount.

Investing in Context-Aware AI Systems

The path forward for enterprises seeking to use AI effectively involves strategic investment in systems designed to ingest, process, and apply organizational context. This means prioritizing solutions that offer:

- Dynamic Knowledge Graph Construction: Building and continuously updating knowledge graphs that map relationships between code, documentation, developers, and operational systems. This provides a structured representation of enterprise knowledge.

- Real-time Observability and Telemetry Integration: Connecting AI systems to real-time operational data, logs, and performance metrics to understand system behavior and identify issues.

- Policy and Governance Enforcement: Embedding organizational policies, security protocols, and compliance requirements directly into the AI’s decision-making framework.

- Human-in-the-Loop Feedback Mechanisms: Designing systems that effectively capture and integrate feedback from human experts, enabling continuous learning and refinement.

- Version Control and Change Management Integration: Seamlessly integrating with existing version control systems to understand the history of code changes and the rationale behind them.

- API and Service Catalog Integration: Providing AI with a clear understanding of available APIs, their functionalities, and their usage patterns.
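
To give a sense of what policy enforcement might look like at its simplest, the following Python sketch checks AI-generated code against an allowlist of approved modules before it is accepted. The allowlist contents and function names are assumptions made for illustration; a real governance layer would cover far more than imports.

```python
import ast

# Hypothetical allowlist of modules the organization has approved.
APPROVED_MODULES = {"json", "logging", "requests"}


def imported_modules(source):
    """Return the top-level module names imported by a piece of Python source."""
    tree = ast.parse(source)
    modules = set()
    for node in ast.walk(tree):
        if isinstance(node, ast.Import):
            for alias in node.names:
                modules.add(alias.name.split(".")[0])
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # level == 0 means an absolute import; relative imports are skipped.
            modules.add(node.module.split(".")[0])
    return modules


def policy_violations(source, approved=APPROVED_MODULES):
    """Return the sorted list of imported modules that are not on the allowlist."""
    return sorted(imported_modules(source) - set(approved))


if __name__ == "__main__":
    generated = "import json\nimport pickle\nfrom os.path import join\n"
    print(policy_violations(generated))
```

A check of this kind runs before human review, so reviewers see only suggestions that already respect the approved stack.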

The underlying principle is clear: in the realm of modern enterprise AI, a model that is not deeply grounded in its specific operational environment is not truly intelligent. It is, at best, making an educated guess, and at worst, operating with a dangerous lack of awareness that can lead to costly errors and missed opportunities. The future of AI in the enterprise is not solely about building better models; it is about building smarter systems that understand the complex, ever-evolving world in which they operate.