AI-Powered Synthetic Users Revolutionize Open-Source Documentation Testing

The challenge of maintaining accurate and functional documentation for early-stage open-source projects has long been a silent hurdle for developer adoption. For projects like Drasi, a CNCF sandbox project designed to detect data changes and trigger immediate reactions, the initial interaction a developer has with the project is often through its "Getting Started" guide. When these guides falter—commands fail, outputs don’t match, or steps are ambiguous—most users, facing a steep learning curve, will simply abandon the project rather than file a bug report. This reality recently struck the small, four-engineer team supporting Drasi within Microsoft Azure’s Office of the Chief Technology Officer, highlighting a critical gap between their rapid development pace and their ability to manually validate their comprehensive tutorials.

The tipping point for the Drasi team came in late 2025. An update to GitHub’s Dev Container infrastructure raised the minimum required Docker version and, as a side effect, broke the Docker daemon connection. That single dependency change rendered every Drasi tutorial non-functional. Because the team relied heavily on manual testing, it was initially unaware of how widespread the breakage was. During this period, any new developer trying to onboard with Drasi would have been blocked, with frustration and abandonment the likely result. The incident led to a realization: with advanced AI coding assistants, documentation testing no longer has to be an occasional manual check and can instead be treated as a continuous monitoring problem.

The Root Causes of Documentation Degradation

The fragility of technical documentation, particularly for dynamic software projects, stems from two primary issues: the "curse of knowledge" and "silent drift."

The Curse of Knowledge: Implicit Contexts and Unspoken Assumptions

Documentation is often authored by experienced developers who have a deep, implicit understanding of the project’s inner workings. A phrase like "wait for the query to bootstrap" seems straightforward to an insider, who knows to run `drasi list query` and watch for a "Running" status or, better still, to use the dedicated `drasi wait` command. A new user, or an AI agent tasked with following instructions, lacks this context. Both interpret the documentation literally and get stuck in the gap between the documented "what" and the unstated "how."
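To make the unstated "how" concrete, here is a minimal sketch of what "wait for the query to bootstrap" might expand to: polling until a status appears. The command `drasi list query` and the "Running" status come from the text above; the helper names, the timeout values, and the assumption that the word "Running" appears verbatim in the command's output are illustrative only.

```python
import subprocess
import time
from typing import Callable


def wait_for_status(read_status: Callable[[], str], expected: str = "Running",
                    timeout_s: float = 120.0, interval_s: float = 2.0,
                    sleep: Callable[[float], None] = time.sleep,
                    clock: Callable[[], float] = time.monotonic) -> bool:
    """Poll read_status() until its output contains `expected` or time runs out."""
    deadline = clock() + timeout_s
    while True:
        if expected in read_status():
            return True
        if clock() >= deadline:
            return False
        sleep(interval_s)


def list_queries() -> str:
    """Run the CLI command named in the text and return its raw output.

    Assumption: the output includes the status word as plain text.
    """
    result = subprocess.run(["drasi", "list", "query"],
                            capture_output=True, text=True, check=False)
    return result.stdout
```

Calling `wait_for_status(list_queries)` would then block until the status shows up or the timeout expires. The substring check is deliberately crude: with several queries listed, one running query would satisfy it, so a real check would need to parse the row for the query of interest.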

Silent Drift: The Insidious Erosion of Accuracy

Unlike code, which typically fails loudly with build errors when fundamental changes occur, documentation often degrades silently. Renaming a configuration file in a codebase will immediately halt the build process. Conversely, documentation that continues to reference an old filename will not trigger an error. This discrepancy, known as silent drift, accumulates over time, leading to user confusion and a growing disconnect between the written guide and the actual functioning software.

This problem is amplified for tutorials that involve setting up complex sandbox environments using tools like Docker, k3d, and sample databases. When any upstream dependency experiences an update—a deprecated flag, a version bump, or a change in default behavior—the tutorials can break without any immediate, visible indication.
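The renamed-file case is one form of silent drift that a simple script can surface. The sketch below is not the Drasi team's approach (they use an AI agent, described next); it only illustrates the idea of making documentation "fail loudly". The regular expression, the list of extensions, and both function names are assumptions chosen for the example.

```python
import re
from pathlib import Path

# Hypothetical pattern: treat any token ending in one of these extensions
# as a reference to a file in the repository.
FILE_REF = re.compile(r"[\w./-]+\.(?:ya?ml|json|sh|sql)\b")


def find_stale_references(doc_text: str, existing_files: set[str]) -> list[str]:
    """Return file paths mentioned in doc_text that are not in existing_files."""
    referenced = sorted(set(FILE_REF.findall(doc_text)))
    return [ref for ref in referenced if ref not in existing_files]


def list_repo_files(repo_root: Path) -> set[str]:
    """Collect repository files as POSIX-style paths relative to repo_root."""
    return {p.relative_to(repo_root).as_posix()
            for p in repo_root.rglob("*") if p.is_file()}
```

A check like this catches only the narrow case of missing files. It cannot detect a deprecated flag or a changed default in an upstream dependency, which is why executing the tutorial end to end remains necessary.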

The Innovative Solution: Employing AI Agents as Synthetic Users

To combat this persistent challenge, the Drasi team reframed tutorial testing as a simulation problem. They developed an AI agent designed to embody the persona of a "synthetic new user." This agent possesses three crucial characteristics: it operates with no prior knowledge of the project, it follows instructions literally, and it can execute complex actions within a controlled environment.

The Technological Stack: GitHub Copilot CLI and Dev Containers

The implemented solution leverages a powerful combination of technologies: GitHub Actions for automation, Dev Containers for reproducible environments, Playwright for browser interaction, and crucially, the GitHub Copilot CLI. The demanding infrastructure requirements of Drasi’s tutorials, which involve spinning up multiple containerized services, necessitated a testing environment that precisely mirrored the end-user experience. The team emphasized that if users were expected to run tutorials within a specific Dev Container on GitHub Codespaces, their automated tests must replicate that exact environment.

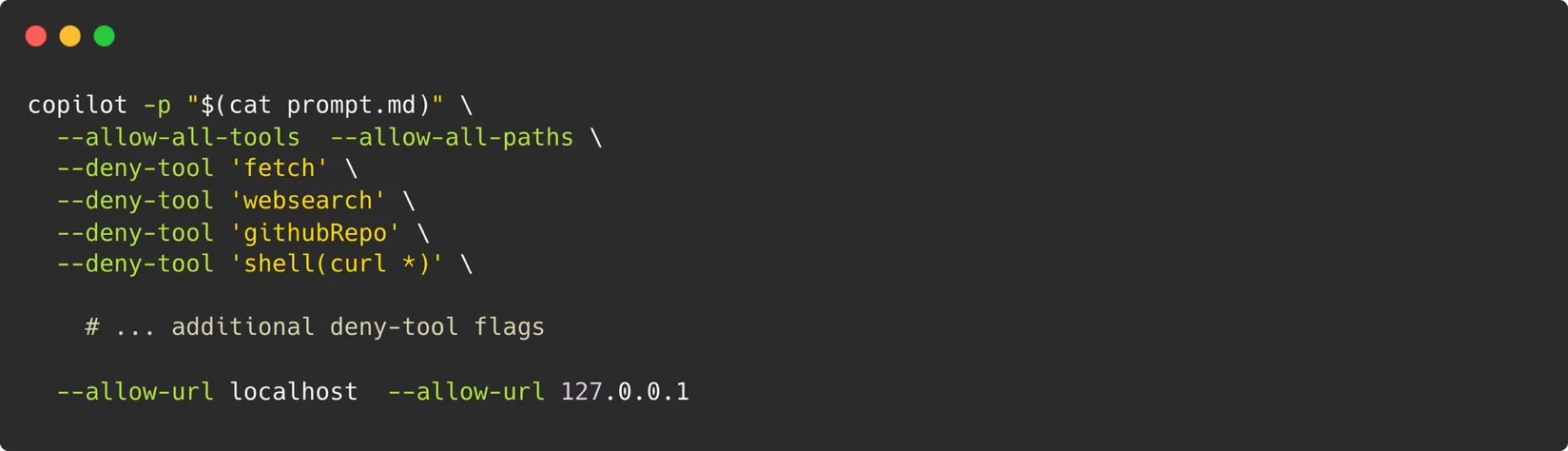

The Architecture: Simulating User Interaction

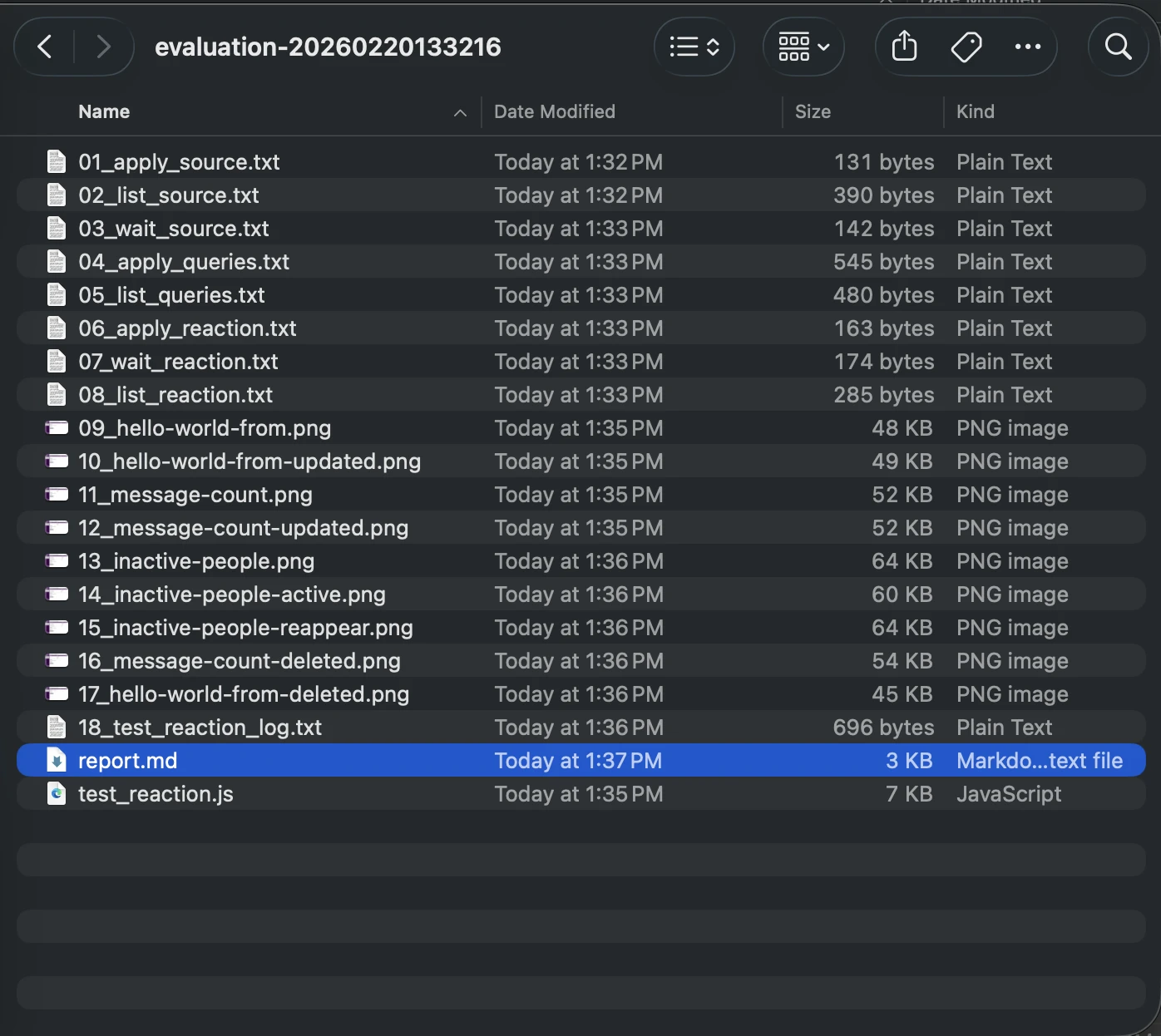

Within the isolated Dev Container, the system invokes the Copilot CLI with a specialized system prompt, which the team has published in full on GitHub. The prompt configures the agent to execute terminal commands, write files, and script browser interactions, mimicking a human developer working in a terminal. To make the simulation of a real user’s journey more complete, Playwright is installed in the Dev Container so that the agent can open webpages, interact with them as the tutorial directs, and capture screenshots for visual verification.

The Security Model: Containerization as the Primary Defense

A robust security model underpins this operation, centered on the principle of the container serving as the definitive boundary. Instead of attempting to restrict individual commands, which is a complex and often futile endeavor given the agent’s need to execute arbitrary scripts, the team treats the entire Dev Container as an isolated sandbox. Strict controls are enforced on what can traverse these boundaries. This includes limiting outbound network access solely to localhost, utilizing a Personal Access Token (PAT) with only "Copilot Requests" permission, ensuring ephemeral containers are destroyed after each run, and implementing a maintainer-approval gate for triggering workflows.

Addressing Non-Determinism in AI-Driven Testing

One of the significant hurdles in AI-based testing is the inherent non-determinism of Large Language Models (LLMs). These models are probabilistic, meaning their outputs can vary; an agent might retry a command one time and abandon it the next. The Drasi team tackled this challenge through a multi-stage approach:

- Retry with Model Escalation: A three-stage retry mechanism with model escalation is employed. A run starts with Gemini-Pro and, on failure, is retried with Claude Opus, increasing the likelihood of a successful execution.

- Semantic Screenshot Comparison: Instead of relying on pixel-perfect matching, which is brittle and prone to failure due to minor visual variations, the system utilizes semantic comparison for screenshots. This allows for more resilient visual verification.

- Core Data Field Verification: The system verifies key data fields rather than volatile values that might change between runs. This ensures that the essential functionality is validated.

- Tight Prompt Constraints: A list of strict constraints is embedded in the prompts to keep the agent from wandering into open-ended debugging sessions.

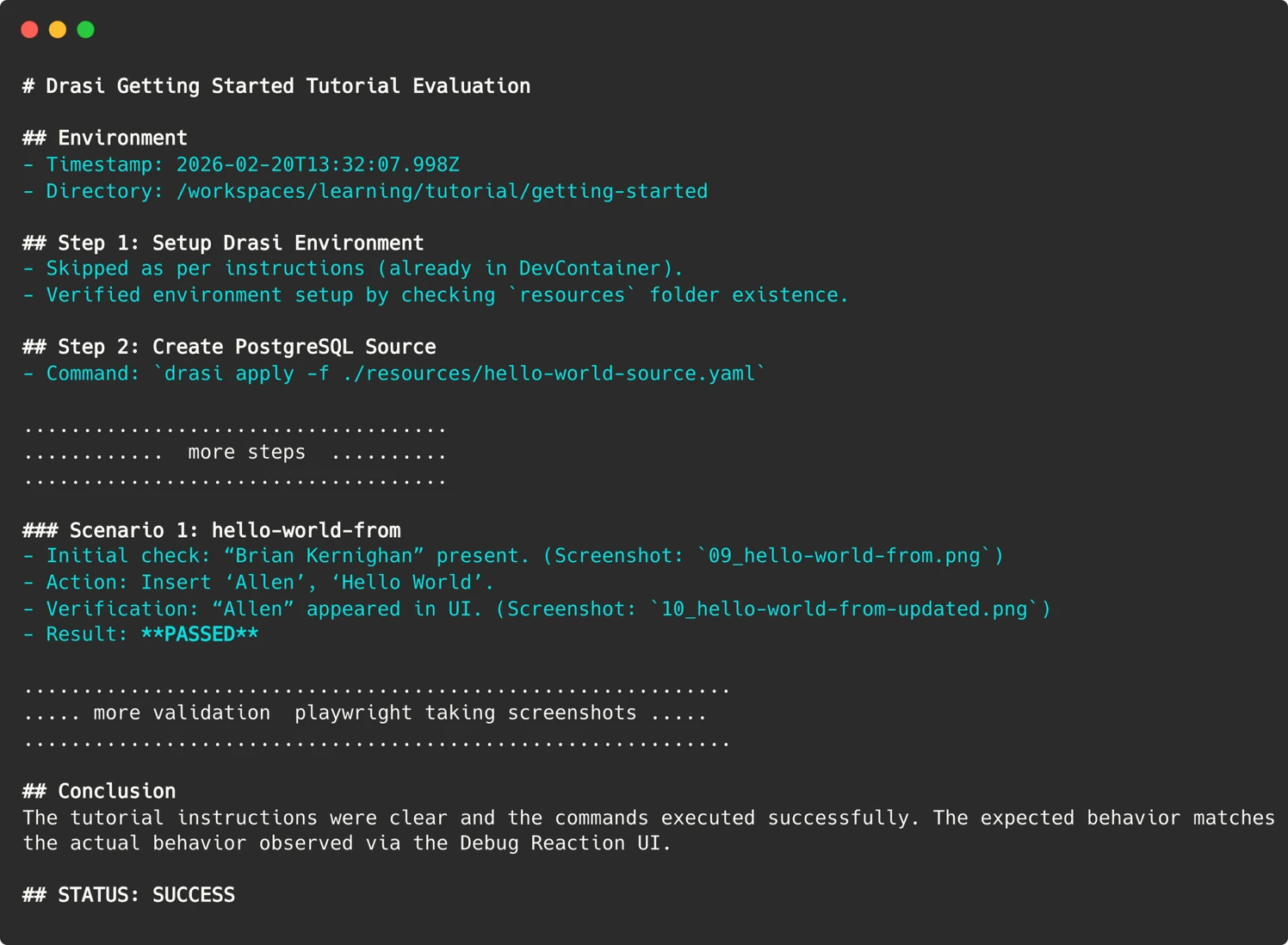

- Structured Reporting Directives: Specific directives are included to control the structure of the agent’s final report, ensuring clarity and actionability.

- Skip Directives: The agent is provided with instructions to bypass optional tutorial sections, such as the setup of external services, allowing for focused testing of core components.
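The core data field verification item in the list above can be sketched as a comparison that checks only the fields a tutorial promises and ignores everything else. This is an illustration under assumptions, not the team's implementation: the function name and the example field names (`id`, `status`, `timestamp`) are hypothetical.

```python
from typing import Any


def verify_core_fields(actual: dict[str, Any], expected: dict[str, Any]) -> list[str]:
    """Compare only the keys listed in `expected`.

    Extra keys in `actual` (timestamps, generated IDs, and other values
    that change between runs) are ignored on purpose.
    """
    problems = []
    for key, want in expected.items():
        if key not in actual:
            problems.append(f"missing field: {key}")
        elif actual[key] != want:
            problems.append(f"{key}: expected {want!r}, got {actual[key]!r}")
    return problems
```

An empty list means the stable fields matched. Volatile values never enter the comparison, so they cannot cause a spurious failure.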

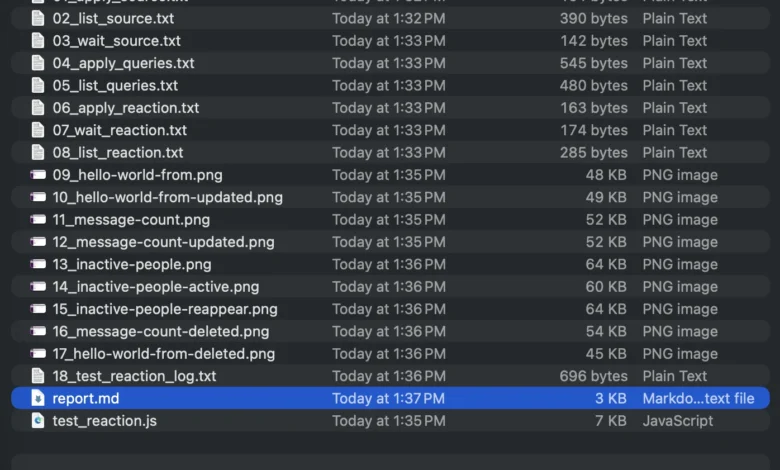
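Retry with model escalation, the first item in the list above, reduces to a loop over an ordered list of attempts. The sketch below assumes a caller-supplied `run_agent` function that returns `True` on success; that function, the model identifier strings, and how the three stages map onto the two named models are assumptions, since the source does not spell them out.

```python
from typing import Callable, Optional, Sequence, Tuple


def run_with_escalation(run_agent: Callable[[str], bool],
                        attempts: Sequence[str]) -> Optional[Tuple[int, str]]:
    """Try each model in order; return (attempt number, model) for the first success.

    Returns None if every attempt fails. An exception raised by run_agent
    is treated the same as a failed attempt (a simplification for this sketch).
    """
    for attempt_number, model in enumerate(attempts, start=1):
        try:
            if run_agent(model):
                return attempt_number, model
        except Exception:
            continue
    return None
```

The `attempts` sequence carries the escalation policy, for example `["gemini-pro", "claude-opus"]` with placeholder identifiers, so changing the order or adding a stage requires no change to the loop.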

Preserving Evidence for Debugging

When a test run fails, understanding the root cause is paramount. Since the AI agent operates within transient containers, direct SSH access for manual inspection is not feasible. To address this, the agent meticulously preserves evidence from every run. This includes screenshots of web UIs, terminal output of critical commands, and a detailed markdown report articulating the agent’s reasoning and observations. These artifacts are uploaded to the GitHub Action run summary, enabling the team to "time travel" to the moment of failure and visually inspect the agent’s experience.

Parsing Agent Reports for Actionable Insights

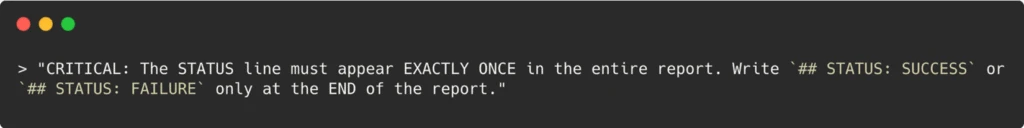

Translating the free-form, probabilistic output of an LLM into a definitive "Pass/Fail" signal that a CI/CD pipeline can act on presents another challenge, since an agent may end its run with a lengthy, descriptive conclusion. The team solved this in the prompt: the agent is explicitly instructed to end its report with a standardized, machine-readable string, either "STATUS: SUCCESS" or "STATUS: FAILURE". The GitHub Action then simply greps for this string to determine the workflow’s exit code, bridging the gap between the AI’s qualitative output and CI’s binary expectations.
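The source describes this step as a grep inside the GitHub Action. A Python equivalent is sketched below for illustration. Two choices here are assumptions rather than documented behavior: a report with no marker is treated as a failure, and when several markers appear, the last one wins (matching the instruction that the string goes at the end of the report).

```python
import re

# The two marker strings come from the article; the optional whitespace
# after the colon is an added tolerance.
STATUS_LINE = re.compile(r"STATUS:\s*(SUCCESS|FAILURE)")


def exit_code_from_report(report: str) -> int:
    """Map an agent report to a process exit code: 0 for success, 1 otherwise."""
    matches = STATUS_LINE.findall(report)
    if not matches:
        return 1  # assumption: a missing marker counts as a failed run
    return 0 if matches[-1] == "SUCCESS" else 1
```

Failing closed matters here: an agent that gets cut off or drifts from the requested format should turn the pipeline red, not green.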

Comprehensive Automation and Security

The implementation has resulted in an automated workflow that evaluates all Drasi tutorials weekly. Each tutorial is tested in its own sandboxed container, with the AI agent acting as a fresh, synthetic user. If any tutorial evaluation fails, the workflow is configured to automatically file an issue on the Drasi GitHub repository.
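The last step, turning failed evaluations into an issue, could look roughly like the sketch below. It only builds the `title` and `body` fields that GitHub's "create an issue" REST endpoint accepts and does not send anything. The function name, the wording of the issue, and the tutorial names used when calling it are hypothetical.

```python
from typing import Optional


def build_failure_issue(results: dict[str, bool], run_url: str) -> Optional[dict]:
    """Build an issue payload listing failed tutorials, or None if all passed.

    `results` maps a tutorial name to True (passed) or False (failed).
    """
    failed = sorted(name for name, passed in results.items() if not passed)
    if not failed:
        return None
    body_lines = ["The weekly tutorial evaluation reported failures:", ""]
    body_lines += [f"- {name}" for name in failed]
    body_lines += ["", f"Run details: {run_url}"]
    return {
        "title": f"Tutorial evaluation failed: {', '.join(failed)}",
        "body": "\n".join(body_lines),
    }
```

Returning `None` when everything passes keeps the caller simple: it files an issue only when a payload exists.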

While the workflow can optionally be triggered on pull requests, safeguards are in place to prevent abuse. A `pull_request_target` trigger ensures that the workflow executed for a pull request, even one from an external contributor, is the version on the main branch. The Copilot CLI requires a PAT, which is stored in the repository’s environment secrets. Every run requires maintainer approval, with the exception of the automated weekly run, which operates only on the main branch.

Uncovering Critical Bugs Through Synthetic Users

Since the deployment of this AI-driven testing system, the Drasi team has conducted over 200 "synthetic user" sessions. This rigorous testing has identified 18 distinct issues, including significant environmental problems and other documentation deficiencies. Crucially, fixing these identified issues has not only improved the experience for the AI agent but has also directly benefited human users by enhancing the clarity and accuracy of the documentation for everyone.

AI as a Force Multiplier: Expanding Capacity Without Expanding Headcount

The narrative surrounding AI often centers on job displacement. However, in the case of Drasi’s documentation testing, AI has served as a profound force multiplier, providing a capacity that the team would otherwise have been unable to achieve. Replicating the current testing regimen—running six tutorials across fresh environments weekly—would necessitate either a dedicated QA resource or a substantial budget for manual testing, both of which are infeasible for a four-person team.

By deploying these AI-powered "Synthetic Users," Drasi has effectively gained a tireless QA engineer who operates around the clock, including nights, weekends, and holidays. The project’s tutorials are now subject to weekly validation by these persistent digital testers. Developers are encouraged to experience the "Getting Started" guide firsthand to witness the results. For projects grappling with similar documentation drift, the GitHub Copilot CLI presents a compelling solution, not merely as a coding assistant, but as a versatile agent capable of executing defined goals within a controlled environment, freeing up valuable human resources for higher-level tasks.