The Architecture Problem Hiding Inside Digital Workflows

Large enterprises have long relied on deterministic software foundations to manage their operations. Business rules are meticulously embedded within workflows, state transitions are explicitly modeled, and escalation paths are predefined, ensuring system behavior is predictable and outcomes are reliable. For decades, this approach has been the bedrock of mission-critical operations, enabling organizations to encode meaningful scenarios as conditional branches and validate them rigorously before deployment. This model, however, begins to strain when processes are required to respond not just to predefined thresholds, but to the broader, dynamic context of a given situation.

The complexities inherent in modern digital workflows, particularly in customer-facing processes like onboarding in the banking sector, highlight this growing tension. Customer onboarding, a critical juncture for any financial institution, sits at the confluence of digital engagement channels, sophisticated fraud detection mechanisms, stringent regulatory obligations, and ambitious revenue targets. Financial institutions must navigate the labyrinthine requirements of Know Your Customer (KYC) and Anti-Money Laundering (AML) regulations while simultaneously striving to minimize customer abandonment and thwart sophisticated threats like synthetic identity fraud.

A recurring challenge observed during digital account opening initiatives at a major North American bank has been the trade-off between product teams aiming to streamline customer journeys and fraud prevention teams implementing additional safeguards. Product teams consistently push to reduce friction and enhance conversion rates, seeking to capture new customers efficiently. In parallel, fraud departments respond to an escalating volume of bot-driven account creation and the exploitation of mule schemes by introducing more stringent verification protocols. Compliance departments, meanwhile, mandate adherence to regulatory standards, while engineering teams are tasked with integrating each new requirement into the existing orchestration framework. Each of these decisions is individually rational, but together they produce an increasingly intricate and unwieldy workflow.

The core issue is not a scarcity of rules or regulations, but the difficulty in expressing nuanced contextual judgment within a rigid, static branching structure. Differentiation in these processes often occurs only at predefined checkpoints, and information is frequently collected in bulk rather than being dynamically adapted based on known facts. This presents a delicate balancing act: collecting too little information risks regulatory exposure or financial fraud, while collecting too much can lead to increased customer abandonment rates. Attempting to encode every conceivable variation as an additional branching path within the existing architecture renders the workflow increasingly fragile and unmanageable.

To address these limitations, adaptive scoring and contextual models are emerging as crucial complements to deterministic logic. Instead of attempting to enumerate every possible scenario in advance, these models assist in determining whether additional verification is warranted or if a process can proceed with existing evidence. Deterministic workflows continue to enforce fundamental regulatory requirements and manage final state transitions, while the adaptive layer provides intelligence on how to navigate toward those outcomes.
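The division of labor described above can be sketched in a few lines. This is a minimal illustration, assuming a hypothetical `risk_score` between 0 and 1 produced by some adaptive model, an illustrative threshold, and made-up field names; none of these come from a specific product.

```python
from dataclasses import dataclass

# Hypothetical fields and threshold, chosen only for illustration.
REQUIRED_KYC_FIELDS = ("legal_name", "date_of_birth", "address")
STEP_UP_THRESHOLD = 0.6


@dataclass
class Application:
    fields: dict
    risk_score: float  # assumed adaptive-model output, 0.0 (low) to 1.0 (high)


def next_step(app: Application) -> str:
    # Deterministic layer: regulatory minimums always apply.
    missing = [f for f in REQUIRED_KYC_FIELDS if not app.fields.get(f)]
    if missing:
        return "collect:" + ",".join(missing)
    # Adaptive layer: decides only how to proceed, not whether rules apply.
    if app.risk_score >= STEP_UP_THRESHOLD:
        return "additional_verification"
    return "proceed"
```

The adaptive score never bypasses the required-field check; it only chooses between proceeding and asking for more evidence once the deterministic minimum is met.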

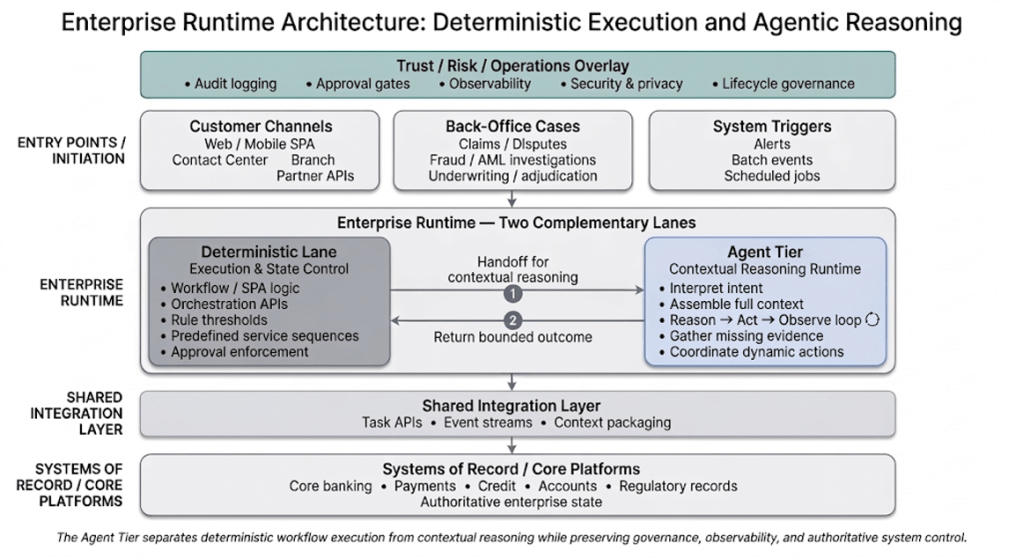

While customer onboarding serves as a clear illustration of this architectural challenge, similar patterns are observable across various enterprise functions, including credit adjudication, claims processing, and dispute management. As adaptive signals are increasingly integrated into these workflows, the architectural question shifts from merely adding more branches to deciding where contextual judgment should reside. The missing element is not another conditional path but a different runtime model, one capable of interpreting context and determining the most appropriate next action within defined operational limits. This conceptual architectural layer, termed the Agent Tier, decouples contextual reasoning from deterministic execution.

Introducing the Agent Tier: Separating Execution from Contextual Judgment

In many contemporary enterprises, the orchestration logic for complex processes is not housed within formal workflow platforms. Instead, it is often embedded within single-page applications (SPAs), implemented across various APIs, supported by disparate rule engines, and coordinated through intricate service calls across multiple systems. User journeys are frequently assembled through API calls in predefined sequences, with eligibility or routing conditions evaluated at specific, static checkpoints.

This approach functions effectively for repeatable, well-understood processes. When all necessary inputs are complete, risk signals are minimal, and no exception handling is required, the "clean path" can be executed deterministically. State transitions are known in advance, service calls follow predictable patterns, and human tasks are invoked at predetermined points.

However, significant difficulties arise when the workflow encounters ambiguity. Inputs may be incomplete or require interpretation beyond simple threshold comparisons. Multiple systems might need to be coordinated in a sequence that has not been explicitly modeled. Attempting to encode every such potential situation into SPA logic or orchestration APIs results in increasingly complex condition trees and more challenging-to-maintain codebases. Rather than endlessly expanding hard-coded branching, a more robust architectural solution involves separating the runtime into two complementary lanes: repeatable execution and contextual reasoning.

Conceptually, the enterprise runtime evolves into a two-lane structure. The deterministic lane retains ultimate control over authoritative state changes and rule enforcement. It manages eligibility checks, applies regulatory criteria, invokes known service sequences, and finalizes cases within core systems, continuing to handle the vast majority of predictable scenarios.

The runtime invokes the Agent Tier when contextual judgment is essential. This occurs when additional evidence must be gathered before a rule can be accurately evaluated, when multiple signals require integrated interpretation rather than independent analysis, or when coordination across systems cannot be expressed through a fixed, linear sequence. The Agent Tier evaluates available actions and returns a bounded recommendation, enabling the deterministic execution lane to resume its controlled progression.

The transition between these lanes is explicit. The deterministic workflow hands off to the Agent Tier when it reaches a point where static branching is insufficient to proceed. The Agent Tier then performs the necessary synthesis or dynamic coordination. Once the Agent Tier produces a structured result—such as a completed evidence bundle, a validated set of inputs, or a recommended next step—control returns to the deterministic lane for controlled progression and final state transition. This separation facilitates incremental adoption. Existing SPA logic and orchestration APIs can remain largely intact, with ambiguity points seamlessly redirected to the Agent Tier without destabilizing the core deterministic execution.
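The explicit handoff can be pictured roughly as follows. This is a sketch under stated assumptions: `agent_tier`, `AgentResult`, and the case fields are hypothetical names, and the "reasoning" is a trivial stand-in for whatever the Agent Tier actually does.

```python
from dataclasses import dataclass, field


@dataclass
class AgentResult:
    """Structured result the Agent Tier hands back (illustrative shape)."""
    recommended_step: str
    evidence: dict = field(default_factory=dict)


def agent_tier(case: dict) -> AgentResult:
    # Stand-in for contextual reasoning: it only notices that an existing
    # customer relationship can substitute for a document upload.
    if case.get("existing_customer"):
        return AgentResult("continue", {"identity_source": "existing_relationship"})
    return AgentResult("request_document")


def deterministic_workflow(case: dict) -> str:
    # Clean path: all inputs present, no handoff needed.
    if case.get("identity_verified"):
        return "account_opened"
    # Ambiguity point: static branching cannot decide, so hand off explicitly.
    result = agent_tier(case)
    # Control returns here; the deterministic lane owns the state transition.
    if result.recommended_step == "continue" and result.evidence:
        return "account_opened"
    return "pending_documents"
```

Note that the agent function returns data rather than changing state, which is what lets the existing workflow code stay largely intact.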

What Happens Inside the Agent Tier

The Agent Tier is not a singular, monolithic "AI decision." Instead, it represents a structured reasoning cycle that combines interpretation with controlled action.

When the deterministic workflow hands off a case, the Agent Tier begins by interpreting the current situation. It assembles all available context—including user inputs, existing customer relationships, real-time fraud signals, the current journey state, and relevant policy constraints. Based on this comprehensive view, it selects the next action from an approved set of enterprise capabilities. This action might involve retrieving additional information, invoking a specialized verification service, requesting clarification directly from the user, or orchestrating a sequence of actions across multiple systems. Once the selected action is completed, its result is evaluated, and the cycle continues until deterministic execution can be safely resumed.

This alternating pattern of reasoning and action is a well-established paradigm in agentic system design. In technical literature, it is often referred to as the ReAct (Reason and Act) pattern, which interleaves reasoning steps with structured action selection. Rather than attempting to reach a final resolution in a single pass, the system incrementally gathers evidence, reassesses its position, and proceeds with a refined understanding. In enterprise settings, this pattern provides a disciplined methodology for managing complex contextual interpretation.
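A toy version of the reason-and-act cycle is shown below. It is only a sketch: the `reason` function is a hand-written stand-in for a model call, and the two tools and the `@example.com` lookup rule are invented for illustration.

```python
from typing import Callable

# Hypothetical tools; each takes the context and returns an observation.
TOOLS: dict[str, Callable[[dict], dict]] = {
    "lookup_customer": lambda ctx: {"known_customer": ctx["email"].endswith("@example.com")},
    "check_document": lambda ctx: {"document_ok": True},
}


def reason(ctx: dict) -> str:
    """Stand-in for the reasoning step: pick the next action from context."""
    if "known_customer" not in ctx:
        return "lookup_customer"
    if not ctx["known_customer"] and "document_ok" not in ctx:
        return "check_document"
    return "finish"


def react_loop(ctx: dict, max_steps: int = 5) -> tuple[dict, list[str]]:
    trace = []
    for _ in range(max_steps):            # bounded iteration
        action = reason(ctx)              # reason
        if action == "finish":
            break
        observation = TOOLS[action](ctx)  # act
        ctx = {**ctx, **observation}      # observe and update the context
        trace.append(action)
    return ctx, trace
```

The `max_steps` bound is a design choice worth keeping even in a sketch: it guarantees the cycle ends and control can return to deterministic execution.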

Reasoning within the Agent Tier does not involve unfettered system access. It operates through approved operations exposed via governed interfaces. In practice, these operations, usually called tools, are typically enterprise primitives such as:

- Data Retrieval Services: Accessing internal databases or external data sources to gather relevant information.

- Verification APIs: Interacting with third-party services for identity verification, document validation, or risk scoring.

- Communication Tools: Initiating outreach to customers via email, SMS, or other channels for clarification or additional information.

- Orchestration Connectors: Triggering specific sub-processes or microservices within the broader enterprise architecture.

Each of these operations is defined by explicit input/output contracts and permission boundaries, and carries metadata describing its purpose and constraints. The runtime selects from this governed catalog, a mechanism commonly known as tool calling. Some frameworks further group related tools into higher-level capabilities, referred to as skills, which are reusable functions designed for specific objectives such as identity verification or the assembly of KYC evidence.
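One possible shape for such a governed catalog is sketched here. The `Tool` and `ToolCatalog` classes, the `kyc:verify` scope, and the `DOC-` prefix check are all assumptions made for the example, not the API of any particular framework.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Tool:
    name: str
    purpose: str                # metadata describing what the tool is for
    required_inputs: frozenset  # input contract (field names only, for brevity)
    required_scope: str         # permission boundary
    run: Callable[[dict], dict]


class ToolCatalog:
    def __init__(self) -> None:
        self._tools: dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def call(self, name: str, args: dict, scopes: set) -> dict:
        tool = self._tools.get(name)
        if tool is None:
            raise KeyError(f"tool not in catalog: {name}")
        if tool.required_scope not in scopes:
            raise PermissionError(f"missing scope: {tool.required_scope}")
        missing = tool.required_inputs - set(args)
        if missing:
            raise ValueError(f"missing inputs: {sorted(missing)}")
        return tool.run(args)


catalog = ToolCatalog()
catalog.register(Tool(
    name="verify_identity",
    purpose="Check an ID document against a (hypothetical) verification API",
    required_inputs=frozenset({"document_id"}),
    required_scope="kyc:verify",
    run=lambda args: {"verified": args["document_id"].startswith("DOC-")},
))
```

Because every invocation goes through `call`, an unknown tool, a missing permission, or an incomplete input is rejected before any enterprise system is touched.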

Before control is returned to the deterministic lane, the agentic runtime can also perform a structured self-check. This process verifies that required conditions are satisfied, confirms alignment with established policy constraints, and ensures that any necessary approvals have been identified. In technical discussions, this is often described as reflection.
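The three checks named above could be expressed as a simple validation function. This is a minimal sketch; the `policy` dictionary keys and the result fields are hypothetical and would differ in a real system.

```python
def reflect(result: dict, policy: dict) -> list[str]:
    """Return a list of problems; an empty list means ready to hand back."""
    problems = []
    # 1. Required conditions are satisfied.
    for key in policy["required_evidence"]:
        if key not in result.get("evidence", {}):
            problems.append(f"missing evidence: {key}")
    # 2. The recommendation stays within policy constraints.
    if result.get("recommended_step") not in policy["allowed_steps"]:
        problems.append("recommended step not allowed by policy")
    # 3. Necessary approvals have been identified.
    if (result.get("recommended_step") in policy["steps_needing_approval"]
            and not result.get("approver_role")):
        problems.append("approval required but no approver identified")
    return problems
```

Returning a list of problems rather than a boolean gives the runtime something concrete to act on, such as gathering the missing evidence before trying again.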

Collectively, these patterns do not introduce unchecked autonomy. Instead, they provide a structured method for managing contextual synthesis and dynamic coordination without allowing adaptive logic to diffuse haphazardly across SPA code and orchestration services. Deterministic systems continue to enforce authoritative state transitions, while the Agent Tier prepares the conditions under which those transitions occur.

In many implementations, the Agent Tier does not directly control the workflow. Rather, it recommends the next step based on the available context, and the deterministic tier remains responsible for execution. After each step is completed, whether it involves retrieving evidence, invoking a verification service, or preparing a case for manual review, the updated context is returned to the Agent Tier, which evaluates the new state and recommends the subsequent action.

Returning to the customer onboarding example, the Agent Tier fundamentally alters how the journey adapts to each individual applicant. The deterministic tier still executes core steps such as creating the customer profile, enforcing regulatory checks, and committing account state in core systems. Meanwhile, the Agent Tier continuously evaluates the evolving context—including customer relationships, fraud signals, identity verification results, and available documentation—and recommends whether the workflow can proceed along the "clean path," trigger additional verification, or escalate to a manual review. The outcome is not a reimagined onboarding process but a workflow that dynamically adapts its progression while rigorously preserving the deterministic controls essential for regulated operations.

Conceptually, the interaction between contextual reasoning and deterministic execution can be visualized as a simple runtime loop. The workflow progresses through a continuous cycle: contextual reasoning recommends the next step, deterministic systems execute that step, and the resulting context feeds back into the subsequent recommendation.
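The loop can be written down in miniature. In this sketch the state names, the `ALLOWED` transition table, and the `fraud_score` values are all illustrative assumptions; the point is only that the recommender never executes and the executor never accepts a step outside its table.

```python
# Transitions the deterministic tier is willing to execute (illustrative).
ALLOWED = {
    "start": {"verify_identity"},
    "verify_identity": {"open_account", "manual_review"},
}


def recommend(state: str, context: dict) -> str:
    """Stand-in for the Agent Tier: recommends, never executes."""
    if state == "start":
        return "verify_identity"
    return "open_account" if context.get("fraud_score", 1.0) < 0.5 else "manual_review"


def execute(state: str, step: str, context: dict) -> tuple[str, dict]:
    """Deterministic tier: rejects any step outside the allowed transitions."""
    if step not in ALLOWED.get(state, set()):
        raise ValueError(f"transition {state} -> {step} not allowed")
    if step == "verify_identity":
        # Hypothetical service result feeding back into the context.
        context = {**context, "fraud_score": 0.2 if context.get("has_id") else 0.9}
    return step, context


def run(context: dict) -> list[str]:
    state, path = "start", []
    while state in ALLOWED:                             # terminal states have no exits
        step = recommend(state, context)                # recommend
        state, context = execute(state, step, context)  # execute, feed back
        path.append(state)
    return path
```

For example, `run({"has_id": True})` walks through identity verification to account opening, while `run({})` ends in manual review.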

Governing Adaptive Systems Without Losing Control

The separation of contextual reasoning from deterministic execution clarifies responsibilities but does not eliminate inherent risks. In regulated environments, adaptive sequencing must operate within clearly defined governance boundaries.

An essential component of this architecture is the "trust and operations overlay," which represents cross-cutting controls applied across the entire runtime. This overlay encompasses critical functions such as audit logging, approval gates, observability, security enforcement, and lifecycle management. Within this structure, authoritative state transitions remain strictly deterministic. Core systems continue to create client profiles, enforce operational limits, record disclosures, and apply regulatory thresholds. While the Agent Tier may influence the progression of a workflow, final state changes occur only through controlled, auditable interfaces.

This containment boundary is crucial for preserving explainability. When progression changes—for instance, when additional verification is triggered or an escalation occurs—institutions must be able to reconstruct precisely why. Which signals were assembled? Which tools were invoked? What specific reasoning produced the recommendation? Concentrating contextual evaluation within a defined runtime layer makes this level of traceability possible, a critical requirement for regulatory compliance and internal audits.
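A decision record that answers those three questions might look like the following. The field names are assumptions chosen to mirror the questions above, not a prescribed audit schema.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    case_id: str
    signals: dict        # which signals were assembled
    tools_invoked: list  # which tools were invoked, in order
    rationale: str       # the reasoning behind the recommendation
    recommendation: str
    recorded_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        # sort_keys keeps the serialized form stable for later comparison
        return json.dumps(asdict(self), sort_keys=True)
```

Writing one such record per recommendation, from a single runtime layer, is what makes it practical to reconstruct a progression change after the fact.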

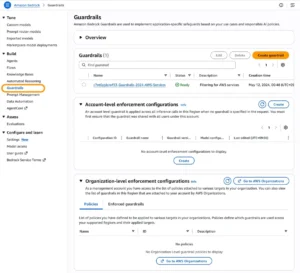

Operational experience reinforces the necessity of these guardrails. Discussions surrounding the development of production agent systems frequently emphasize constrained tool access, explicit action catalogs, bounded iteration, and robust observability. In enterprise environments, contextual reasoning must operate through governed tools and visible control points to maintain integrity and security.

Approval gates remain an integral part of this structure. High-risk actions, such as the issuance of credit, the imposition of account restrictions, the processing of large payments, or the filing of regulatory documents, may still require human authorization, irrespective of how the progression was determined. Reflection within the Agent Tier can validate readiness, but the ultimate authorization remains an explicit, human-driven process.
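In code, an approval gate can be as small as a check that ignores how the workflow arrived at the action. The action names below are illustrative labels for the examples in the paragraph above, and `approved_by` is a hypothetical identifier for the authorizing person.

```python
from typing import Optional

# Illustrative labels for the high-risk actions named above.
HIGH_RISK_ACTIONS = {
    "issue_credit",
    "restrict_account",
    "large_payment",
    "regulatory_filing",
}


def authorize(action: str, approved_by: Optional[str] = None) -> bool:
    """Return True only if the action may execute now."""
    if action not in HIGH_RISK_ACTIONS:
        return True
    # How the progression was determined is irrelevant here:
    # a named human approver must be present.
    return bool(approved_by)
```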

Lifecycle discipline is equally important. Changes to underlying models, identity providers, tool contracts, or orchestration logic can significantly alter workflow behavior. Consequently, the Agent Tier should operate as a governed platform capability, featuring versioned reasoning logic, managed tool catalogs, and defined processes for testing and rollback.

The overarching objective is not to eliminate probabilistic reasoning but to contain it within observable workflows and strictly governed boundaries. As adaptive capabilities continue to expand, the fundamental architectural question is not whether contextual reasoning will exist, but whether it will be diffused haphazardly across the entire technology stack or concentrated within a controlled, manageable runtime layer.

Architectural Leadership in an Adaptive Era

Introducing an Agent Tier represents the addition of a new runtime component, but enterprise complexity itself is not new; it is already dispersed across channel code, orchestration services, rule engines, and a proliferation of conditional branches. The architectural challenge is not whether complexity exists, but where it resides. As fraud models evolve, verification technologies advance, and regulatory expectations shift, adaptive capabilities will inevitably continue to expand their influence.

Enterprise architecture must therefore shift its focus from enumerating state transitions to defining clear containment boundaries. Deterministic systems will continue to enforce regulatory and operational requirements and remain responsible for authoritative state changes. Adaptive reasoning, in turn, will operate within explicit policy constraints, informing how workflows progress toward those mandated outcomes. Instead of attempting to pre-encode every conceivable path, enterprises can move toward context-driven workflows, where deterministic execution handles authoritative actions and the Agent Tier recommends the most appropriate next step based on evolving context.

This evolution does not necessitate a wholesale reinvention of existing systems. It can commence with a single, high-impact workflow where contextual variability is already a significant challenge. By introducing a disciplined runtime layer that effectively mediates uncertainty while rigorously preserving deterministic control, organizations can modernize incrementally. In this sense, the Agent Tier is not merely a new feature; it is a structural response to a changing runtime reality, one that enables adaptive systems to operate effectively within clear architectural and governance boundaries.

This article is published as part of the Foundry Expert Contributor Network.