Building Dev Signal: Transitioning from Prototype to Production-Ready AI on Google Cloud

The journey from a proof-of-concept AI system to a robust, production-ready application involves meticulous engineering, secure infrastructure, and seamless deployment. In the latest installment of a comprehensive series, Google Cloud’s Head of AI, Product DevRel, Shir Meir Lador, outlines the critical steps involved in transforming the Dev Signal multi-agent system from a local prototype into a scalable cloud service. This detailed guide focuses on establishing the production backbone, emphasizing infrastructure as code, secure deployment pipelines, and robust monitoring for developers aiming to accelerate their AI application development on Google Cloud.

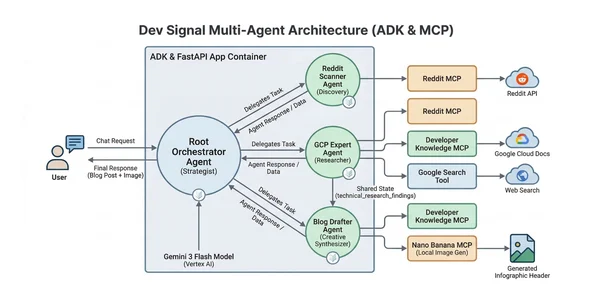

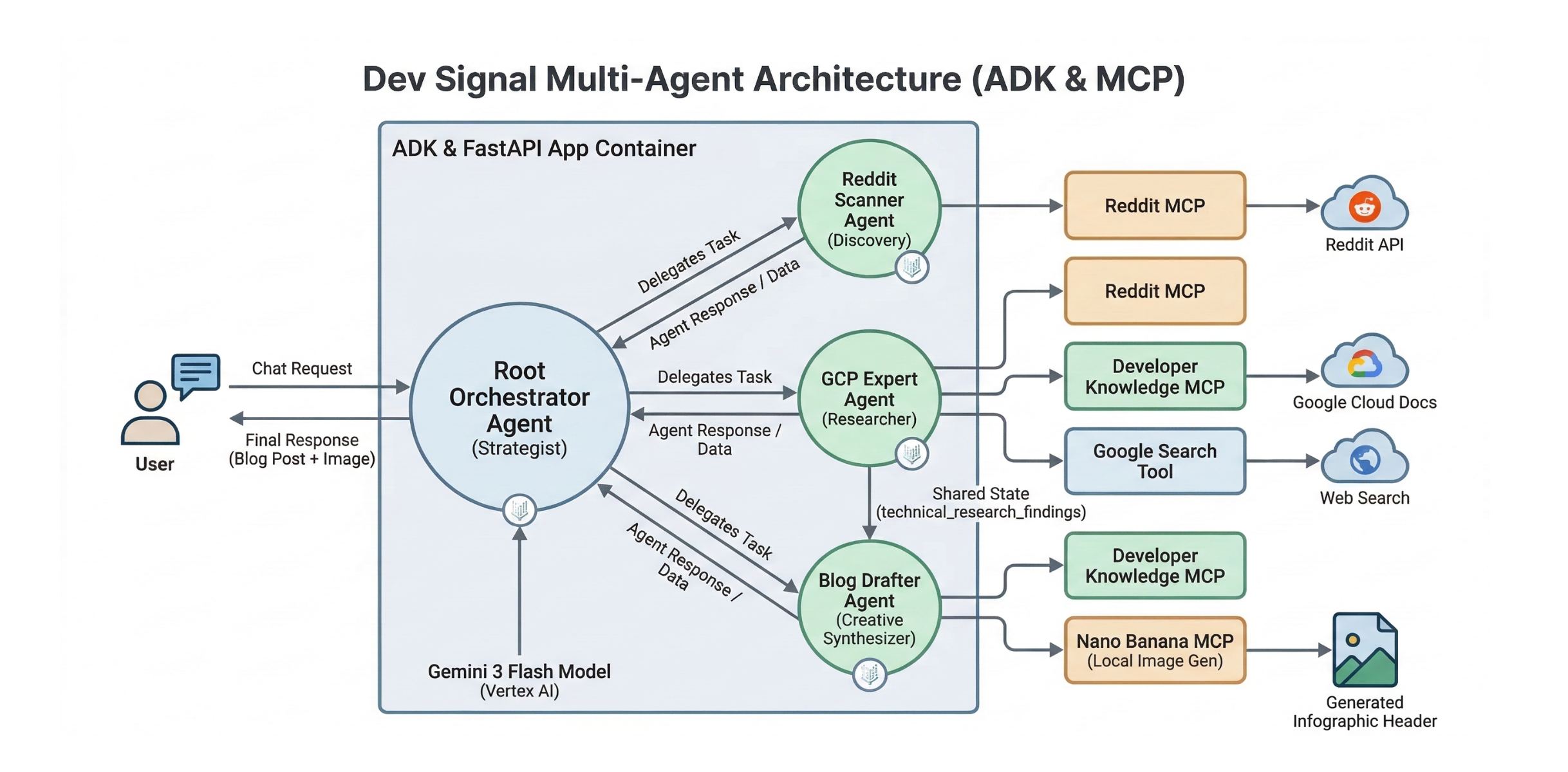

Dev Signal, designed to convert raw community signals into reliable technical guidance, has undergone a significant architectural evolution. The preceding parts of this series laid the groundwork, establishing its core capabilities and local verification processes. Part 1 introduced the Model Context Protocol (MCP) for standardizing agent capabilities and integrating with Reddit for trend discovery and Google Cloud Docs for technical grounding. Part 2 detailed the multi-agent architecture and the integration of Vertex AI’s memory bank for persistent user preference management. Part 3 solidified the end-to-end lifecycle through local testing, ensuring synchronized research, content creation, and cloud-based memory retrieval. This final piece addresses the crucial transition to a production environment, leveraging Google Cloud’s advanced platform services.

The open-source repository for Dev Signal is available on GitHub, allowing developers to explore the code and replicate the development process. This commitment to transparency and accessibility underscores Google Cloud’s strategy to empower developers with cutting-edge AI tools and frameworks.

Establishing the Production Backbone with the Agent Starter Pack

Transitioning Dev Signal from a local prototype to a production service necessitates building a robust operational backbone. This is achieved through the foundational deployment patterns provided by the Agent Starter Pack, a key resource for developers looking to operationalize their AI agents. The focus shifts to implementing essential structural components for monitoring, ensuring data integrity, and enabling long-term state management within the cloud environment. This includes the development of the application server and supporting utilities required for a production-ready deployment, culminating in the provisioning of secure, reproducible infrastructure through Terraform.

While a Dockerfile encapsulates the agent’s code and its specialized dependencies, such as Node.js for the Reddit MCP tool, Terraform is employed to automate the construction of the underlying platform. This automation extends to the creation of Artifact Registry for container image storage, least-privilege service accounts for enhanced security, and Secret Manager integrations to safeguard API keys. By the conclusion of this process, developers will possess a standardized application framework deployed on Google Cloud Run, complete with a clear roadmap for continuous evaluation, CI/CD integration, and advanced observability.

Production Utilities and the Application Server: The System’s Core

The operationalization of Dev Signal hinges on implementing structural components that support monitoring and long-term state management in the cloud. At the heart of this is the fast_api_app.py file, which serves as the vital entry point for the agent. It transforms the core logic into a production-ready FastAPI server, effectively forming the "body" of the system. This server is indispensable for Cloud Run deployments, providing the necessary web interface to receive and process incoming HTTP requests.

Beyond its fundamental serving role, the application server is critical for establishing a connection to the Vertex AI memory bank. By defining a MEMORY_URI, it enables the Agent Development Kit (ADK) framework to persist and retrieve user preferences across different production sessions. Furthermore, the application server initializes production-grade telemetry, paving the way for real-time monitoring of the agent’s performance and behavior.
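The server setup described above might be sketched as follows. This is a minimal sketch, not the project's actual fast_api_app.py: the helper names, the AGENT_ENGINE_ID environment variable, and the agentengine:// URI scheme are assumptions made for illustration, and create_app only works where the google-adk package is installed.

```python
import os


def build_memory_uri(agent_engine_id: str) -> str:
    """Return a memory-service URI for a Vertex AI Agent Engine instance.

    Assumption: the ADK accepts the agentengine://<id> scheme for its
    memory service; check the ADK documentation for your version.
    """
    if not agent_engine_id:
        raise ValueError("agent_engine_id must be non-empty")
    return f"agentengine://{agent_engine_id}"


def server_kwargs(agents_dir: str, agent_engine_id: str) -> dict:
    """Collect the keyword arguments handed to the ADK FastAPI factory."""
    return {
        "agents_dir": agents_dir,
        "memory_service_uri": build_memory_uri(agent_engine_id),
        "otel_to_cloud": True,  # telemetry flag named in the article
        "web": True,
    }


def create_app():
    """Build the FastAPI app. Requires google-adk; imported lazily."""
    from google.adk.cli.fast_api import get_fast_api_app

    return get_fast_api_app(
        **server_kwargs(
            agents_dir=os.path.dirname(os.path.abspath(__file__)),
            # Hypothetical variable name, chosen for this sketch only.
            agent_engine_id=os.environ["AGENT_ENGINE_ID"],
        )
    )
```

Keeping the URI construction in a small pure function makes it easy to unit-test the configuration without starting the server or contacting Vertex AI.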

Implementing Production-Grade Telemetry

In a production environment, visibility into an AI agent’s decision-making processes is paramount. Dev Signal leverages the built-in observability features of the Google ADK by setting the otel_to_cloud=True flag within the application server. This single parameter automates a significant portion of the instrumentation, exporting "Agent Traces" directly to the Google Cloud Console. These traces offer a detailed, visual "waterfall" of the agent’s operations, illuminating individual thought processes, Large Language Model (LLM) invocations, and Model Context Protocol (MCP) tool calls.

Production tracing is sampled to balance performance and cost. Because Cloud Run captures only a subset of requests, not every user interaction will appear in the traces. For detailed analysis, developers can open the Trace Explorer in the Google Cloud Console and filter by their service name, such as dev-signal. Clicking a specific Trace ID reveals a Gantt chart that makes it easier to distinguish reasoning failures in the agent from system issues such as timeouts. Advanced configuration options for telemetry are detailed in the relevant Google Cloud documentation.
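To make the effect of sampling concrete, the following sketch shows one common way a sampler can decide which traces to keep. It is purely illustrative: the function name and the hash-based approach are assumptions, and this is not a description of how Cloud Run or Cloud Trace actually selects requests.

```python
import hashlib


def is_sampled(trace_id: str, rate: float) -> bool:
    """Deterministically decide whether a trace is kept at the given rate.

    The trace ID is hashed into a 64-bit bucket; the trace is kept when
    the bucket falls below rate * 2**64. The same ID always yields the
    same decision.
    """
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0.0 and 1.0")
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big")
    return bucket < rate * 2**64


def visible_fraction(trace_ids, rate: float) -> float:
    """Return the share of the given traces that would be kept."""
    ids = list(trace_ids)
    if not ids:
        return 0.0
    return sum(is_sampled(t, rate) for t in ids) / len(ids)
```

With a low rate, most individual requests never produce a trace, which is why a specific user interaction may be missing from the Trace Explorer even though the service handled it correctly.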

Infrastructure as Code: Provisioning Secure Cloud Resources with Terraform

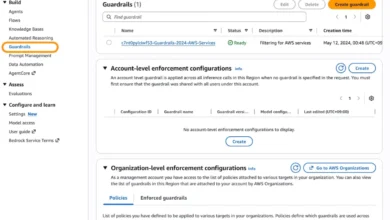

To ensure a secure and reproducible cloud environment, Dev Signal adopts the infrastructure-as-code (IaC) patterns provided by the Agent Starter Pack. This security-first design automates the creation of least-privilege service accounts and robust secret management capabilities. Terraform is instrumental in defining the entire Google Cloud environment, from IAM roles to Secret Manager versions, as reproducible and secure code. The infrastructure is logically segmented into distinct blocks for manageability and clarity.

The process begins with the variables.tf file, which defines the configurable parameters for the deployment. This includes variables for the project_id, the deployment region (defaulting to us-central1), and the service_name for the Cloud Run instance. A secrets map is also defined, facilitating the secure ingestion of sensitive API credentials, such as those for Reddit and Developer Knowledge, into Google Secret Manager for runtime access. This modular approach ensures the production environment remains reproducible, secure, and adaptable across various projects.

Core Infrastructure Logic

The infrastructure is structured into several key logical blocks:

- Enable APIs: This block ensures that the necessary Google Cloud services, including Cloud Run and Vertex AI, are active for the project. The disable_on_destroy = false setting keeps these APIs enabled if the Terraform configuration is destroyed, which prevents accidental data loss.

- Artifact Registry: A private Docker registry is created to securely store the agent’s container images. This ensures that deployment artifacts are managed within a controlled environment.

- Service Account & IAM: This block applies the principle of least privilege, a critical security measure. Instead of relying on the default compute service account, a dedicated, user-managed service account (dev-signal-sa) is provisioned. This account is designated as the Cloud Run service identity, and it is granted only the minimum necessary permissions: roles/aiplatform.user, roles/logging.logWriter, and roles/storage.objectAdmin. This granular access control gives the agent the permissions it needs to interact with Vertex AI and Cloud Storage while reducing the potential impact of a compromised account. Best practices for secure service account usage are a cornerstone of this approach.

- Secret Management: This component handles API keys securely by creating secrets in Google Secret Manager and granting the agent’s service account permission to access them at runtime.

- Cloud Run Configuration: A key security best practice for production environments is implemented here. The main.tf file grants the service account the secretmanager.secretAccessor role. The Python application then uses the Secret Manager SDK to pull these credentials directly into local memory at runtime, ensuring they never appear in the container’s environment configuration.
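The runtime secret retrieval described in the last item could look roughly like the sketch below. It is an assumption-laden illustration rather than the project's code: the helper names are hypothetical, and the client is passed in so the logic can be exercised without Google Cloud credentials. default_client requires the google-cloud-secret-manager package.

```python
def secret_version_name(project_id: str, secret_id: str, version: str = "latest") -> str:
    """Build the Secret Manager resource name for one secret version."""
    return f"projects/{project_id}/secrets/{secret_id}/versions/{version}"


def load_secret(client, project_id: str, secret_id: str, version: str = "latest") -> str:
    """Fetch a secret value into memory and return it as text.

    `client` is expected to behave like SecretManagerServiceClient:
    access_secret_version(request={"name": ...}) returns an object whose
    payload.data attribute holds the secret bytes.
    """
    name = secret_version_name(project_id, secret_id, version)
    response = client.access_secret_version(request={"name": name})
    return response.payload.data.decode("utf-8")


def default_client():
    """Create the real client; imported lazily so tests need no SDK."""
    from google.cloud import secretmanager

    return secretmanager.SecretManagerServiceClient()
```

Because the value is returned to the caller and never written to an environment variable, it does not show up in the Cloud Run service's environment configuration. The call succeeds only if the service identity holds the secretmanager.secretAccessor role on that secret.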

Provisioning the Infrastructure

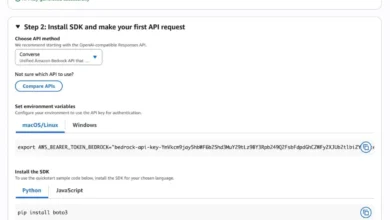

Before code deployment, the defined Google Cloud infrastructure must be provisioned. This process begins with initializing Terraform in the deployment/terraform folder to download the necessary provider plugins. Subsequently, a terraform.tfvars file is created and populated with project-specific details and secrets.
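One way to prepare the variable values is to generate them from a script. The sketch below renders the variables named earlier (project_id, region, service_name, and the secrets map) as JSON, on the assumption that the file is saved as terraform.tfvars.json, a format Terraform loads automatically alongside terraform.tfvars. The function name, the dev-signal default for service_name, and the example secret key are hypothetical.

```python
import json
from typing import Mapping


def render_tfvars(
    project_id: str,
    secrets: Mapping[str, str],
    region: str = "us-central1",
    service_name: str = "dev-signal",
) -> str:
    """Return the contents of a terraform.tfvars.json file as a string."""
    if not project_id:
        raise ValueError("project_id is required")
    # Refuse to write placeholder secrets that would fail later at runtime.
    empty = [name for name, value in secrets.items() if not value]
    if empty:
        raise ValueError(f"secrets with empty values: {empty}")
    payload = {
        "project_id": project_id,
        "region": region,
        "service_name": service_name,
        "secrets": dict(secrets),
    }
    return json.dumps(payload, indent=2, sort_keys=True)


if __name__ == "__main__":
    # Hypothetical values; replace with real project details.
    print(render_tfvars("my-project", {"EXAMPLE_API_KEY": "replace-me"}))
```

Since the rendered file contains secret values in plain text, it should be kept out of version control.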

The terraform plan command is then run so that the proposed changes can be reviewed before they are applied. Once the plan is confirmed, the terraform apply command finalizes the provisioning, establishing the secure and reproducible cloud environment.
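The init, plan, and apply sequence can be scripted. The following is a minimal sketch that assumes the Terraform CLI is on the PATH and uses the deployment/terraform folder mentioned above; the function names and the tfplan file name are choices made for this example.

```python
import subprocess
from typing import List


def terraform_commands(
    workdir: str = "deployment/terraform", plan_file: str = "tfplan"
) -> List[List[str]]:
    """Return the init, plan, and apply invocations in order."""
    base = ["terraform", f"-chdir={workdir}"]
    return [
        base + ["init"],                      # download provider plugins
        base + ["plan", f"-out={plan_file}"],  # save the plan for review
        base + ["apply", plan_file],          # apply exactly the saved plan
    ]


def run_all(commands: List[List[str]]) -> None:
    """Run each command, stopping at the first failure."""
    for command in commands:
        subprocess.run(command, check=True)
```

Saving the plan to a file and applying that file means the changes that were reviewed are the changes that get applied. In practice the plan would be inspected by a person between the second and third commands rather than run unattended.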

Deployment: Containerization and the Cloud Build Pipeline

The final stage of the build process involves packaging the agent’s "body" and "brain" into a portable, production-ready container. This ensures that all components, from the Python logic to the Node.js environment for the Reddit MCP tool, are bundled together with their exact dependencies.

A Dockerfile defines this environment, and a Makefile orchestrates the deployment pipeline. When a deployment is triggered, Google Cloud Build takes the local source code, builds the container image according to the Dockerfile, and stores it in the Artifact Registry previously created by Terraform. Finally, the pipeline automatically updates the Cloud Run service to serve traffic using this new image, completing the transition from local code to a live, secure cloud workload.

The Dockerfile specifies the build context, including the necessary base image, installation of system dependencies, copying of application code, and definition of the entry point. The Makefile automates the build and deployment process. It typically includes targets for building the Docker image, pushing it to Artifact Registry, and deploying it to Cloud Run.
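The build-and-deploy steps that the Makefile automates can be approximated with two gcloud invocations. The sketch below only assembles the commands; it is not the project's Makefile, and the function names, repository name, and tag are placeholders chosen for illustration.

```python
from typing import List


def image_uri(project_id: str, region: str, repository: str, image: str, tag: str = "latest") -> str:
    """Build an Artifact Registry Docker image URI."""
    return f"{region}-docker.pkg.dev/{project_id}/{repository}/{image}:{tag}"


def deploy_commands(
    project_id: str,
    region: str,
    repository: str,
    service: str,
    tag: str = "latest",
) -> List[List[str]]:
    """Return the Cloud Build and Cloud Run commands, in order."""
    uri = image_uri(project_id, region, repository, service, tag)
    return [
        # Build the image from the local Dockerfile and push it to the registry.
        ["gcloud", "builds", "submit", "--tag", uri, "--project", project_id],
        # Point the Cloud Run service at the new image, keeping it private.
        [
            "gcloud", "run", "deploy", service,
            "--image", uri,
            "--region", region,
            "--project", project_id,
            "--no-allow-unauthenticated",
        ],
    ]
```

Each list can be passed to subprocess.run(command, check=True) to execute it, assuming the gcloud CLI is installed and authenticated.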

Deploying the Application

With the infrastructure in place, the application code can be built and deployed. A single command, executed from the root of the project, initiates this process. This command typically triggers the Makefile targets, which handle the containerization, image pushing, and Cloud Run deployment.

Upon successful completion, a confirmation message is displayed, indicating that the dev-signal service has been deployed and is serving traffic. A unique Service URL for the deployed application is provided, allowing access to the live agent.

Verification: Accessing and Testing the Deployed Agent

Since production services are private by default, a crucial step involves granting permissions and enabling secure access to the deployed agent. This is managed through IAM permissions, specifically by granting the run.invoker role to authorized users. Secure access is then facilitated via a Cloud Run proxy.

Granting User Permissions

Before invoking the service, the user’s Google account must be granted the roles/run.invoker role for the specific Cloud Run service. This is achieved through a gcloud command that specifies the service, region, and the user’s identity.
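The permission grant might be assembled as in the sketch below. The helper name and the example values are hypothetical; the command itself uses the gcloud run services add-iam-policy-binding subcommand.

```python
from typing import List


def grant_invoker_command(service: str, region: str, user_email: str) -> List[str]:
    """Return the gcloud command that grants roles/run.invoker to one user."""
    if "@" not in user_email:
        raise ValueError("user_email must be an email address")
    return [
        "gcloud", "run", "services", "add-iam-policy-binding", service,
        f"--region={region}",
        f"--member=user:{user_email}",
        "--role=roles/run.invoker",
    ]
```

Binding the role on the individual service, rather than on the whole project, keeps the grant as narrow as the rest of the least-privilege setup.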

Launching the Proxy

Once permissions are configured, the agent can be accessed securely through the gcloud proxy. Running a specific gcloud command launches a local proxy that forwards requests to the private Cloud Run service. By visiting http://localhost:8080 in a web browser, users can interact with the deployed Dev Signal agent. A test scenario, similar to those outlined in Part 3 of the series, can be used to verify its functionality.
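As a rough sketch, the proxy launch could be wrapped like this. The helper names are hypothetical, and the example assumes the gcloud run services proxy subcommand is available in the installed gcloud CLI.

```python
from typing import List


def proxy_command(service: str, region: str, port: int = 8080) -> List[str]:
    """Return the gcloud command that opens a local proxy to the service."""
    if not 1 <= port <= 65535:
        raise ValueError("port must be between 1 and 65535")
    return [
        "gcloud", "run", "services", "proxy", service,
        f"--region={region}",
        f"--port={port}",
    ]


def local_url(port: int = 8080) -> str:
    """Return the local address the proxy listens on."""
    return f"http://localhost:{port}"
```

The proxy runs in the foreground and authenticates requests with the caller's gcloud credentials, so it should be started in its own terminal while the browser session is open.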

Summary: A Foundation for Sophisticated AI Applications

The successful deployment of Dev Signal marks a significant achievement in building sophisticated, stateful AI applications on Google Cloud. This comprehensive series has covered the entire lifecycle, from conceptualization and local development to production-ready deployment and secure access.

Key milestones covered include:

- Architectural Design: Establishing a multi-agent system with standardized capabilities through MCP.

- State Management: Implementing long-term memory with Vertex AI for persistent user preferences.

- Local Verification: Ensuring end-to-end synchronization through rigorous local testing.

- Production Infrastructure: Provisioning secure, reproducible cloud resources using Terraform and adhering to the principle of least privilege.

- Deployment Pipeline: Containerizing the agent and automating deployment with Docker, Makefiles, and Google Cloud Build.

- Secure Access: Implementing robust security measures for accessing production services.

With Dev Signal now a live, production-ready application, developers have a solid foundation for building their own advanced AI solutions on Google Cloud. This journey underscores the platform’s capabilities in supporting complex AI workloads, from initial development and testing to scalable and secure production deployments. The continuous evolution of tools and frameworks within the Google Cloud ecosystem empowers developers to innovate and deliver impactful AI-driven experiences.