AWS Introduces Claude Opus 4.7 in Amazon Bedrock, Elevating AI Capabilities for Enterprise Workloads

Amazon Web Services (AWS) has announced that Claude Opus 4.7, Anthropic’s latest and most advanced large language model, is now available in Amazon Bedrock, its managed foundation-model service. The release targets demanding enterprise applications, particularly complex coding, long-running agent tasks, and sophisticated professional workflows, and is intended to give businesses more powerful and reliable generative AI tools through the cloud.

Claude Opus 4.7 runs on Amazon Bedrock’s next-generation inference engine, infrastructure designed for production AI workloads. The engine uses new scheduling and scaling logic that dynamically allocates compute capacity to incoming requests. This is expected to improve availability, especially for steady-state workloads, while still accommodating services that need to scale quickly. The integration also comes with a zero operator access commitment: customer prompts and the model’s responses are not accessible to Anthropic or AWS operators. For organizations handling sensitive data, this assurance is central to trusting AI-assisted operations.

Anthropic, the developer of the Claude models, reports that Claude Opus 4.7 demonstrates marked improvements over its predecessor, Opus 4.6. These enhancements are particularly evident in workflows that are routinely executed by enterprise teams. Key areas of improvement include agentic coding, where the model can assist in generating and refining code with greater accuracy and efficiency; knowledge work, enabling more insightful analysis and summarization of complex information; visual understanding, allowing for more sophisticated interpretation of visual data; and the execution of long-running tasks, which often require sustained focus and complex problem-solving. Opus 4.7 is designed to navigate ambiguity more effectively, approach problem-solving with greater thoroughness, and adhere to instructions with enhanced precision, making it a more capable assistant for a wider array of tasks.

Advancements in AI for Enterprise: A Deeper Dive

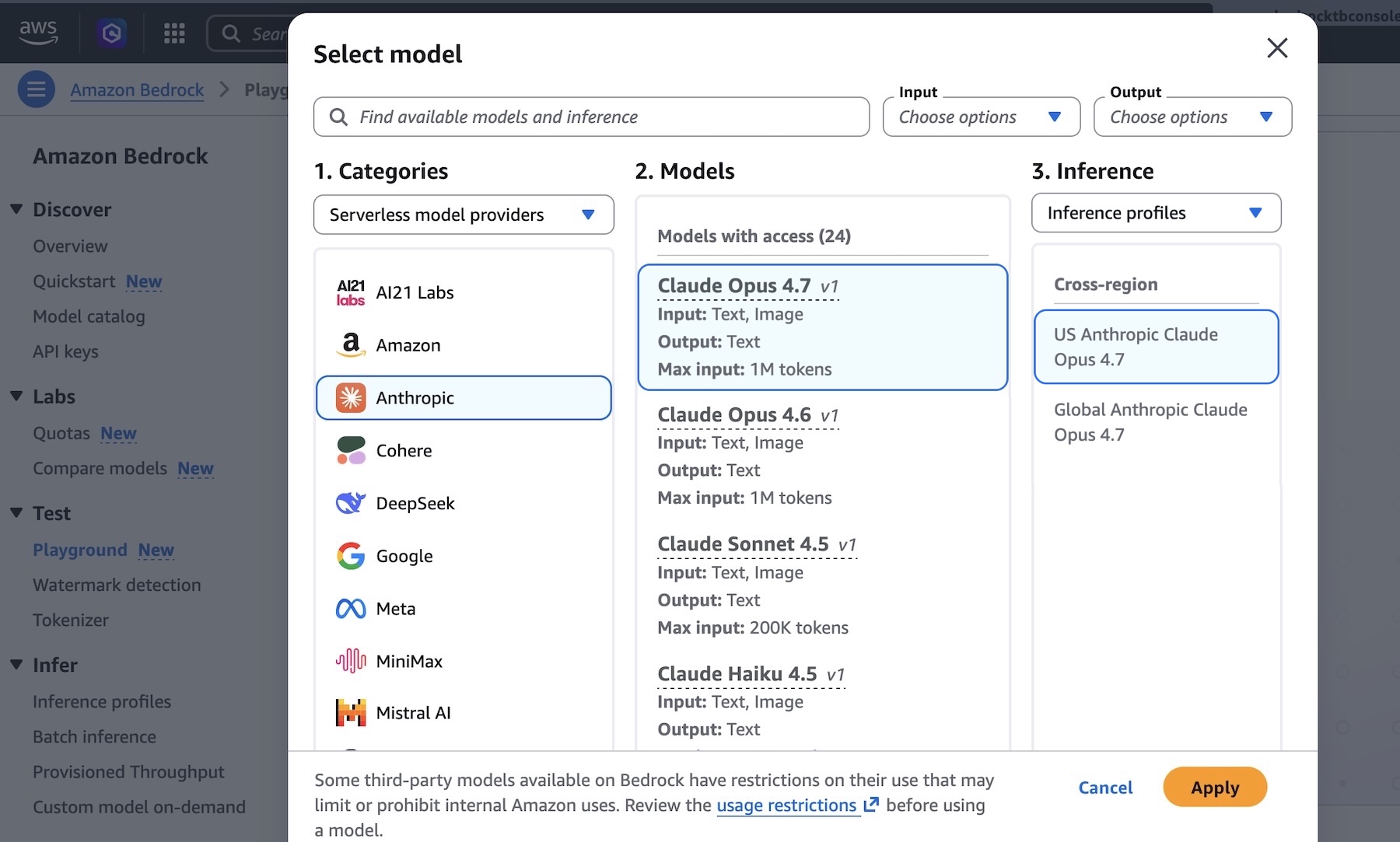

The arrival of Claude Opus 4.7 extends the set of advanced models available through Amazon Bedrock. Bedrock is a fully managed service that offers a choice of high-performing foundation models from leading AI companies, including Anthropic, AI21 Labs, Cohere, Meta, Stability AI, and Amazon itself, through a single API. This unified interface lets developers and businesses experiment with, customize, and deploy different models without managing the underlying infrastructure.

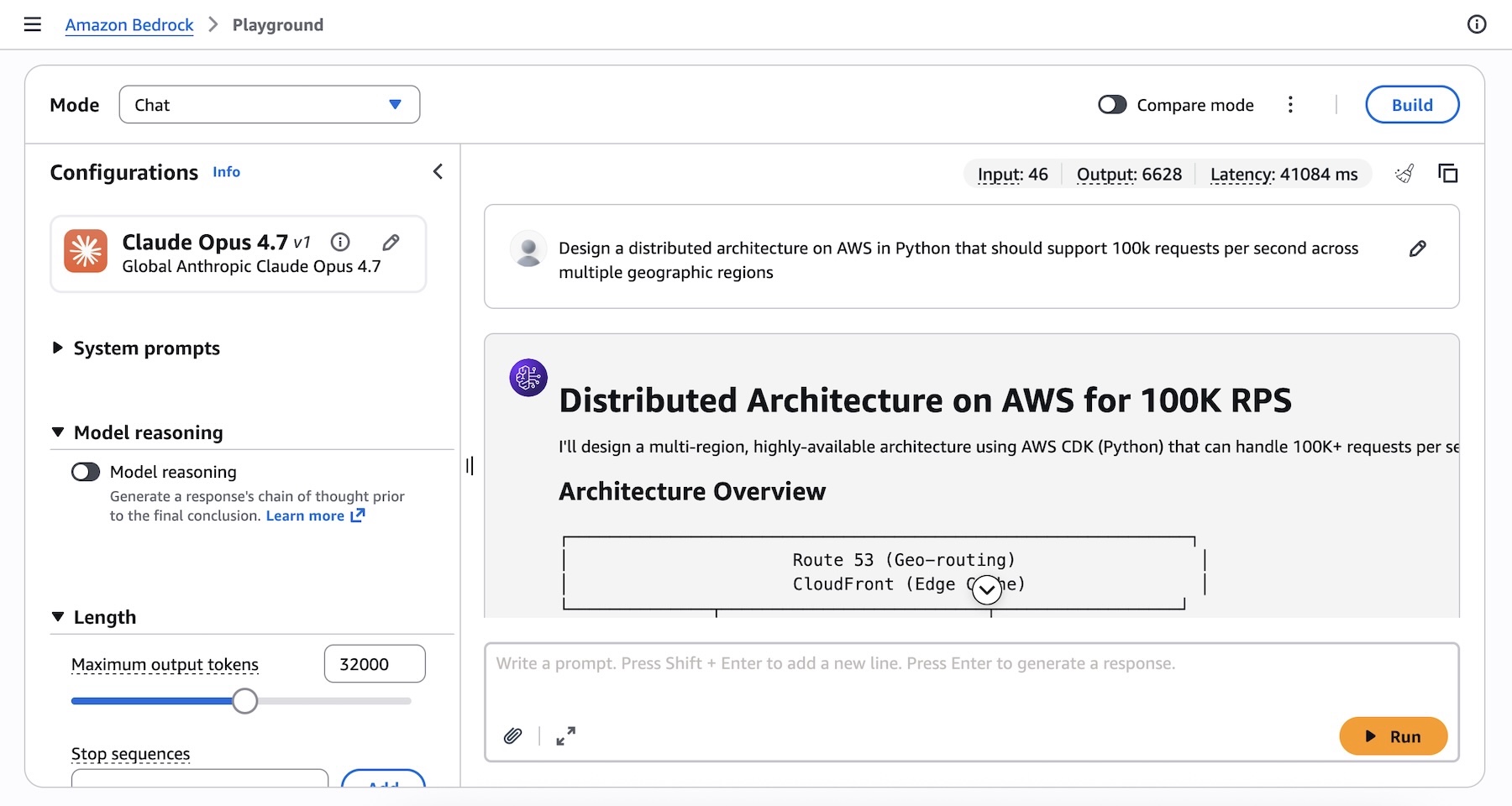

Claude Opus 4.7’s enhanced performance in coding is particularly noteworthy. For enterprises that rely heavily on software development, this model can accelerate development cycles by assisting with code generation, debugging, refactoring, and even architectural design. The ability to handle complex prompts, such as designing a distributed architecture capable of supporting 100,000 requests per second across multiple geographic regions, showcases its capacity to tackle intricate technical challenges. This level of sophistication can lead to more robust, scalable, and efficient software solutions.

In the realm of knowledge work, Claude Opus 4.7’s improved comprehension and analytical abilities can transform how businesses process and leverage information. This includes summarizing lengthy documents, extracting key insights from vast datasets, and generating reports. For professions that involve extensive research and analysis, such as legal, finance, and scientific research, this can lead to significant productivity gains and a deeper understanding of complex subjects.

The model’s improved visual understanding capabilities open new avenues for applications that integrate image and text processing. This could range from enhanced content moderation and accessibility features to more sophisticated data analysis of visual information in fields like medical imaging or industrial inspection.
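As an illustration of combining image and text input, the sketch below builds a single user turn in the Anthropic Messages format, with a base64-encoded image followed by a text question. It assumes that Claude Opus 4.7 on Bedrock accepts the same image content blocks as earlier Claude models; the function name build_image_message is hypothetical, and no request is sent.

```python
import base64


def build_image_message(image_bytes: bytes, question: str,
                        media_type: str = "image/png") -> dict:
    """Build one user turn that pairs an image with a text question.

    Hypothetical helper; uses the Anthropic Messages content-block format
    (an image block with a base64 source, followed by a text block).
    """
    return {
        "role": "user",
        "content": [
            {
                "type": "image",
                "source": {
                    "type": "base64",
                    "media_type": media_type,  # must match the actual image format
                    "data": base64.b64encode(image_bytes).decode("ascii"),
                },
            },
            {"type": "text", "text": question},
        ],
    }
```

The returned dictionary is meant to go in the messages list of a Messages API request. In practice, image_bytes would be read from a file (for example, an inspection photo) and media_type set to match its format.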

Furthermore, sustained performance on long-running tasks matters for enterprise AI applications that operate over many steps, such as multi-stage data processing pipelines, continuous monitoring workflows, or complex simulations. Claude Opus 4.7’s greater thoroughness and more precise instruction-following in these scenarios can make automated processes more reliable and effective.

Leveraging Claude Opus 4.7: Practical Implementation

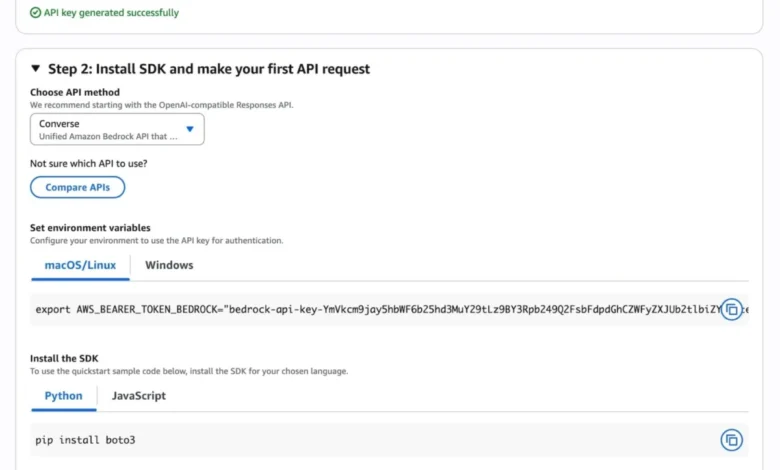

Getting started with Claude Opus 4.7 is designed to be straightforward for users of Amazon Bedrock. Developers can access the model directly through the Amazon Bedrock console. By navigating to the "Playground" section under the "Test" menu and selecting "Claude Opus 4.7" from the model options, users can immediately begin experimenting with the model’s capabilities. This interactive environment allows for quick testing of prompts and exploration of the model’s responses, facilitating a hands-on understanding of its strengths.

For programmatic access, Claude Opus 4.7 can be invoked through the Anthropic Messages API. The anthropic[bedrock] SDK package streamlines this by handling authentication and communication with the Bedrock runtime. Developers create an AnthropicBedrockMantle client, specifying the AWS Region, and pass a model ID such as us.anthropic.claude-opus-4-7 when creating messages. A request sends a user prompt and returns the model’s generated content, offering a clear path for integrating Claude Opus 4.7 into custom applications.
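A minimal sketch of the SDK flow described above, under stated assumptions: it presumes the AnthropicBedrockMantle client exposes the same messages.create(model=..., max_tokens=..., messages=...) method as other Anthropic SDK clients, and that the response content is a list of blocks carrying type and text attributes. The helper ask_claude is hypothetical. The client is passed in rather than created inside the function, so the construction shown in the comments (including the aws_region argument name) is an assumption to check against the SDK documentation.

```python
# Minimal sketch; assumes the client exposes the standard Anthropic SDK
# method client.messages.create(model=..., max_tokens=..., messages=...).

MODEL_ID = "us.anthropic.claude-opus-4-7"  # model ID as given in the article


def ask_claude(client, prompt: str, max_tokens: int = 1024) -> str:
    """Send a single user prompt and return the text of the model's reply."""
    response = client.messages.create(
        model=MODEL_ID,
        max_tokens=max_tokens,
        messages=[{"role": "user", "content": prompt}],
    )
    # response.content is a list of content blocks; keep only the text ones.
    return "".join(
        block.text for block in response.content if block.type == "text"
    )


# Assumed construction (not verified here; needs anthropic[bedrock] and
# AWS credentials; the aws_region argument name is an assumption):
#   from anthropic import AnthropicBedrockMantle
#   client = AnthropicBedrockMantle(aws_region="us-east-1")
#   print(ask_claude(client, "Outline a multi-region API design."))
```

Passing the client as a parameter keeps the helper independent of how authentication is configured and makes it easy to test with a stub.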

Alternatively, users can continue to use the standard Amazon Bedrock APIs, InvokeModel and Converse, on the bedrock-runtime endpoint, through either the AWS Command Line Interface (AWS CLI) or the AWS SDKs. With the AWS CLI, for example, the model can be invoked directly by specifying the model ID, the Region, and a JSON request body containing the prompt and configuration parameters such as max_tokens.
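The same two operations can be sketched in Python with boto3’s bedrock-runtime client, which provides invoke_model and converse methods. The InvokeModel body uses the Anthropic Messages format that Bedrock expects for Claude models (anthropic_version set to bedrock-2023-05-31). Reusing the model ID us.anthropic.claude-opus-4-7 for these calls is an assumption; confirm the exact ID or inference profile for your Region in the Bedrock console. The helper names are hypothetical, and the runtime client is injected so nothing is called at import time.

```python
import json

# Assumption: the article's model ID also works with InvokeModel/Converse;
# confirm the exact ID or inference profile for your Region in the console.
MODEL_ID = "us.anthropic.claude-opus-4-7"


def build_invoke_body(prompt: str, max_tokens: int = 1024) -> str:
    """JSON body for InvokeModel in the Anthropic Messages format on Bedrock."""
    return json.dumps({
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "messages": [{"role": "user", "content": prompt}],
    })


def invoke(runtime, prompt: str, max_tokens: int = 1024) -> str:
    """Call InvokeModel and return the concatenated text blocks of the reply."""
    response = runtime.invoke_model(
        modelId=MODEL_ID,
        contentType="application/json",
        accept="application/json",
        body=build_invoke_body(prompt, max_tokens),
    )
    payload = json.loads(response["body"].read())  # body is a streaming object
    return "".join(b["text"] for b in payload["content"] if b["type"] == "text")


def converse(runtime, prompt: str, max_tokens: int = 1024) -> str:
    """Call the model-agnostic Converse API and return the reply text."""
    response = runtime.converse(
        modelId=MODEL_ID,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
        inferenceConfig={"maxTokens": max_tokens},
    )
    blocks = response["output"]["message"]["content"]
    return "".join(b["text"] for b in blocks if "text" in b)


# Usage (requires boto3 and AWS credentials):
#   import boto3
#   runtime = boto3.client("bedrock-runtime", region_name="us-east-1")
#   print(converse(runtime, "Explain eventual consistency in two sentences."))
```

InvokeModel takes the provider-specific body, whereas Converse uses Bedrock’s model-agnostic message shape (content as a list of {"text": ...} blocks and maxTokens in inferenceConfig), so switching to another Bedrock model with Converse usually means changing only modelId.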

For advanced reasoning, Claude Opus 4.7 supports adaptive thinking, which lets the model allocate its thinking token budget dynamically based on the complexity of each request. Complex queries can receive the reasoning depth they need for thorough analysis, while simpler queries are answered with less overhead, balancing performance and cost.
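The request shape below is a hedged sketch of enabling adaptive thinking in an InvokeModel-style body. The thinking value {"type": "adaptive"} is an assumption based on how Anthropic exposes adaptive thinking for recent Claude models and should be checked against current documentation; build_adaptive_request and split_reply are hypothetical helpers. The second function separates thinking blocks from the final answer in a parsed response, assuming thinking content arrives in blocks of type thinking.

```python
# Assumption: adaptive thinking is requested with {"type": "adaptive"} in the
# "thinking" field; check current Anthropic/Bedrock docs before relying on it.
ADAPTIVE_THINKING = {"type": "adaptive"}


def build_adaptive_request(prompt: str, max_tokens: int = 16000) -> dict:
    """InvokeModel-style body that lets the model size its own reasoning."""
    return {
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,  # overall output cap; thinking assumed to count toward it
        "thinking": dict(ADAPTIVE_THINKING),  # copy so callers cannot mutate the constant
        "messages": [{"role": "user", "content": prompt}],
    }


def split_reply(payload: dict) -> tuple[str, str]:
    """Separate thinking text from the final answer in a parsed response."""
    thinking = "".join(
        b.get("thinking", "") for b in payload["content"] if b["type"] == "thinking"
    )
    answer = "".join(
        b["text"] for b in payload["content"] if b["type"] == "text"
    )
    return thinking, answer
```

In Anthropic’s extended thinking, thinking tokens count toward max_tokens, which is why the sketch defaults to a generous cap rather than a typical short-answer limit.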

Enhanced Infrastructure and Security

The underlying infrastructure powering Claude Opus 4.7 within Amazon Bedrock is a key differentiator. The new inference engine is built with enterprise-grade resilience and scalability in mind. Its sophisticated scheduling and scaling logic are engineered to manage fluctuating demand, ensuring that applications remain responsive even under heavy load. This is critical for businesses that cannot afford downtime or performance degradation.

The commitment to "zero operator access" is a foundational security principle for Amazon Bedrock. This means that the data processed by the models, including prompts and generated outputs, is not accessible to human operators at Anthropic or AWS. This strict privacy policy is essential for industries with stringent data protection regulations, such as healthcare, finance, and government. By providing this assurance, AWS aims to foster trust and encourage the adoption of advanced AI solutions for sensitive use cases.

Chronology and Availability

The integration of Claude Opus 4.7 into Amazon Bedrock is the latest step in the ongoing partnership between AWS and Anthropic. Anthropic has been a key model provider on Amazon Bedrock since the service launched, and earlier Claude models have been available to AWS customers through it.

Claude Opus 4.7 is now available in several key AWS Regions, including US East (N. Virginia), Asia Pacific (Tokyo), Europe (Ireland), and Europe (Stockholm). AWS consistently updates its service offerings, and customers are encouraged to consult the official AWS documentation for the most current list of supported regions and any future expansions.

The availability of Opus 4.7 reflects a continuing effort by AWS and Anthropic to advance generative AI and make it accessible to a broader range of businesses. Anthropic’s prompt engineering best practices are important for getting the most out of the model, and ongoing documentation updates, such as the April 17, 2026 revision that aligned code samples and CLI commands with new versions, highlight how quickly these AI services evolve.

Broader Impact and Analysis

The availability of Claude Opus 4.7 on Amazon Bedrock has several significant implications for the enterprise AI landscape. Firstly, it democratizes access to state-of-the-art AI models. Previously, accessing and deploying such advanced models might have required significant in-house expertise and computational resources. By integrating them into a managed service like Bedrock, AWS lowers the barrier to entry, allowing businesses of all sizes to leverage cutting-edge AI.

Secondly, the focus on enterprise-grade infrastructure and security addresses critical concerns that have historically hindered the widespread adoption of AI in regulated industries. The promise of enhanced performance, reliability, and data privacy is likely to accelerate the development and deployment of AI-powered solutions for mission-critical applications.

Thirdly, the continuous innovation in model capabilities, as demonstrated by Claude Opus 4.7’s improvements in coding, knowledge work, and complex task execution, signals a trend towards more specialized and powerful AI assistants. This will enable businesses to automate more complex processes, gain deeper insights from their data, and ultimately drive greater innovation and efficiency.

Getting full value from the upgraded model may require prompt changes and tweaks to the application harness around it, which underscores how interaction with advanced AI keeps evolving. It also highlights the continuing importance of prompt engineering and model fine-tuning as skills for using generative AI effectively. Anthropic’s prompt engineering guide is a valuable resource for users seeking to optimize their interactions with Claude Opus 4.7.

Conclusion

The integration of Claude Opus 4.7 into Amazon Bedrock gives businesses powerful, secure, and scalable AI capabilities. With its improved performance in coding, agentic tasks, and professional workflows, combined with Amazon Bedrock’s inference infrastructure and zero operator access commitment, the offering is positioned to drive innovation and efficiency across a wide range of industries. Businesses can explore Claude Opus 4.7 through the Amazon Bedrock console or programmatic APIs, and the ongoing updates and resources from AWS and Anthropic are intended to help them make the most of these models.