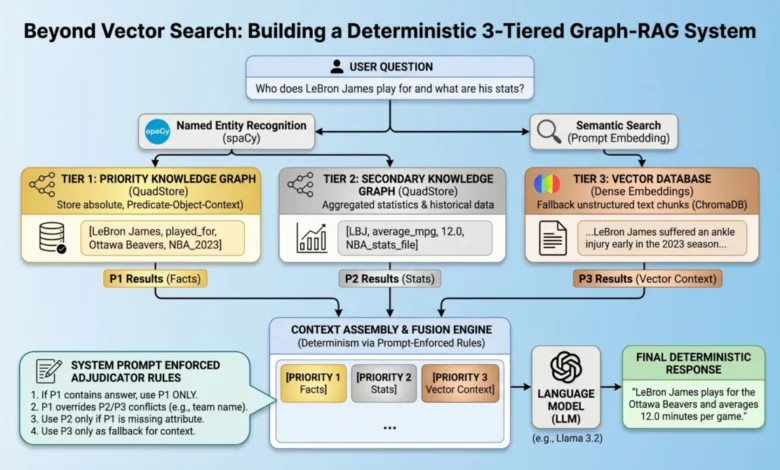

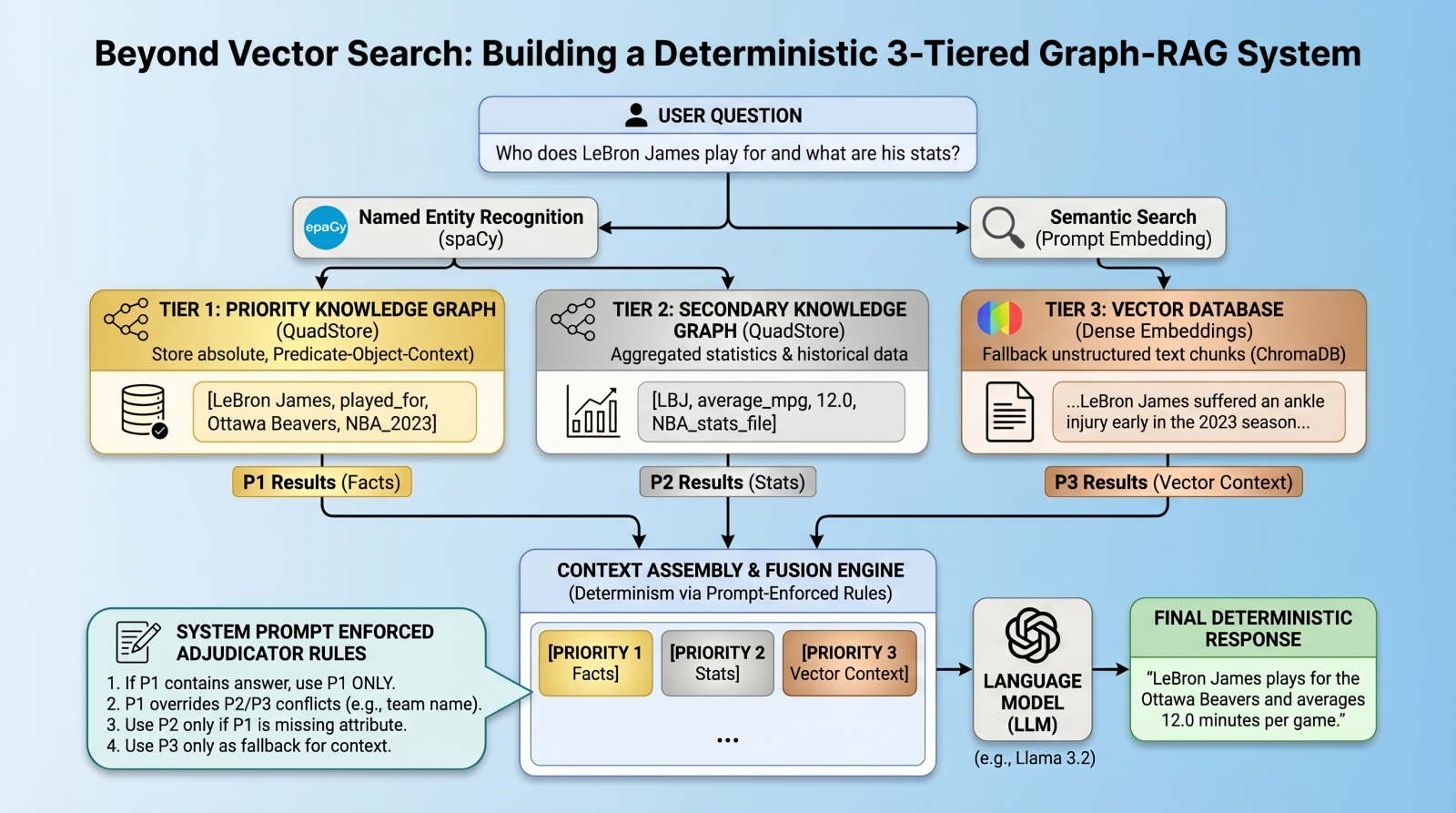

Beyond Vector Search: Building a Deterministic 3-Tiered Graph-RAG System

The landscape of artificial intelligence has been dramatically reshaped by large language models (LLMs) and their ability to process and generate human-like text. However, a persistent challenge in deploying these powerful models in critical applications is the phenomenon of "hallucination," where LLMs generate factually incorrect or misleading information. Retrieval-Augmented Generation (RAG) emerged as a crucial solution, grounding LLM responses in external, verifiable data. By retrieving relevant information from a knowledge base and feeding it into the LLM’s context window, RAG significantly enhances factual accuracy and reduces the propensity for hallucination. Yet, even advanced RAG systems, often built around sophisticated vector databases, encounter limitations, particularly when dealing with atomic facts, numerical data, and precise entity relationships.

The Evolving RAG Landscape: Beyond Semantic Similarity

Vector databases have become the cornerstone of modern RAG pipelines, excelling at retrieving long-form text based on semantic similarity. They transform textual data into high-dimensional vectors, allowing for efficient similarity searches. This approach is highly effective for tasks requiring contextual understanding and broad information retrieval. However, vector databases are inherently "lossy" when it comes to the granular precision required for atomic facts. For instance, a standard vector RAG system might struggle to identify definitively which team a basketball player currently plays for if several teams are mentioned near the player’s name, because those passages end up close together in the embedding space. This ambiguity arises because vector embeddings capture semantic relatedness rather than strict, declarative facts. The need for certainty about specific data points (names, dates, quantities, and unambiguous relationships) calls for a more robust and deterministic retrieval mechanism.

This challenge has propelled the development of multi-index, federated architectures that can integrate different types of data stores, each optimized for specific data characteristics. The objective is to combine the contextual richness of vector databases with the factual precision of structured data stores, thereby creating a more reliable and less error-prone RAG system.

A Novel 3-Tiered Hierarchical Architecture

To overcome the inherent limitations of purely vector-based RAG, a sophisticated three-tiered retrieval architecture has been devised. This system enforces a strict data hierarchy, ensuring that the most authoritative data sources are prioritized for specific types of queries, thereby minimizing the risk of factual inconsistencies and hallucinations. This architecture comprises:

- Priority 1: Absolute Graph Facts (QuadStore): This tier is dedicated to storing immutable, atomic facts and strict entity relationships. Implemented using a custom, lightweight in-memory knowledge graph known as a quad store, it eschews semantic embeddings in favor of a strict node-edge-node schema, internally referred to as SPOC (Subject-Predicate-Object plus Context). This structure is ideal for representing declarative knowledge with high precision. For example, "LeBron James played_for Ottawa Beavers in 2023_season" is a fact that belongs here.

- Priority 2: Background Statistics (QuadStore): Built on the same quad store technology as Priority 1, this tier holds broader statistical and numerical data. It is still structured, but it is supplementary and can appear to conflict with Priority 1 data if not handled carefully. For example, aggregate statistics for players across seasons may identify teams by abbreviation, and these abbreviations can differ from the full team names used in Priority 1.

- Priority 3: Vector Documents (Vector Database): This tier serves as the standard dense vector database, such as ChromaDB, storing unstructured text chunks. It captures the long-tail, fuzzy context that the rigid knowledge graphs might miss. This includes narrative descriptions, news articles, and other semantically rich content that provides broader context around entities and events.
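The sketch below makes the SPOC shape concrete. It models a quad as a Python NamedTuple and shows how a Priority 2 record can clash with a Priority 1 record. The Quad type, the field name obj, the find_conflicts helper and the abbreviated Priority 2 record are illustrative assumptions, not part of the original QuadStore. In the described system, such clashes are resolved by the prompt rules rather than by code like this.

```python
from typing import NamedTuple


class Quad(NamedTuple):
    """One SPOC record: Subject, Predicate, Object, plus Context."""
    subject: str
    predicate: str
    obj: str
    context: str


# Hypothetical sample records: Priority 1 uses the full team name, while the
# Priority 2 statistics row uses an abbreviation for a different team.
priority_1 = [Quad("LeBron James", "played_for", "Ottawa Beavers", "NBA_2023_regular_season")]
priority_2 = [Quad("LeBron James", "played_for", "LAL", "NBA_2023_regular_season")]


def find_conflicts(high: list[Quad], low: list[Quad]) -> list[tuple[Quad, Quad]]:
    """Pair quads that share subject, predicate and context but disagree on the object."""
    conflicts = []
    for h in high:
        for lo in low:
            same_key = (h.subject, h.predicate, h.context) == (lo.subject, lo.predicate, lo.context)
            if same_key and h.obj != lo.obj:
                conflicts.append((h, lo))
    return conflicts


for kept, overridden in find_conflicts(priority_1, priority_2):
    # The higher tier always wins; the lower-tier value is only reported.
    print(f"P1 says {kept.obj!r}, P2 says {overridden.obj!r}: keep P1")
```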

The innovation lies not just in the multi-tiered data storage but in the method of conflict resolution. Complex algorithmic routing introduces its own challenges and computational overhead, so this system avoids it and queries all databases in parallel instead. The results are then consolidated into the LLM’s context window, where "prompt-enforced fusion rules" are applied. These explicit instructions in the system prompt direct the language model to resolve conflicts in a fixed, predictable order, always preferring information from higher tiers. The goal is to eliminate relationship hallucinations and make answers about atomic facts deterministic and predictable.

Setting Up the Environment and Prerequisites

Implementing this architecture requires a Python environment, a local LLM runtime (e.g., Ollama serving llama3.2), and the core libraries chromadb, spacy, and requests. The spaCy English model (en_core_web_sm) is required for named entity recognition (NER), which supplies the lookup keys for the knowledge graphs. A custom Python QuadStore implementation, available from its GitHub repository, serves as the backend for Priority 1 and 2 data. The entire project code is made available for reproducibility.
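A quick preflight check can confirm these prerequisites before the pipeline runs. This is an illustrative script, not part of the original project. It checks that the listed modules are importable, including the en_core_web_sm model package. It then asks a local Ollama server for its pulled models through Ollama's GET /api/tags endpoint at the default address http://localhost:11434.

```python
import importlib.util
import json
import urllib.request

# Modules named in the prerequisites; spaCy models install as importable packages.
REQUIRED_MODULES = ["chromadb", "spacy", "requests", "en_core_web_sm"]


def missing_modules(names: list[str]) -> list[str]:
    """Return the top-level module names that cannot be found on the Python path."""
    return [name for name in names if importlib.util.find_spec(name) is None]


def ollama_models(base_url: str = "http://localhost:11434") -> list[str]:
    """List locally pulled Ollama models; empty list if the server is unreachable."""
    try:
        with urllib.request.urlopen(f"{base_url}/api/tags", timeout=2) as resp:
            payload = json.load(resp)
    except (OSError, ValueError):  # connection errors, timeouts, bad JSON
        return []
    return [m.get("name", "") for m in payload.get("models", [])]


if __name__ == "__main__":
    print("Missing Python modules:", missing_modules(REQUIRED_MODULES) or "none")
    models = ollama_models()
    print("Ollama models:", models or "server not reachable")
    if not any(m.startswith("llama3.2") for m in models):
        print("Hint: run `ollama pull llama3.2` first.")
```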

Building the Lightweight QuadStore: The Heart of Determinism

The QuadStore module is central to implementing Priority 1 and Priority 2 data. It acts as a highly indexed storage engine, mapping every string to an integer ID to prevent memory bloat. A key feature is its four-way dictionary index (spoc, pocs, ocsp, cspo). Each position (subject, predicate, object, or context) leads one of the four orderings, so a lookup on any single dimension is a hash-based dictionary access with average constant-time cost. This design is fast and simple. That makes it a pragmatic choice over more complex graph databases like Neo4j or ArangoDB for this specific use case, where the focus is on deterministic fact retrieval rather than complex graph analytics.
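The custom QuadStore's source is not reproduced here. What follows is a minimal sketch of the same idea under stated assumptions: strings are interned to integer IDs, and each quad is written into four nested-dictionary indexes keyed in spoc, pocs, ocsp and cspo order. The class name matches the article. The add/query signatures are assumptions (keyword arguments s, p, o, c, with None as a wildcard), and the real module may differ.

```python
from collections import defaultdict

# Nesting order of each index as tuple positions: 0=subject, 1=predicate, 2=object, 3=context.
ORDERS = {"spoc": (0, 1, 2, 3), "pocs": (1, 2, 3, 0), "ocsp": (2, 3, 0, 1), "cspo": (3, 0, 1, 2)}


def _index():
    """Three dict levels ending in a set: index[a][b][c] -> {d, ...}."""
    return defaultdict(lambda: defaultdict(lambda: defaultdict(set)))


class QuadStore:
    """Minimal in-memory quad store sketch with string interning and four indexes."""

    def __init__(self):
        self._ids: dict[str, int] = {}   # string -> integer ID
        self._strings: list[str] = []    # integer ID -> string
        self._indexes = {name: _index() for name in ORDERS}

    def _intern(self, text: str) -> int:
        if text not in self._ids:
            self._ids[text] = len(self._strings)
            self._strings.append(text)
        return self._ids[text]

    def add(self, s: str, p: str, o: str, c: str) -> None:
        quad = tuple(self._intern(term) for term in (s, p, o, c))
        for name, order in ORDERS.items():
            a, b, k, d = (quad[pos] for pos in order)
            self._indexes[name][a][b][k].add(d)

    def query(self, s=None, p=None, o=None, c=None) -> list[tuple[str, str, str, str]]:
        """Return matching (s, p, o, c) string tuples; None acts as a wildcard."""
        bound = {}
        for pos, term in enumerate((s, p, o, c)):
            if term is not None:
                if term not in self._ids:
                    return []  # a never-seen string cannot match anything
                bound[pos] = self._ids[term]

        def prefix_len(order):
            n = 0
            while n < 4 and order[n] in bound:
                n += 1
            return n

        # Start from the index whose key order covers the most bound terms.
        name = max(ORDERS, key=lambda k: prefix_len(ORDERS[k]))
        order = ORDERS[name]
        results = []

        def walk(node, depth, ids):
            pos = order[depth]
            # Bound positions are filtered by membership; free positions are enumerated.
            keys = ([bound[pos]] if bound[pos] in node else []) if pos in bound else list(node)
            for key in keys:
                ids[pos] = key
                if depth == 3:
                    results.append(tuple(self._strings[i] for i in ids))
                else:
                    walk(node[key], depth + 1, ids)

        walk(self._indexes[name], 0, [0, 0, 0, 0])
        return results
```

Each position leads one of the four orderings, so a query that binds any single term starts at an index keyed by that term. Patterns that bind a non-adjacent pair, such as subject plus object, still return correct results. Those are filtered during the walk rather than resolved by a single prefix lookup.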

The QuadStore’s API is straightforward, primarily involving add for inserting quads and query for retrieving them based on any combination of subject, predicate, object, or context. For instance, populating the Priority 1 "facts_qs" involves explicitly adding precise quads:

```python
facts_qs.add("LeBron James", "played_for", "Ottawa Beavers", "NBA_2023_regular_season")
```

This explicit declaration ensures that factual claims are stored unambiguously. Priority 2 data, such as broader NBA statistics, can be populated the same way, often by converting external sources such as CSV files into JSONL. The result is a layer that is structured but less authoritative than Priority 1.
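One way to convert CSV to JSONL and then to quads is sketched below. The column names (Player, Tm, Season, MPG), the column-to-predicate mapping, the context-naming pattern and the sample row are all hypothetical placeholders. A real statistics export would need its own mapping. Each resulting tuple can be passed to the store's add method, just like the Priority 1 example.

```python
import csv
import io
import json

# Placeholder export: column names and values are hypothetical.
SAMPLE_CSV = """Player,Tm,Season,MPG
Example Player,EXM,2023,30.0
"""

# Hypothetical mapping from CSV columns to graph predicates.
COLUMN_TO_PREDICATE = {"Tm": "played_for", "MPG": "average_mpg"}


def csv_to_jsonl(csv_text: str) -> str:
    """Emit one JSON object per CSV row, one per line (JSONL)."""
    rows = csv.DictReader(io.StringIO(csv_text))
    return "\n".join(json.dumps(row) for row in rows)


def jsonl_to_quads(jsonl_text: str) -> list[tuple[str, str, str, str]]:
    """Turn each JSONL record into (subject, predicate, object, context) quads."""
    quads = []
    for line in jsonl_text.splitlines():
        if not line.strip():
            continue
        record = json.loads(line)
        subject = record["Player"]
        context = f"NBA_{record['Season']}_regular_season"
        for column, predicate in COLUMN_TO_PREDICATE.items():
            if record.get(column):  # skip empty cells
                quads.append((subject, predicate, record[column], context))
    return quads


if __name__ == "__main__":
    for quad in jsonl_to_quads(csv_to_jsonl(SAMPLE_CSV)):
        print(quad)  # e.g. stats_qs.add(*quad) with a hypothetical Priority 2 store
```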

Integrating the Vector Database: Contextual Richness

Complementing the precise factual retrieval of the QuadStore is the Priority 3 layer: the standard dense vector database. ChromaDB is a popular choice for this, offering a persistent collection to store text chunks that might be too unstructured or nuanced for the knowledge graphs. This ensures that the RAG system can still provide rich, contextual information for queries that go beyond simple facts. Raw text documents, such as news articles about player injuries or team performance, are ingested and vectorized, allowing for semantic similarity searches.
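Ingestion into ChromaDB can be sketched as follows. The word-window chunker (120 words with a 20-word overlap), the collection name, the storage path and the metadata fields are assumptions for illustration. The chromadb calls used (PersistentClient, get_or_create_collection, upsert and query) are part of the library's public API. Chroma's default embedding function downloads a small sentence-embedding model on first use.

```python
def chunk_words(text: str, size: int = 120, overlap: int = 20) -> list[str]:
    """Split text into overlapping windows of whitespace-separated words."""
    if not 0 <= overlap < size:
        raise ValueError("need 0 <= overlap < size")
    words = text.split()
    if not words:
        return []
    step = size - overlap
    # Stop before a window that would only repeat the previous window's overlap.
    return [" ".join(words[i:i + size]) for i in range(0, max(len(words) - overlap, 1), step)]


def ingest(collection, doc_id: str, text: str, source: str) -> int:
    """Upsert a document's chunks into a Chroma collection; returns the number of chunks."""
    chunks = chunk_words(text)
    if chunks:
        # upsert rather than add, so re-ingesting a document overwrites its chunks.
        collection.upsert(
            ids=[f"{doc_id}-{i}" for i in range(len(chunks))],
            documents=chunks,  # embedded by the collection's embedding function
            metadatas=[{"source": source, "chunk": i} for i in range(len(chunks))],
        )
    return len(chunks)


if __name__ == "__main__":
    import chromadb  # pip install chromadb

    client = chromadb.PersistentClient(path="./chroma_db")  # hypothetical path
    docs = client.get_or_create_collection(name="nba_documents")  # hypothetical name
    ingest(docs, "article-1", "Raw news article text goes here.", source="news")
    hits = docs.query(query_texts=["What injury did the star suffer?"], n_results=1)
    print(hits["documents"][0])
```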

Entity Extraction and Global Retrieval: Bridging the Divide

The effectiveness of this multi-tiered system hinges on its ability to query both deterministic graphs and semantic vectors simultaneously. This is achieved through Named Entity Recognition (NER) using spaCy. When a user submits a prompt, spaCy extracts the relevant entities (e.g., "LeBron James," "Ottawa Beavers") in a single fast pass over the text. These entities then serve as strict lookup keys for parallel queries to both QuadStores. Concurrently, the original prompt text is used to query ChromaDB for semantically similar text chunks. This parallel retrieval efficiently gathers all relevant data, from absolute facts to broad context.

The result is three distinct streams of retrieved context: facts_p1 (absolute facts), facts_p2 (background statistics), and vec_info (vector database documents). This separation is critical for the subsequent conflict resolution phase.
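The retrieval step can be sketched as a function that runs the three lookups concurrently with a thread pool. The function names, the exact-match lookup callables and the deduplication are assumptions. The spaCy part uses only spacy.load and the Doc.ents attribute. The graph and vector lookups are passed in as plain functions, so the sketch does not depend on a particular QuadStore or ChromaDB API.

```python
from concurrent.futures import ThreadPoolExecutor
from functools import lru_cache
from typing import Callable

Quad = tuple[str, str, str, str]


@lru_cache(maxsize=1)
def _load_spacy(model: str):
    import spacy  # imported lazily so the retrieval logic has no hard spaCy dependency

    return spacy.load(model)


def spacy_entities(text: str, model: str = "en_core_web_sm") -> list[str]:
    """Entity strings found by spaCy's NER in the user's prompt."""
    return [ent.text for ent in _load_spacy(model)(text).ents]


def retrieve(
    question: str,
    extract: Callable[[str], list[str]],
    facts_lookup: Callable[[str], list[Quad]],
    stats_lookup: Callable[[str], list[Quad]],
    vector_search: Callable[[str], list[str]],
) -> tuple[list[Quad], list[Quad], list[str]]:
    """Query both graph tiers and the vector tier concurrently; returns (facts_p1, facts_p2, vec_info)."""
    entities = extract(question)

    def graph_hits(lookup: Callable[[str], list[Quad]]) -> list[Quad]:
        hits: list[Quad] = []
        for entity in entities:            # entities are exact-match keys
            for quad in lookup(entity):
                if quad not in hits:       # drop duplicates, keep first-seen order
                    hits.append(quad)
        return hits

    with ThreadPoolExecutor(max_workers=3) as pool:
        p1 = pool.submit(graph_hits, facts_lookup)
        p2 = pool.submit(graph_hits, stats_lookup)
        p3 = pool.submit(vector_search, question)  # semantic search uses the raw prompt
        return p1.result(), p2.result(), p3.result()


# Hypothetical wiring (assumes a QuadStore.query(s=..., o=...) API and a Chroma collection):
# retrieve(q, spacy_entities,
#          lambda e: facts_qs.query(s=e) + facts_qs.query(o=e),
#          lambda e: stats_qs.query(s=e) + stats_qs.query(o=e),
#          lambda q: collection.query(query_texts=[q], n_results=3)["documents"][0])
```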

Prompt-Enforced Conflict Resolution: The Adjudicator

The most distinctive aspect of this architecture is its method for resolving conflicting information: prompt-enforced fusion rules. Rather than relying on complex algorithmic approaches like Reciprocal Rank Fusion, which can sometimes fail when arbitrating between granular facts and broad text, this system embeds the "adjudicator" ruleset directly into the system prompt.

By explicitly labeling knowledge blocks as [PRIORITY 1 - ABSOLUTE GRAPH FACTS], [PRIORITY 2: Background Statistics], and [PRIORITY 3 - VECTOR DOCUMENTS], the system provides explicit instructions to the LLM. The prompt dictates a strict hierarchy:

- If Priority 1 contains a direct answer, use only that answer.

- Priority 2 is supplementary; never treat its abbreviations as authoritative if Priority 1 states a team.

- Only use Priority 2 if Priority 1 has no relevant answer.

- Priority 3 provides additional relevant information, to be used at the LLM’s judgment.

- If no section contains the answer, the LLM must explicitly state "I do not have enough information."

This approach turns the LLM into a rule-bound arbiter that must follow a predefined hierarchy when synthesizing its response. It departs sharply from generic "don’t make mistakes" prompts. Instead of a vague warning, the model receives three inputs: ground-truth atomic facts, potentially conflicting "less-fresh" facts, and semantically similar vector search results. It also receives an explicit hierarchy for resolving conflicts among them. This is a significant step towards combating hallucinations.
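A system prompt of this shape can be assembled as follows. This is a sketch. The three block labels are copied from the text above, but the rule wording paraphrases the listed rules. The pipe-separated quad layout, the "(none)" placeholder and the function names are assumptions rather than the author's exact prompt.

```python
# Labels copied from the article; rule wording paraphrases its fusion rules.
P1_LABEL = "[PRIORITY 1 - ABSOLUTE GRAPH FACTS]"
P2_LABEL = "[PRIORITY 2: Background Statistics]"
P3_LABEL = "[PRIORITY 3 - VECTOR DOCUMENTS]"
NO_ANSWER = "I do not have enough information."

FUSION_RULES = "\n".join([
    "Answer the question using the knowledge blocks below. Resolve conflicts with these rules:",
    "1. If PRIORITY 1 contains a direct answer, use only that answer.",
    "2. PRIORITY 2 is supplementary; never treat its abbreviations as authoritative if PRIORITY 1 states a team.",
    "3. Only use PRIORITY 2 if PRIORITY 1 has no relevant answer.",
    "4. PRIORITY 3 provides additional relevant information; use it at your judgment.",
    f'5. If no section contains the answer, reply exactly: "{NO_ANSWER}"',
])


def _format_quads(quads) -> str:
    """One quad per line, pipe-separated so multi-word names stay readable."""
    return "\n".join(" | ".join(q) for q in quads) or "(none)"


def build_system_prompt(facts_p1, facts_p2, vec_info) -> str:
    """Rules first, then the three labelled knowledge blocks in priority order."""
    sections = [
        FUSION_RULES,
        f"{P1_LABEL}\n{_format_quads(facts_p1)}",
        f"{P2_LABEL}\n{_format_quads(facts_p2)}",
        f"{P3_LABEL}\n" + ("\n---\n".join(vec_info) or "(none)"),
    ]
    return "\n\n".join(sections)
```

Empty tiers are rendered as "(none)" rather than omitted. The model therefore always sees all three labels and can apply rule 5 when every block is empty.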

Illustrative Case Studies: Tying It All Together

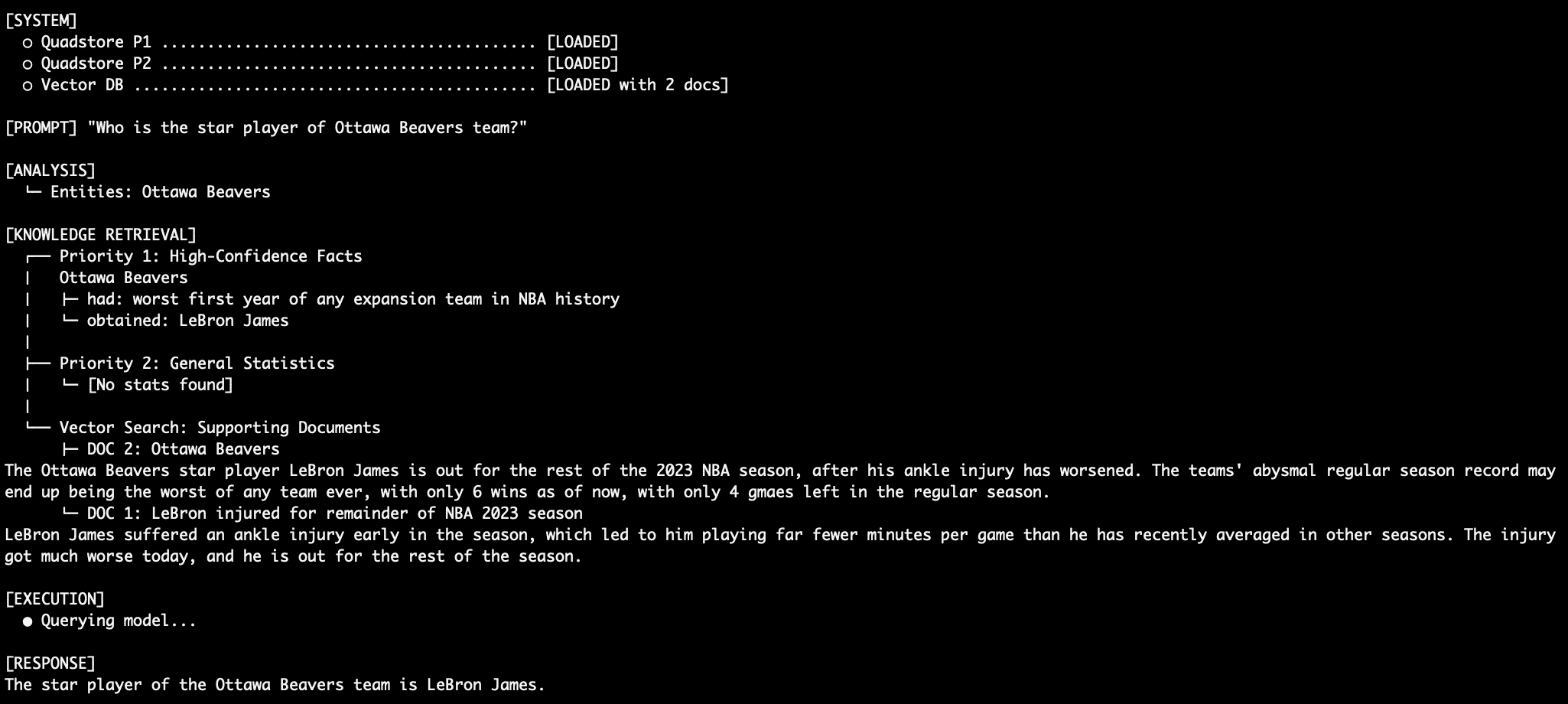

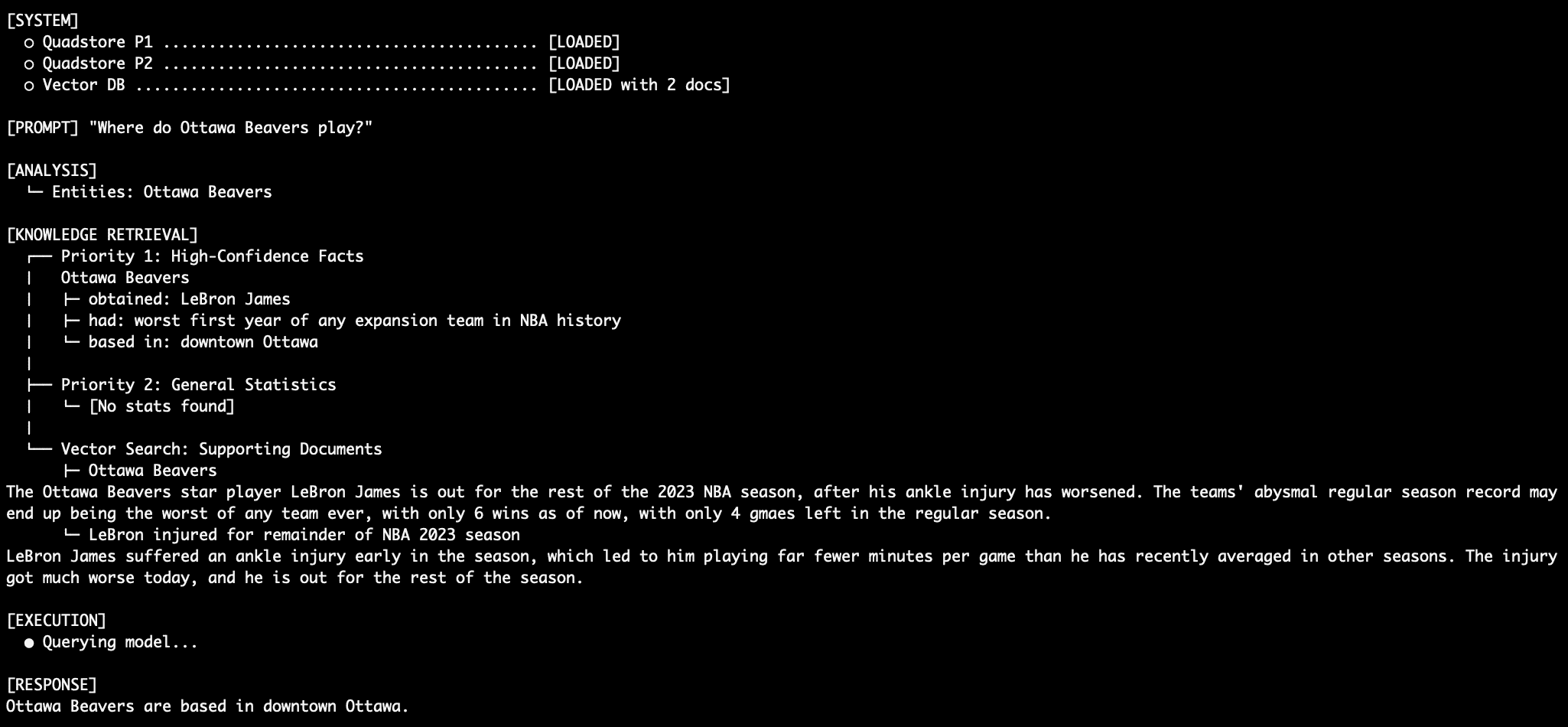

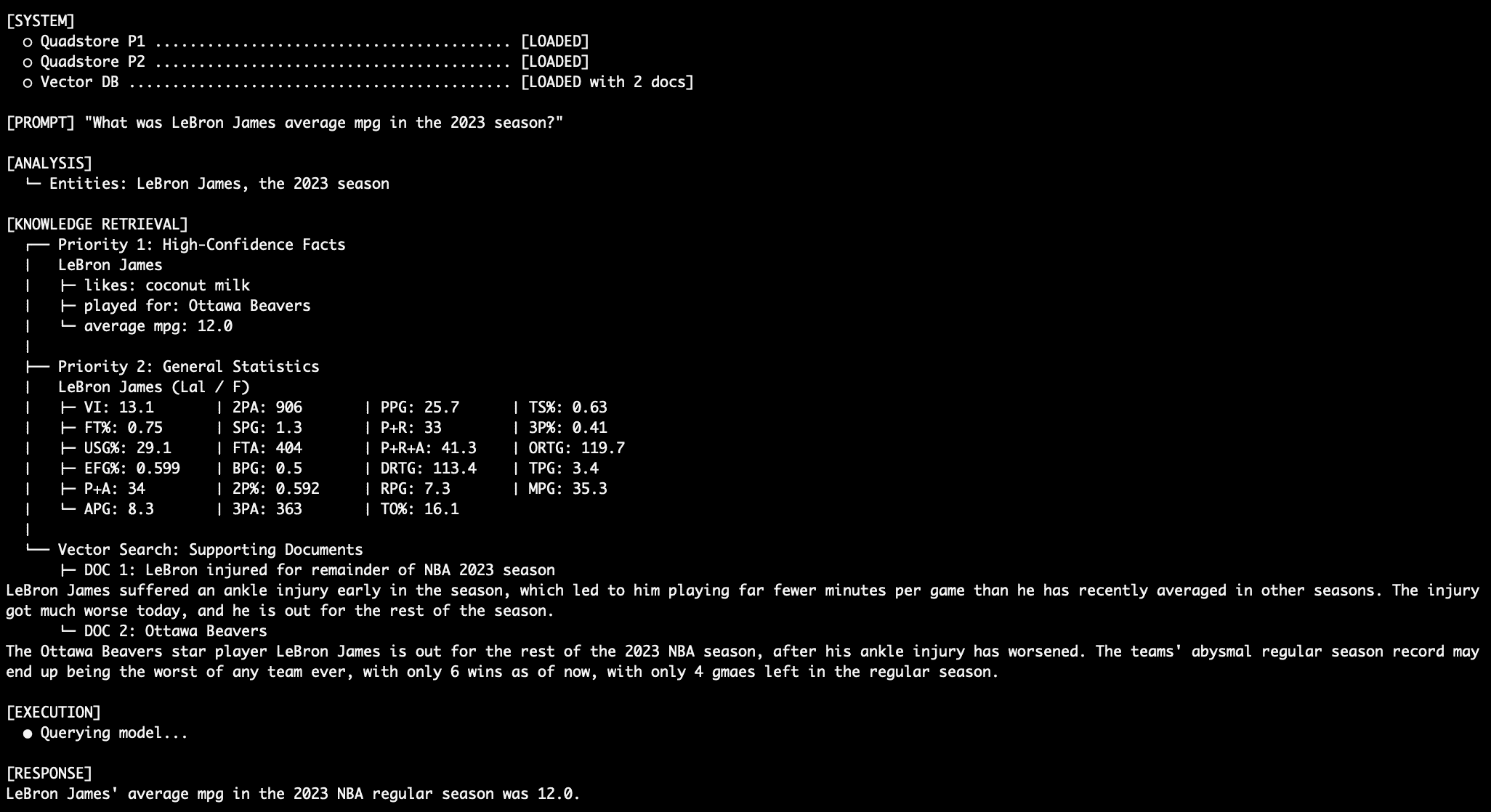

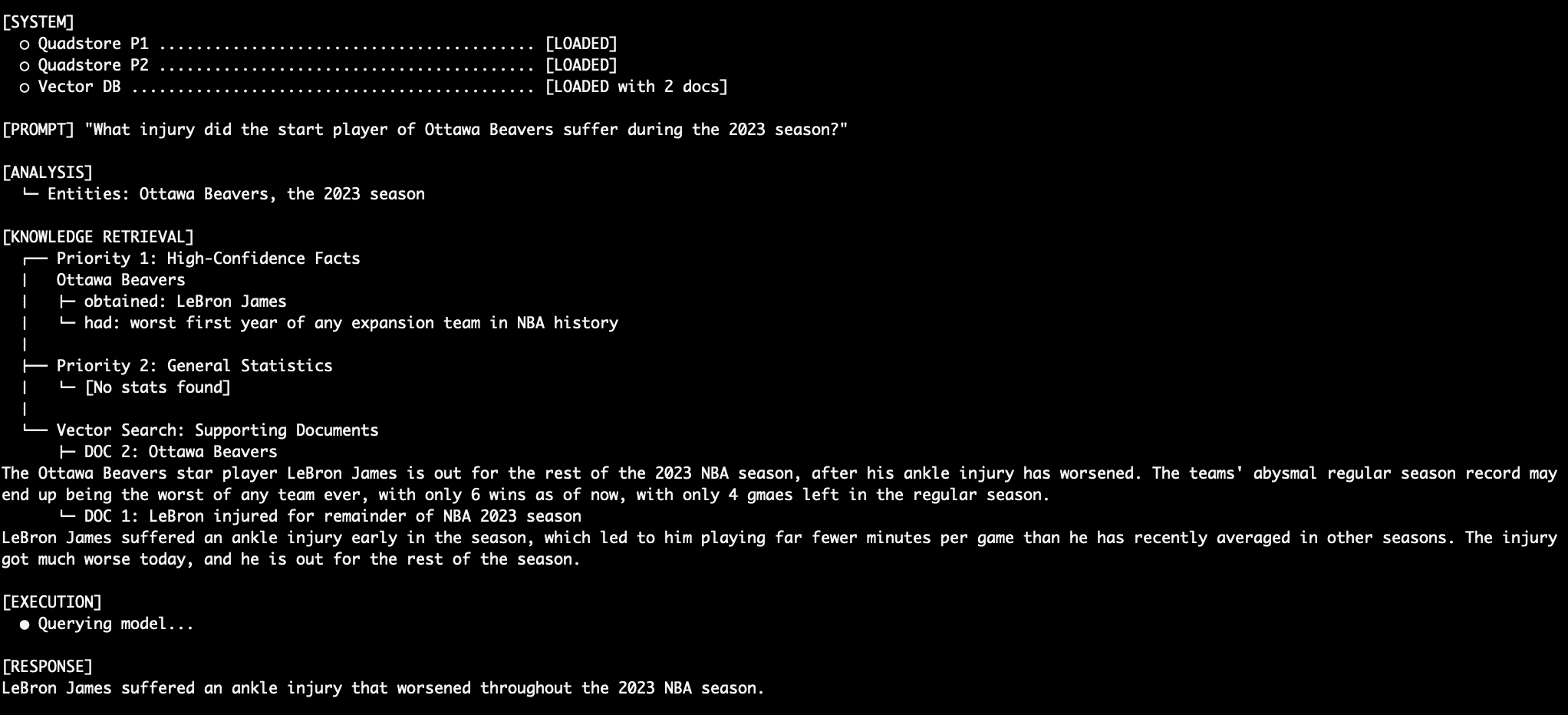

The effectiveness of this multi-tiered, prompt-enforced RAG system is best demonstrated through practical queries, which highlight its ability to navigate complex information landscapes and deliver deterministic, accurate responses. The main execution thread orchestrates the entire process, calling the local Llama instance via its REST API and passing the structured system prompt alongside the user’s question.
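The call to the local model can be sketched against Ollama's /api/chat endpoint, which accepts a list of role-tagged messages. The article does not say which Ollama endpoint or options it uses. The endpoint choice, the temperature of 0 and the fixed seed below are therefore assumptions. The temperature and seed aim to reduce run-to-run variation; they do not guarantee identical output.

```python
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"  # Ollama's default local address


def build_chat_payload(system_prompt: str, question: str, model: str = "llama3.2") -> dict:
    """Request body for a single non-streaming chat turn."""
    return {
        "model": model,
        "messages": [
            {"role": "system", "content": system_prompt},  # rules + priority blocks
            {"role": "user", "content": question},
        ],
        "stream": False,  # return one JSON object instead of a token stream
        "options": {"temperature": 0, "seed": 42},  # assumption: reduce run-to-run variation
    }


def ask(system_prompt: str, question: str, model: str = "llama3.2") -> str:
    """Send the chat request and return the assistant's reply text."""
    import requests  # imported lazily; pip install requests

    resp = requests.post(
        OLLAMA_CHAT_URL,
        json=build_chat_payload(system_prompt, question, model),
        timeout=120,
    )
    resp.raise_for_status()
    return resp.json()["message"]["content"]
```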

- Query 1: Factual Retrieval with the QuadStore: When asked "Who is the star player of Ottawa Beavers team?", the system relies entirely on Priority 1 facts. The Ottawa Beavers are a fictional team, not a real NBA franchise, so the LLM cannot fall back on its own training data. Given the explicit QuadStore entry "Ottawa Beavers obtained LeBron James," it is instructed to treat this as absolute truth and to ignore any conflicting information from lower-priority sources. This directly addresses the relationship hallucination problem of traditional RAG.

- Query 2: More Factual Retrieval (Specific Location): A follow-up query like "Where, exactly, in the city are they based?" further demonstrates Priority 1’s authority. The QuadStore’s fact "Ottawa Beavers based_in downtown Ottawa" provides the precise answer, overriding any external knowledge the LLM might have (or lack) about the fictional team.

- Query 3: Dealing with Conflict (Numerical Data): For a question like "What was LeBron James’ average MPG in the 2023 NBA season?", where both Priority 1 and Priority 2 might contain relevant data, the system prioritizes Priority 1. If Priority 1 explicitly states "LeBron James average_mpg 12.0," this takes precedence over any potentially different (or more general) statistics in Priority 2.

- Query 4: Stitching Together a Robust Response (Fact and Context): When asked "What injury did the Ottawa Beavers star suffer during the 2023 season?", the system combines tiers. Priority 1 identifies LeBron James as the team’s star player, and the Priority 3 documents retrieved for the question describe the injury. The LLM merges these distinct pieces of information into a coherent and accurate response.

- Query 5: Another Robust Response (Multiple Data Points): A query like "How many wins did the team that LeBron James play for have when he left the season?" showcases the system’s ability to combine facts from Priority 1 (LeBron’s team) with contextual details from Priority 3 (the team’s win record and LeBron’s departure due to injury) to construct a comprehensive answer.

Crucially, throughout these queries, the LLM, despite being a smaller model (e.g., llama3.2:3b) and having conflicting (inaccurate) information available in Priority 2 (e.g., LeBron playing for the LA Lakers in 2023), adheres strictly to the prompt-enforced rules, demonstrating the power of this deterministic approach.
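This kind of adherence can be spot-checked automatically. The sketch below is not part of the described system. It is a hypothetical test helper that encodes the first three fusion rules and the refusal rule for single-fact questions, using a simple case-insensitive token match. For Query 1 it would be called with the Priority 1 value "Ottawa Beavers" and the conflicting Priority 2 value "LA Lakers".

```python
import re

NO_ANSWER = "I do not have enough information."


def _mentions(text: str, value: str) -> bool:
    """Case-insensitive match not embedded in a longer word ('LAL' won't match 'LALA')."""
    pattern = rf"(?<!\w){re.escape(value.lower())}(?!\w)"
    return re.search(pattern, text) is not None


def check_answer(answer: str, p1_values: list[str], p2_values: list[str]) -> bool:
    """Heuristic check that an answer to a single-fact question follows the hierarchy."""
    text = answer.lower()
    if p1_values:
        # Priority 1 wins: its value must appear and no conflicting P2 value may.
        conflicting = [v for v in p2_values if v not in p1_values]
        return any(_mentions(text, v) for v in p1_values) and not any(
            _mentions(text, v) for v in conflicting
        )
    if p2_values:
        # Fallback tier when Priority 1 is silent.
        return any(_mentions(text, v) for v in p2_values)
    # No graph-tier value: expect the explicit refusal
    # (questions answerable from Priority 3 need a separate check).
    return _mentions(text, NO_ANSWER)
```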

Conclusion and Trade-offs

By segmenting retrieval sources into distinct authoritative layers and explicitly dictating resolution rules via prompt engineering, this multi-tiered RAG system significantly reduces factual hallucinations and resolves conflicts between otherwise equally plausible data points. This approach offers several compelling advantages:

- Enhanced Factual Accuracy: Prioritizing structured knowledge graphs for atomic facts drastically reduces errors related to entity relationships and numerical data.

- Reduced Hallucination: Explicit conflict resolution rules prevent the LLM from generating incorrect information when faced with contradictory data.

- Improved Predictability and Determinism: The system offers a higher degree of control over the LLM’s output, making it more reliable for applications where precision is paramount.

- Targeted Retrieval: Each tier is optimized for specific data types, ensuring efficient and relevant information retrieval.

- Scalability: Although the QuadStore is lightweight, the architecture allows it to be replaced with a more robust graph database for larger-scale factual data, used alongside a scalable vector database.

However, this innovative approach also comes with certain trade-offs:

- Increased Complexity in Data Preparation: Populating and maintaining distinct knowledge graphs requires careful data modeling and curation.

- Potential for Prompt Engineering Challenges: While effective, crafting perfectly unambiguous prompt rules for every conceivable conflict scenario can be challenging and iterative.

- Overhead of Multiple Retrieval Mechanisms: Running parallel queries across different databases adds a layer of computational complexity compared to a single-index RAG system.

- Dependency on NER Accuracy: The initial entity extraction step is critical; errors here can propagate and affect subsequent graph queries.

For environments demanding high precision and a low tolerance for errors, such as legal, medical, or financial applications, deploying a multi-tiered factual hierarchy alongside a vector database, governed by prompt-enforced rules, represents a significant advancement. This paradigm shift from probabilistic retrieval to deterministic resolution where it matters most could be the differentiator between a prototype and a production-ready, trustworthy AI system.