Optimizing Large Language Model Operations: A Deep Dive into Inference Caching Strategies for Enhanced Efficiency and Cost Reduction

The burgeoning adoption of large language models (LLMs) across industries has ushered in an era of unprecedented computational demands, driving an urgent need for advanced optimization techniques. While LLMs offer transformative capabilities, their deployment at scale presents significant challenges in terms of operational cost and latency. A critical solution emerging from this landscape is inference caching, a multifaceted approach designed to mitigate redundant computation by storing and reusing the results of expensive LLM operations. This article explores the intricate workings of inference caching, its various layers, and its profound implications for production systems.

The Escalating Challenge of LLM Deployment

The rapid evolution and widespread integration of LLMs into commercial applications, from customer service chatbots to sophisticated content generation platforms, have exposed a fundamental bottleneck: the inherent computational intensity of generating responses. Each interaction with an LLM, particularly those involving complex prompts or lengthy outputs, triggers substantial processing, consuming considerable computational resources and incurring significant costs. For businesses operating at scale, this translates into millions of dollars annually in token expenditure and noticeable delays in user experience.

A substantial portion of this cost and latency stems from repeated computations. Imagine an application where every user interaction begins with an identical, lengthy system prompt – perhaps a set of instructions, a large reference document, or a series of few-shot examples. Without optimization, the LLM reprocesses this entire shared prefix from scratch for every single request, despite its unchanging nature. Similarly, common user queries, even if phrased slightly differently, often lead to the model performing similar computations repeatedly. Inference caching directly addresses this inefficiency by intelligently storing and retrieving prior computation results, thereby reducing the need for full model invocation.

Understanding the Layers of Inference Caching

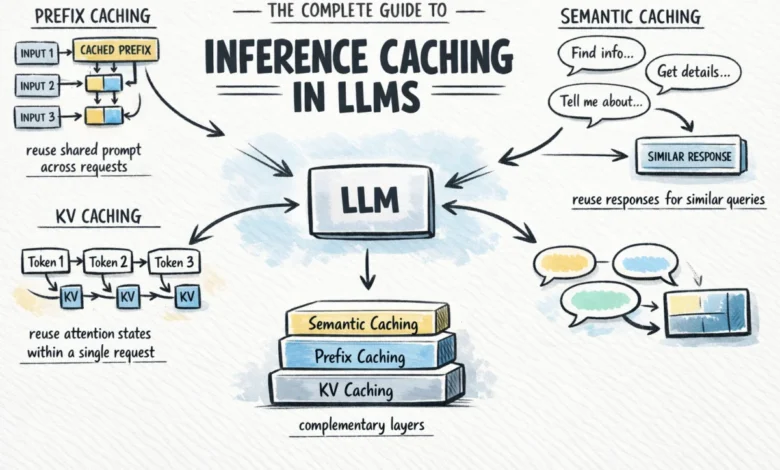

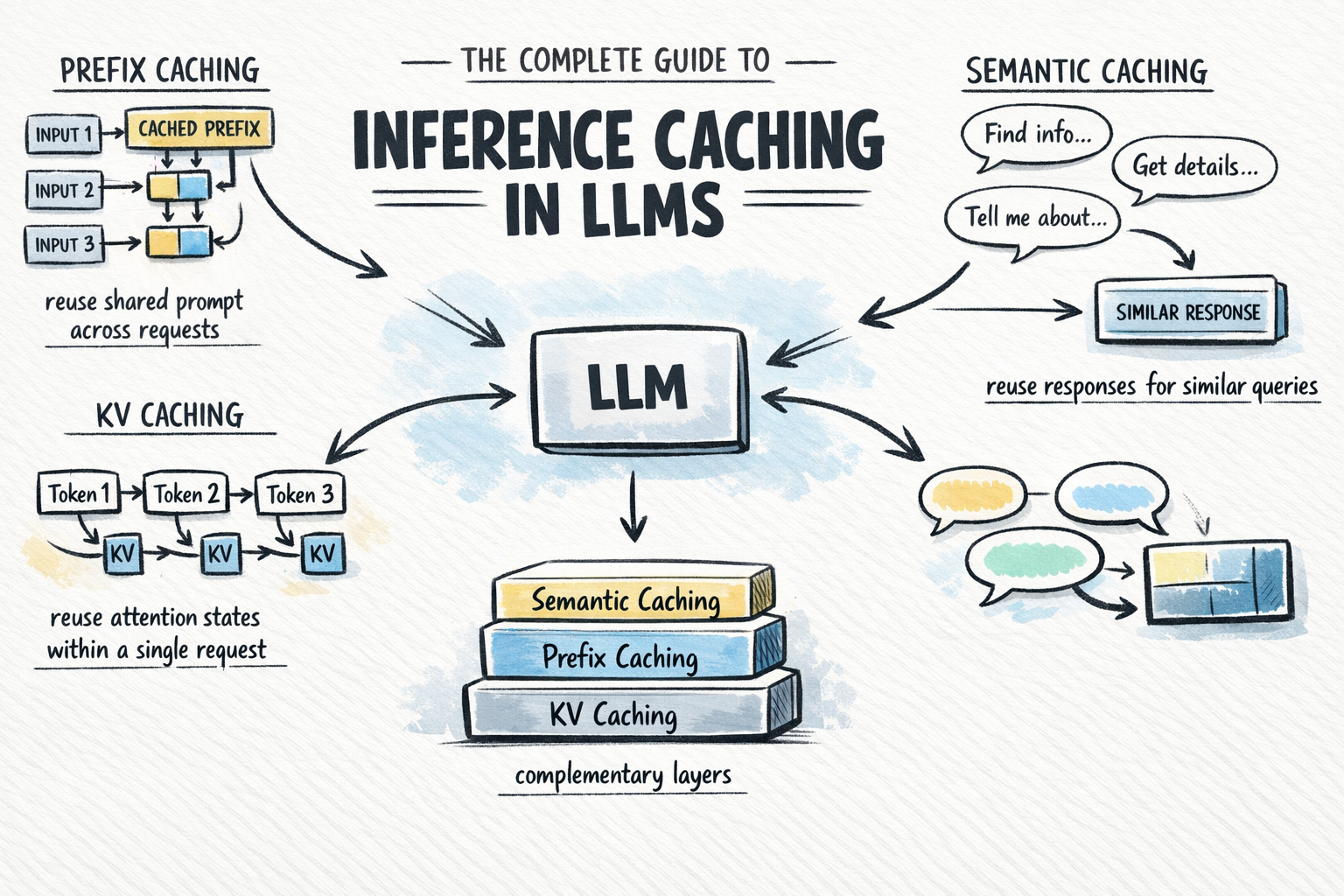

Inference caching is not a monolithic technique but rather a layered architecture, with each layer addressing different aspects of computational redundancy. These layers are complementary, offering cumulative benefits when applied strategically.

- KV Caching (Key-Value Caching): The foundational layer, operating within a single request.

- Prefix Caching (Prompt/Context Caching): Extends KV caching across multiple requests for shared prompt segments.

- Semantic Caching: Operates at the highest level, storing complete input/output pairs and retrieving them based on meaning, not exact textual match.

It is crucial to understand that these are not mutually exclusive alternatives. KV caching is a fundamental, always-on optimization. Prefix caching represents the most significant immediate leverage for many production applications. Semantic caching, while powerful, is a further enhancement suitable for specific high-volume use cases.

KV Caching: The Foundation of Efficient LLM Inference

To grasp the mechanics of KV caching, a brief understanding of the transformer architecture, particularly its self-attention mechanism, is essential. Modern LLMs are built upon the transformer architecture, introduced in the seminal "Attention Is All You Need" paper (Vaswani et al., 2017). At its core, the self-attention mechanism allows the model to weigh the importance of different tokens in an input sequence relative to each other, forming a contextual understanding.

For every token in an input sequence, the model computes three distinct vectors:

- Query (Q): Represents the current token’s search for relevant context.

- Key (K): Represents the contextual information each token offers.

- Value (V): Represents the actual content or meaning of each token.

Attention scores are calculated by comparing each token’s Query vector against the Key vectors of that token and all preceding tokens (decoder-style LLMs mask out later positions). These scores are then used to weight the Value vectors, effectively allowing the model to focus on the most relevant parts of the input sequence when processing a new token.
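In the notation of the Vaswani et al. paper cited above, this computation is the scaled dot-product attention. The symbol $d_k$ (the dimensionality of the key vectors) is taken from that paper, not from the description above:

$$\mathrm{Attention}(Q, K, V) = \mathrm{softmax}\!\left(\frac{Q K^{\top}}{\sqrt{d_k}}\right) V$$

In decoder-style LLMs a causal mask is applied inside the softmax, so a token attends only to itself and to earlier positions.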

LLMs generate output tokens autoregressively, meaning one token is generated at a time, based on all preceding tokens in the sequence. Without KV caching, generating the Nth token would necessitate recalculating the K and V vectors for all N-1 previously generated tokens from scratch. This recomputation burden grows quadratically with sequence length, leading to prohibitive latency and resource consumption, especially for longer prompts or generation tasks. For instance, generating a 500-token response without KV caching would involve roughly 125,000 redundant per-token recomputations in every attention layer, each producing both a K and a V vector, before the prompt tokens are even counted.
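As a rough worked count, assume a response of $N$ generated tokens and a single attention layer, and ignore the prompt (all simplifying assumptions). Without a cache, decode step $t$ recomputes K and V for the $t-1$ tokens generated before it, so the redundant work is

$$\sum_{t=1}^{N} (t-1) = \frac{N(N-1)}{2}, \qquad N = 500 \;\Rightarrow\; \frac{500 \cdot 499}{2} = 124{,}750$$

token positions per layer. Each position needs one K and one V vector, which gives 249,500 redundant vector computations per layer. With a cache, each token's K and V are computed exactly once, for 500 computations per layer.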

KV caching elegantly solves this problem. During the forward pass, as the model computes the K and V vectors for each token, these values are stored in GPU memory (or another appropriate memory location). For subsequent decode steps within the same request, the model simply retrieves the stored K and V pairs for the existing tokens rather than recomputing them. Only the K and V vectors for the newly generated token require fresh computation. This dramatically reduces the computational load: the K and V projection work per decode step stays constant regardless of sequence length, although the attention computation over the cached entries still grows linearly with the sequence, as does the memory the cache occupies. This internal optimization is so fundamental that virtually all LLM inference frameworks enable it by default, making it a transparent yet critical component of efficient LLM operation. Industry analyses suggest that KV caching can reduce inference latency by factors of 2-5x for long sequences, making it indispensable for real-time applications.
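The mechanism can be illustrated with a toy single-head attention layer in pure Python. This is a minimal sketch, not how any production framework implements it: the class `ToyAttentionLayer`, the width `D`, the random weights, and the fixed input vectors are all assumptions made for illustration. It shows that the cached and uncached paths produce the same outputs while the cached path performs far fewer K/V projections.

```python
import math
import random

D = 4  # toy model width (assumption for illustration)

def make_matrix(rng, rows, cols):
    return [[rng.uniform(-1, 1) for _ in range(cols)] for _ in range(rows)]

def matvec(m, v):
    return [sum(w * x for w, x in zip(row, v)) for row in m]

def attend(q, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(len(q)) for k in keys]
    peak = max(scores)  # subtract the max for a numerically stable softmax
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    return [sum(w * v[i] for w, v in zip(weights, values)) / total
            for i in range(len(values[0]))]

class ToyAttentionLayer:
    def __init__(self, seed=0):
        rng = random.Random(seed)
        self.wq = make_matrix(rng, D, D)
        self.wk = make_matrix(rng, D, D)
        self.wv = make_matrix(rng, D, D)
        self.kv_projections = 0  # how many times a token's K/V was computed

    def project_kv(self, x):
        self.kv_projections += 1
        return matvec(self.wk, x), matvec(self.wv, x)

    def step_uncached(self, xs):
        """Output for the last token, recomputing K/V for every token so far."""
        kvs = [self.project_kv(x) for x in xs]
        q = matvec(self.wq, xs[-1])
        return attend(q, [k for k, _ in kvs], [v for _, v in kvs])

    def step_cached(self, x, cache):
        """Output for the new token x; only its own K/V is computed."""
        k, v = self.project_kv(x)
        cache["k"].append(k)
        cache["v"].append(v)
        return attend(matvec(self.wq, x), cache["k"], cache["v"])

def run_demo(n_tokens=6):
    rng = random.Random(1)
    tokens = [[rng.uniform(-1, 1) for _ in range(D)] for _ in range(n_tokens)]

    slow = ToyAttentionLayer()
    slow_out = [slow.step_uncached(tokens[: t + 1]) for t in range(n_tokens)]

    fast = ToyAttentionLayer()
    cache = {"k": [], "v": []}
    fast_out = [fast.step_cached(x, cache) for x in tokens]

    return slow_out, fast_out, slow.kv_projections, fast.kv_projections
```

For six tokens the uncached path performs 1 + 2 + ... + 6 = 21 K/V projections, while the cached path performs 6. Note that the cached path still attends over every stored entry at each step, which is why attention cost continues to grow with sequence length.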

Prefix Caching: Extending Efficiency Across Requests

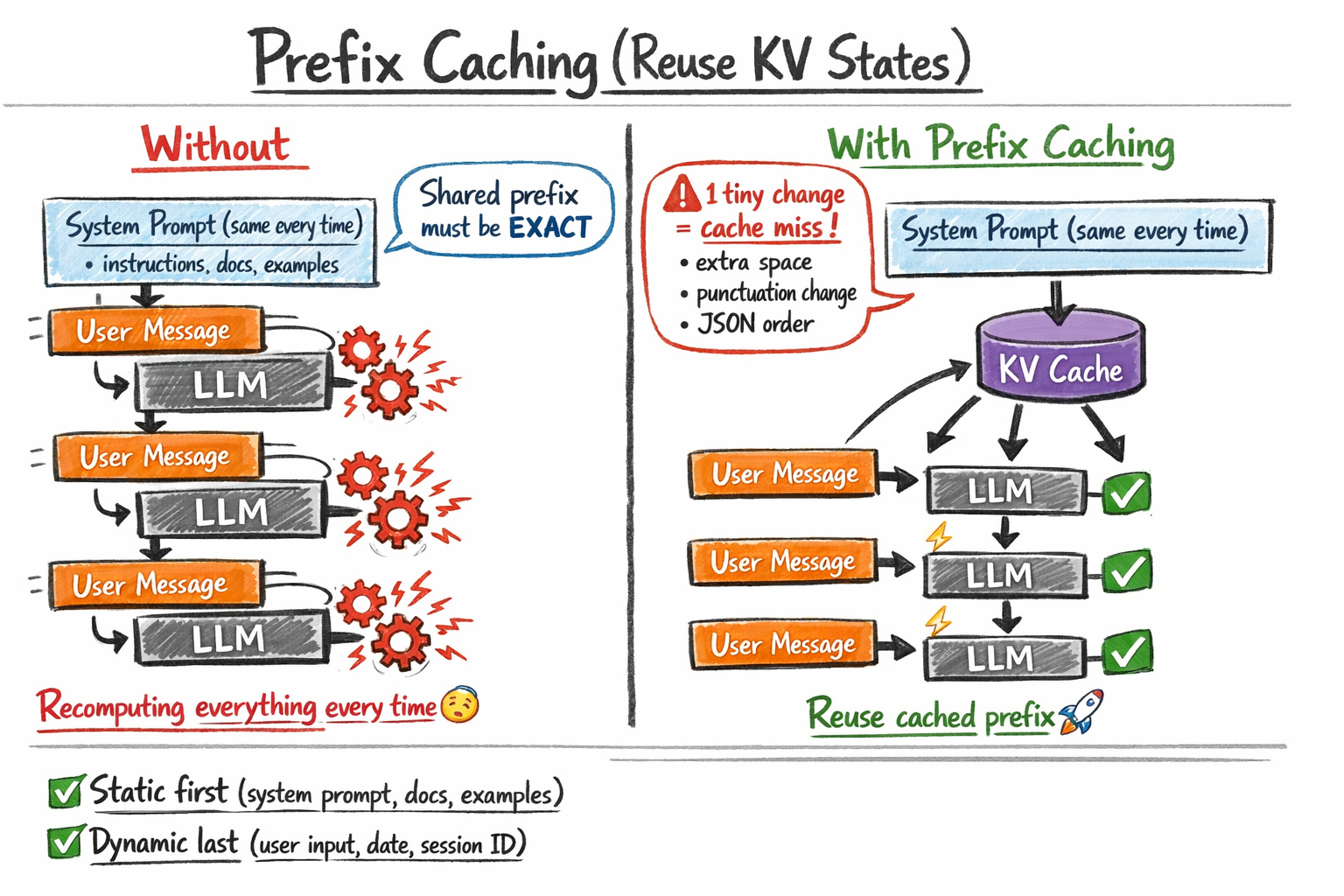

Building upon the principles of KV caching, prefix caching (also known as prompt caching or context caching) extends the reuse of attention states beyond a single request to encompass shared segments across multiple requests. This technique capitalizes on the common architectural pattern in LLM applications where a fixed "system prompt" or "context block" precedes a dynamic "user input."

The core idea is straightforward: when multiple requests share an identical leading sequence of tokens (a "prefix"), the KV states for that prefix are computed once, cached, and then reused for all subsequent requests containing that same prefix. This means that instead of re-processing the entire system prompt repeatedly, the model can load the pre-computed KV states for the shared prefix and immediately begin processing the unique user input.
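A minimal sketch of the idea, assuming a hypothetical `PrefixKVCache` class and a stand-in `fake_prefill` function in place of a real forward pass. In a real model, the states for the user's tokens also depend on the prefix; here they are faked independently to keep the example small.

```python
import hashlib

class PrefixKVCache:
    """Stores precomputed states keyed by a hash of the prefix token sequence."""

    def __init__(self, compute_kv):
        self._compute_kv = compute_kv  # hypothetical expensive prefill function
        self._store = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(prefix_tokens):
        raw = ",".join(str(t) for t in prefix_tokens).encode("utf-8")
        return hashlib.sha256(raw).hexdigest()

    def get_prefix_state(self, prefix_tokens):
        key = self._key(prefix_tokens)
        if key in self._store:
            self.hits += 1
        else:
            self.misses += 1
            self._store[key] = self._compute_kv(prefix_tokens)
        return self._store[key]

def fake_prefill(tokens):
    # Stand-in for a real forward pass: one (k, v) pair per token.
    return [(t * 2, t * 3) for t in tokens]

def handle_request(cache, system_tokens, user_tokens):
    prefix_state = cache.get_prefix_state(system_tokens)
    # Only the request-specific suffix still needs a fresh prefill.
    suffix_state = fake_prefill(user_tokens)
    return prefix_state + suffix_state
```

Two requests that share the same `system_tokens` trigger one miss followed by one hit. Changing a single token in the prefix produces a different key and therefore a miss.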

The Strict Requirement: Exact Prefix Match

A critical aspect of prefix caching is its stringent requirement for an exact match on the cached prefix, effectively token for token. Even a minor deviation (a trailing space, an extra punctuation mark, or a different character encoding) changes the token sequence, so at best the cached states can be reused only up to the first point of difference, and everything after it must be recomputed. A change near the start of the prompt therefore forfeits almost the entire cache. This strictness has profound implications for prompt engineering:

- Static Content First: System instructions, reference documents, few-shot examples, and any other unchanging context should always be placed at the beginning of the prompt.

- Dynamic Content Last: Variables that change per request, such as the user’s query, session IDs, or timestamps, must appear at the end.

- Deterministic Serialization: When injecting structured data (e.g., JSON), ensure that the serialization process is deterministic. If the order of keys in a JSON object varies, even if the underlying data is semantically identical, the cache will not hit.
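The three rules above can be sketched as a small prompt builder. The names `SYSTEM_PROMPT` and `build_prompt`, and the prompt layout itself, are illustrative assumptions. The only library behavior relied on is that `json.dumps` with `sort_keys=True` emits keys in a stable order.

```python
import json

# Hypothetical static instructions; in practice this would be the long system prompt.
SYSTEM_PROMPT = "You are a support assistant for ExampleCo."

def build_prompt(reference_data, user_query):
    # Deterministic serialization: sorted keys and fixed separators mean that
    # semantically identical data always yields the same string.
    static_block = json.dumps(
        reference_data, sort_keys=True, separators=(",", ":"), ensure_ascii=False
    )
    # Static content first, dynamic content (the user's query) last.
    return (
        f"{SYSTEM_PROMPT}\n\n"
        f"Reference data:\n{static_block}\n\n"
        f"User question: {user_query}"
    )
```

With this layout, two requests whose reference data differ only in key order share an identical prefix up to the user question, which is what a prefix cache needs in order to hit.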

Provider Implementations and Market Adoption

The substantial benefits of prefix caching have led major LLM API providers and open-source frameworks to integrate it as a core feature:

- Anthropic: Offers "prompt caching" via a `cache_control` parameter, allowing developers to explicitly designate parts of their content blocks for caching. This explicit opt-in provides fine-grained control.

- OpenAI: Implements automatic prefix caching for prompts exceeding a certain token threshold (e.g., 1024 tokens). This transparency simplifies development but still necessitates adherence to the static-prefix-first rule for optimal performance.

- Google Gemini: Provides "context caching," which is charged separately for stored cache, making it particularly cost-effective for very large, stable contexts that are reused across a high volume of requests.

- Open-source frameworks: Projects like vLLM and SGLang, designed for efficient self-hosted LLM inference, incorporate automatic prefix caching. These engines intelligently identify and manage shared prefixes in a batch of requests, transparently optimizing throughput without requiring application-level changes.
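As an illustration of the explicit opt-in style, the sketch below builds a request body with a `cache_control` marker on the system block and sends nothing over the network. The helper name `build_cached_request`, the placeholder model name, and the `max_tokens` value are assumptions. The exact field names and supported values should be checked against the provider's current API reference before use.

```python
def build_cached_request(system_prompt, user_message, model="MODEL_NAME_PLACEHOLDER"):
    """Build a request body that marks the static system block as cacheable."""
    return {
        "model": model,       # placeholder; substitute a real model identifier
        "max_tokens": 512,    # arbitrary value for illustration
        "system": [
            {
                "type": "text",
                "text": system_prompt,
                # Marks the content up to and including this block for caching.
                "cache_control": {"type": "ephemeral"},
            }
        ],
        # The dynamic, per-request content comes after the cached block.
        "messages": [{"role": "user", "content": user_message}],
    }
```

The design point is the same as in the prompt-ordering rules: the cached block holds only static content, and the per-request message sits after it.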

Industry data suggests that for applications with a consistent system prompt, prefix caching can reduce token processing costs by 30-70% and improve latency by 20-50%, depending on the length of the cached prefix and the frequency of reuse. For example, a customer support bot with a 2,000-token system prompt and an average user query of 50 tokens could see massive savings by caching the system prompt’s KV states.
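The customer support example can be turned into a back-of-the-envelope estimate. The function below is a sketch: `cached_price_factor` is a hypothetical parameter (the price of a cached input token relative to an uncached one) and does not reflect any provider's actual pricing. The estimate covers input tokens only and ignores output tokens and any cache storage or write fees.

```python
def cached_input_cost_ratio(prefix_tokens, dynamic_tokens, cached_price_factor):
    """Input cost per request on a cache hit, relative to the uncached cost."""
    total = prefix_tokens + dynamic_tokens
    return (prefix_tokens * cached_price_factor + dynamic_tokens) / total

# 2,000-token system prompt, 50-token query, as in the example above.
cacheable_share = 2000 / (2000 + 50)                      # about 0.976 of input tokens
ratio_at_half_price = cached_input_cost_ratio(2000, 50, 0.5)  # 1050 / 2050, about 0.512
```

Under the assumed factor of 0.5, input cost per cache-hit request falls to roughly 51% of the uncached figure. The realized saving depends on the actual discount, the hit rate, and how much of the bill comes from output tokens.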

Semantic Caching: Beyond Exact Matches

While prefix caching excels at handling byte-for-byte identical shared contexts, it falls short when user queries vary slightly but convey the same intent. This is where semantic caching enters the picture, operating at a higher level of abstraction by storing complete LLM input/output pairs and retrieving them based on meaning rather than exact token congruence.

Semantic caching works by leveraging embedding models and vector databases:

- Embed Input: When a new prompt arrives, an embedding model (e.g., a Sentence Transformer or a dedicated embedding LLM) converts the input prompt into a high-dimensional vector representation (an embedding) that captures its semantic meaning.

- Vector Search: This embedding is then used to query a vector database (e.g., Pinecone, Weaviate, Milvus, pgvector). The database searches for previously cached embeddings that are "semantically similar" to the new input prompt, typically using cosine similarity or other distance metrics.

- Retrieve or Generate: If a sufficiently similar cached embedding is found (i.e., the similarity score exceeds a predefined threshold), the corresponding cached response is retrieved and returned directly to the user. This entirely bypasses the expensive LLM inference call. If no sufficiently similar entry is found, the original LLM is invoked to generate a new response, which is then embedded and stored in the cache for future use.
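The three steps above can be sketched in pure Python. Everything here is a stand-in: `toy_embed` is a bag-of-words counter rather than a real embedding model (it matches shared words, not true paraphrases), the linear scan replaces a vector database, and the `SemanticCache` class and its `threshold` default are illustrative assumptions.

```python
import math
import re
from collections import Counter

def toy_embed(text):
    """Stand-in for a real embedding model: bag-of-words counts."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0

class SemanticCache:
    def __init__(self, llm, embed=toy_embed, threshold=0.8):
        self.llm = llm              # callable: prompt -> response
        self.embed = embed
        self.threshold = threshold  # minimum similarity to count as a hit
        self.entries = []           # list of (embedding, response)
        self.llm_calls = 0

    def ask(self, prompt):
        # Step 1: embed the input.
        query = self.embed(prompt)
        # Step 2: search for the most similar cached entry
        # (a linear scan stands in for a vector database query).
        best_score, best_response = 0.0, None
        for emb, response in self.entries:
            score = cosine(query, emb)
            if score > best_score:
                best_score, best_response = score, response
        # Step 3: retrieve on a hit, otherwise generate and store.
        if best_response is not None and best_score >= self.threshold:
            return best_response
        self.llm_calls += 1
        response = self.llm(prompt)
        self.entries.append((query, response))
        return response
```

The threshold is the main design lever. Set too low, it returns answers to questions that only look similar; set too high, it rarely hits. A suitable value has to be tuned against real traffic with the embedding model actually in use.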

Semantic caching introduces an additional embedding step and a vector search operation to every request. This overhead means it is not universally beneficial. Its value proposition becomes compelling when an application experiences high query volume and a significant proportion of semantically similar, repeated questions. Typical use cases include:

- FAQ Bots: Where users frequently ask the same questions using different phrasing.

- Customer Support Systems: Handling common inquiries about product features, policies, or troubleshooting steps.

- Knowledge Bases: Where users seek information that might be repeatedly requested in varied ways.

Effective semantic caching also requires careful management of cache invalidation through Time-To-Live (TTL) policies to prevent serving stale or outdated information. While adding infrastructure complexity, semantic caching can significantly reduce LLM API calls, potentially cutting costs by 20-40% for appropriate workloads and improving perceived latency by avoiding full model inference.
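A TTL policy can be sketched as follows. The class name `TTLCache` and the injectable `clock` parameter are illustrative assumptions; the clock is injectable mainly so that expiry can be tested without waiting.

```python
import time

class TTLCache:
    """Key-value store whose entries expire after a fixed number of seconds."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._store = {}  # key -> (expires_at, value)

    def put(self, key, value):
        self._store[key] = (self.clock() + self.ttl, value)

    def get(self, key):
        item = self._store.get(key)
        if item is None:
            return None
        expires_at, value = item
        if self.clock() >= expires_at:
            # Entry is stale: drop it so the caller regenerates the answer.
            del self._store[key]
            return None
        return value
```

A short TTL suits answers that depend on fast-changing data such as prices or stock levels, while a longer one suits stable policy or documentation answers. Choosing the value is a trade-off between freshness and hit rate.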

A Holistic View: Choosing the Right Caching Strategy

The most effective LLM production systems do not choose one caching strategy over another; they layer them intelligently to maximize efficiency. The decision framework for implementing these techniques is as follows:

| Use Case | Caching Strategy | Rationale | Estimated Impact (Illustrative) |
|---|---|---|---|
| All LLM applications, universally | KV Caching (Automatic) | Fundamental optimization for autoregressive decoding within a single request. | 2-5x latency reduction for long sequences |
| Applications with a long, consistent system prompt | Prefix Caching | Avoids recomputing KV states for shared instructions, context, or examples across requests. | 30-70% cost reduction, 20-50% latency improvement |
| RAG pipelines with large, stable reference documents | Prefix Caching (for the document block) | Pre-processes the reference document’s context once, speeding up subsequent user queries. | Significant latency gains, especially for frequent document reuse |
| Agent workflows with large, stable conversational context | Prefix Caching | Caches the agent’s persona and persistent dialogue history, reducing re-computation in multi-turn interactions. | Improved responsiveness, reduced token expenditure |
| High-volume applications with paraphrased questions | Semantic Caching (Layered on top of KV and Prefix) | Skips LLM invocation entirely when a semantically equivalent query has been answered before. | 20-40% API call reduction, improved perceived latency |
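The table can also be read as a simple layering rule. The function below is a sketch that encodes it directly; the function name and its three boolean parameters are illustrative assumptions, not an established API.

```python
def recommend_strategies(long_static_prefix, stable_reference_documents,
                         high_volume_paraphrases):
    """Return the caching layers suggested by the decision table above."""
    strategies = ["KV caching"]  # always on, per the first row
    if long_static_prefix or stable_reference_documents:
        strategies.append("prefix caching")
    if high_volume_paraphrases:
        strategies.append("semantic caching")
    return strategies
```

For example, an FAQ bot with a long system prompt and many rephrased questions would get all three layers, while a one-off batch job with unique prompts would get only KV caching.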

Industry Implications and Future Outlook

The widespread adoption and continuous refinement of inference caching techniques are pivotal for the broader commercial viability and sustainable growth of LLM-powered applications. By dramatically reducing operational costs and improving response times, these optimizations make advanced AI more accessible and economically feasible for businesses of all sizes.

- Cost Efficiency: For many enterprises, the high cost of LLM inference has been a barrier to scaling. Caching strategies directly address this, allowing businesses to expand their AI deployments without a proportional increase in expenditure. This democratization of LLM access is critical for driving innovation.

- Scalability: Efficient caching enables LLM systems to handle significantly higher request volumes with existing infrastructure, delaying or reducing the need for expensive hardware upgrades.

- User Experience: Lower latency translates directly into more responsive applications, enhancing user satisfaction and engagement. For real-time conversational AI, this is a game-changer.

- Environmental Impact: Reduced computational load means lower energy consumption, aligning with broader sustainability goals in the tech industry. As AI models grow larger, efficient inference becomes an ecological imperative.

The trajectory of LLM development suggests an ongoing focus on efficiency. Future innovations may include more sophisticated caching algorithms that handle partial matches for prefix caching, adaptive semantic caching that dynamically adjusts similarity thresholds, and even hardware-level optimizations specifically designed to accelerate caching operations. The integration of these techniques is not merely a technical detail; it is a strategic imperative that underpins the next generation of intelligent applications.

In conclusion, inference caching stands as a critical pillar in the operational strategy for large language models. It is a set of complementary tools – KV caching, prefix caching, and semantic caching – each addressing specific forms of computational redundancy. For most production applications, enabling prefix caching for system prompts offers the highest immediate leverage. Subsequently, layering semantic caching can provide further significant gains for applications with appropriate query patterns and volume. When applied thoughtfully, with attention to cache design, invalidation policies, and relevance, these techniques profoundly enhance system efficiency, reduce operational costs, and improve the user experience without compromising the quality or capabilities of large language models. The continuous evolution of these caching mechanisms will undoubtedly be instrumental in shaping the future of scalable and accessible AI.