
Artificial Intelligence

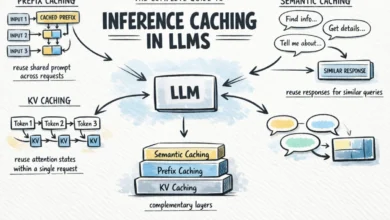

Optimizing Large Language Model Operations: A Deep Dive into Inference Caching Strategies for Enhanced Efficiency and Cost Reduction

The burgeoning adoption of large language models (LLMs) across industries has ushered in an era of unprecedented computational demands, driving…
