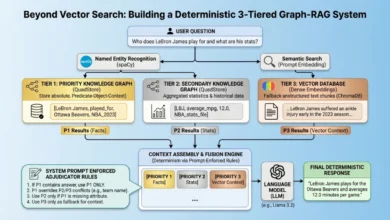

Building Efficient Long-Context Retrieval-Augmented Generation Systems in the Era of Million-Token LLMs

The landscape of Retrieval-Augmented Generation (RAG) is changing as the context windows of Large Language Models (LLMs) grow rapidly. For years, RAG rested on segmenting documents into small, manageable chunks, embedding them, and retrieving only the most relevant snippets. This approach was necessitated by the limits and high computational cost of earlier LLM context windows, which typically ranged from 4,000 to 32,000 tokens. Developers and researchers tuned chunking strategies carefully, wary of "information overload" and prohibitive expense. Recent long-context models, such as Google’s Gemini Pro and Anthropic’s Claude Opus, which advertise context windows of up to roughly 1 million tokens in some versions and configurations, have removed many of these constraints, presenting both new opportunities and new challenges for building robust and efficient RAG systems.

The theoretical ability to feed an entire compendium of novels into a single prompt signifies a paradigm shift, moving beyond the fragmented approach of the past. This expanded capacity, while liberating, introduces two primary hurdles: the persistent problem of attention loss within vast contexts, often termed the "Lost in the Middle" phenomenon, and the substantial increase in computational latency and operational costs associated with processing such massive token counts. This article delves into five practical, modern techniques designed to navigate these complexities, enabling developers to construct RAG systems that are not only scalable but also remarkably precise and cost-effective in the age of long-context LLMs.

The Evolution of RAG and LLM Context Windows

The journey of RAG began as a crucial innovation to mitigate the "hallucination" problem inherent in early LLMs, which often generated factually incorrect or nonsensical information. By grounding LLM responses in external, verifiable knowledge bases, RAG significantly improved the reliability and accuracy of AI-generated content. Initially, the focus was heavily on efficient indexing and retrieval of small, highly relevant text passages. Vector databases became a cornerstone, allowing semantic similarity searches to pinpoint information related to a user’s query.

Historically, the constraint of limited context windows meant that even with RAG, the amount of information an LLM could process at any given time was finite. This led to complex strategies involving multiple retrieval steps, summarization of retrieved content, and sophisticated prompt engineering to fit the most critical information into the LLM’s working memory. The breakthroughs in model architectures and hardware optimization over the past two years have radically altered this dynamic. Models like OpenAI’s GPT-4 Turbo, with its 128k context window, paved the way, but it is the recent leap to million-token contexts by models from Google, Anthropic, and others that truly redefines the RAG landscape. This progression, while exciting, doesn’t negate the need for intelligent retrieval; rather, it reframes it, shifting the emphasis from mere retrieval to intelligent context management within an expanded processing capacity.

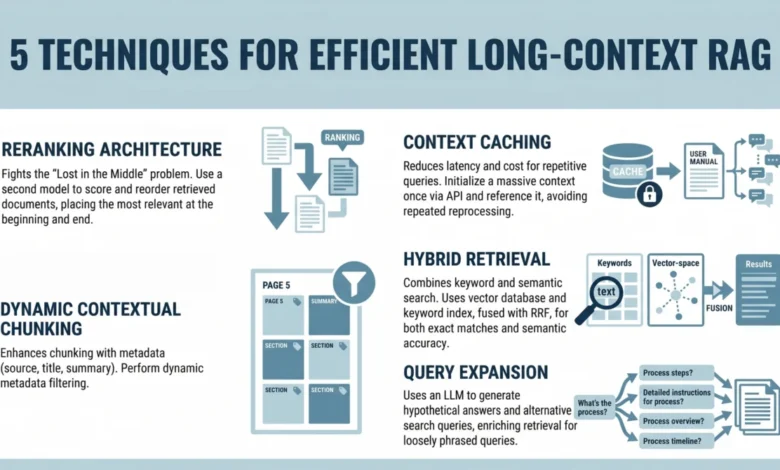

1. Implementing a Reranking Architecture to Combat "Lost in the Middle"

One of the most significant challenges identified with increasingly large context windows is the "Lost in the Middle" problem. A 2023 study by researchers at Stanford University, UC Berkeley, and Samaya AI ("Lost in the Middle: How Language Models Use Long Contexts") documented this limitation in how LLMs use long inputs. The authors found that when LLMs are presented with long sequences of text, their performance in extracting relevant information is markedly higher when that information sits at the beginning or the very end of the input. Conversely, information buried in the middle of a lengthy context is substantially more likely to be overlooked, misinterpreted, or ignored by the model. This poses a serious threat to the reliability of long-context RAG systems, because even perfectly retrieved information is useless if the LLM fails to attend to it.

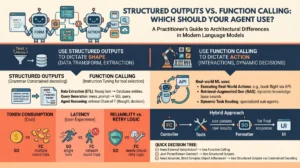

To counteract this, the strategic introduction of a reranking step becomes imperative. Instead of directly injecting retrieved documents into the LLM’s prompt in their original order, a reranking model is employed to re-evaluate the relevance of the initial set of retrieved documents and reorder them. The typical developer workflow involves:

- Initial Retrieval: A primary retrieval mechanism (e.g., semantic search via a vector database) fetches a larger pool of potentially relevant documents (e.g., top 50-100 results).

- Reranking: A specialized reranker model, often a smaller, highly optimized cross-encoder (such as a fine-tuned BERT-style model) or a dedicated reranking service (e.g., Cohere Rerank), scores each (query, document) pair jointly. Unlike the bi-encoder embeddings used for the initial retrieval, which encode the query and the document separately, the cross-encoder reads both together and assigns a new, more precise relevance score to each document.

- Refined Selection: Based on these new scores, only the top N most relevant documents (e.g., top 5-10) are selected.

- Strategic Placement: These highly relevant documents are then strategically placed at the beginning or end of the LLM’s prompt, ensuring they receive maximum attention.

This strategic placement is crucial. By front-loading or back-loading the most pertinent information, developers improve the chances that the LLM will focus on and correctly use the critical data, thereby mitigating the "Lost in the Middle" effect and improving the accuracy and coherence of the generated responses. Reranking has become a common component of production RAG pipelines, although the size of the quality gain depends on the retriever, the reranker, and the dataset, and is best measured on your own evaluation set.
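The rerank-then-place workflow above can be sketched in a few lines of Python. This is a minimal illustration, not a production implementation: `overlap_score` is a toy stand-in for a real cross-encoder or reranking service, and the function names `rerank` and `place_at_edges` are hypothetical names chosen for this example.

```python
from typing import Callable, List


def overlap_score(query: str, doc: str) -> float:
    """Toy relevance score: fraction of query terms found in the document.

    Assumption: this stands in for a real cross-encoder or reranking API.
    """
    q_terms = set(query.lower().split())
    d_terms = set(doc.lower().split())
    return len(q_terms & d_terms) / len(q_terms) if q_terms else 0.0


def rerank(
    query: str,
    docs: List[str],
    score_fn: Callable[[str, str], float] = overlap_score,
    top_n: int = 5,
) -> List[str]:
    """Score every (query, doc) pair and keep the top_n, best first."""
    ranked = sorted(docs, key=lambda d: score_fn(query, d), reverse=True)
    return ranked[:top_n]


def place_at_edges(ranked: List[str]) -> List[str]:
    """Reorder best-first docs so the strongest sit at the start and end.

    ranked[0] goes first, ranked[1] goes last, ranked[2] second, and so on,
    which leaves the weakest documents in the middle of the prompt.
    """
    front: List[str] = []
    back: List[str] = []
    for i, doc in enumerate(ranked):
        (front if i % 2 == 0 else back).append(doc)
    return front + back[::-1]


docs = [
    "billing overview",
    "how to reset your password",
    "password policy",
    "office hours",
]
top = rerank("reset password", docs, top_n=3)
prompt_order = place_at_edges(top)
```

Here `top` is ordered best-first, while `prompt_order` moves the second-best document to the end of the prompt. Swapping `overlap_score` for a real model only requires passing a different `score_fn`.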

2. Leveraging Context Caching for Repetitive Queries

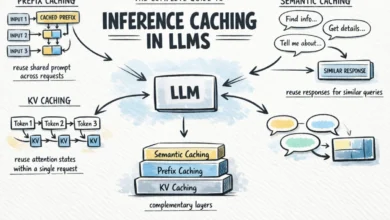

The sheer scale of million-token context windows, while powerful, introduces considerable latency and cost overheads. The repeated processing of hundreds of thousands, or even millions, of tokens for similar or identical queries is inherently inefficient. Context caching emerges as a robust solution to address this challenge, optimizing both performance and operational expenditure.

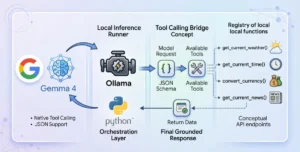

Conceptually, context caching functions by initializing and maintaining a persistent context for the LLM, effectively allowing the model to "remember" and reuse previously processed information. This is particularly relevant for the LLM’s internal Key-Value (KV) cache, which stores the attention keys and values computed for each token in the context. By caching these computations, subsequent queries that build upon or re-engage with a similar knowledge base can bypass redundant processing. The typical implementation involves:

- Persistent Context Initialization: For applications with a relatively static knowledge base or frequently accessed documents, the core information can be loaded into the LLM’s context once. The resulting KV cache is then stored.

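- Query-Specific Augmentation: When a user query arrives, only the query-specific information (e.g., a few retrieved documents pertaining directly to the query) is appended to the cached context. Because the cache covers a prefix of the prompt, the static material has to come first and stay unchanged between requests; anything that varies per query belongs after it.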

- Efficient Processing: The LLM then processes this augmented context, leveraging the pre-computed KV cache for the persistent portion, significantly reducing the computational load and inference time.

This approach is especially valuable for applications such as customer support chatbots operating on a fixed knowledge base, interactive documentation portals, or internal enterprise search tools where users frequently ask about the same core set of policies, products, or procedures. For instance, a customer support bot might cache an entire product manual. When a user asks a series of questions about that product, the LLM only needs to process the new question and any specific retrieved snippets, not the entire manual each time. Savings depend heavily on the provider’s pricing and on how much of each prompt is shared. Reductions in inference cost and latency on the order of 30-50% are sometimes cited for highly repetitive query patterns, but the figure should be measured against real traffic before it is relied on.
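To make the saving concrete, the sketch below simulates the bookkeeping only. It does not touch a real KV cache or any provider API: `PrefixCacheSim` and `count_tokens` are hypothetical helpers, tokens are approximated by whitespace splitting, and the model ignores the fact that real providers may still bill cached tokens (typically at a reduced rate) and expire caches after some time.

```python
import hashlib
from dataclasses import dataclass, field
from typing import Dict


def count_tokens(text: str) -> int:
    """Crude whitespace count; a real system would use the model tokenizer."""
    return len(text.split())


@dataclass
class PrefixCacheSim:
    """Tracks which static prefixes have already been processed once."""

    seen: Dict[str, int] = field(default_factory=dict)
    tokens_processed: int = 0

    def run(self, static_context: str, query_context: str) -> int:
        """Return how many tokens need fresh processing for this call."""
        key = hashlib.sha256(static_context.encode("utf-8")).hexdigest()
        fresh = count_tokens(query_context)
        if key not in self.seen:
            # First request: the static prefix is processed and then cached.
            self.seen[key] = count_tokens(static_context)
            fresh += self.seen[key]
        self.tokens_processed += fresh
        return fresh


manual = "word " * 1000  # stand-in for a 1,000-token product manual
sim = PrefixCacheSim()
first = sim.run(manual, "how do I reset it")      # manual + question
second = sim.run(manual, "what is the warranty")  # question only
```

In this toy model the first call processes the manual plus the question, and every later call against the same manual processes only the new question. Changing even one character of the static context produces a different key, which mirrors the requirement that the cached prefix stay identical.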

3. Using Dynamic Contextual Chunking with Metadata Filters

Even with expansive context windows, the principle of relevance remains paramount. Simply increasing the context size without intelligent filtering does not eliminate noise; in fact, it can exacerbate the "Lost in the Middle" problem by lowering the signal-to-noise ratio. Dynamic contextual chunking, augmented with structured metadata filters, refines traditional document segmentation so that the LLM receives the information it needs and little else.

Traditional chunking often involves fixed-size segments, which can arbitrarily split logically coherent paragraphs or sentences. Dynamic contextual chunking, by contrast, adapts to the semantic and structural characteristics of the document, creating chunks that are inherently more meaningful. This approach is further refined by embedding rich metadata alongside each chunk:

- Semantic Segmentation: Instead of fixed-size chunks, documents are segmented based on semantic boundaries (e.g., paragraphs, sections, topics) or structural elements (e.g., headings, bullet points). This ensures that each chunk represents a coherent piece of information.

- Metadata Enrichment: Each chunk is then tagged with relevant metadata. This can include the document title, author, publication date, document type (e.g., policy, research paper, FAQ), section headers, keywords, and even security clearances.

- Filtered Retrieval: During retrieval, queries can leverage these metadata tags to pre-filter or post-filter results. For example, a user query about "company policy" might be augmented with a filter to only retrieve chunks from documents tagged as "policy" and published after a certain date.

- Dynamic Chunk Size: The system can dynamically adjust the size of retrieved chunks based on the complexity of the query or the LLM’s current context capacity, ensuring optimal information density.

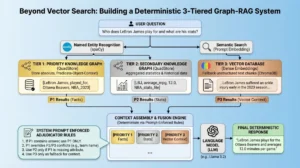

This methodology significantly reduces the amount of irrelevant context presented to the LLM, thereby improving precision and reducing computational waste. By focusing on highly relevant and contextually complete chunks, dynamic contextual chunking helps the LLM home in on the answer more effectively, preventing cognitive overload and enhancing the quality of generated responses. This technique is particularly powerful when integrated with graph databases or knowledge graphs, where relationships between entities and documents are explicitly modeled, allowing for even more granular and contextually aware retrieval.
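As a minimal sketch of semantic segmentation plus metadata filtering, the example below splits a document on blank lines, tags each chunk, and filters by document type and date. The `Chunk` fields and the convention that a single line ending in a colon is a section heading are assumptions made for illustration; a real pipeline would use the document’s actual structure and a vector store’s own filter syntax.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Chunk:
    text: str
    doc_type: str
    published: date
    section: str


def chunk_by_paragraph(doc_text: str, doc_type: str, published: date) -> List[Chunk]:
    """Split on blank lines and attach metadata to every paragraph.

    Assumed convention: a one-line block ending in ':' is a section heading.
    """
    chunks: List[Chunk] = []
    section = ""
    for block in doc_text.split("\n\n"):
        block = block.strip()
        if not block:
            continue
        if block.endswith(":") and "\n" not in block:
            section = block.rstrip(":")  # remember heading, do not emit it
            continue
        chunks.append(Chunk(block, doc_type, published, section))
    return chunks


def filter_chunks(
    chunks: List[Chunk],
    doc_type: Optional[str] = None,
    published_after: Optional[date] = None,
) -> List[Chunk]:
    """Keep only chunks whose metadata matches every filter that is set."""
    return [
        c
        for c in chunks
        if (doc_type is None or c.doc_type == doc_type)
        and (published_after is None or c.published > published_after)
    ]


policy_text = (
    "Leave policy:\n\nEmployees get 20 days.\n\n"
    "Carry-over is capped at 5 days.\n\n"
    "Expenses:\n\nSubmit receipts within 30 days."
)
policy = chunk_by_paragraph(policy_text, "policy", date(2024, 3, 1))
faq = chunk_by_paragraph("Where is the office?\n\nDowntown.", "faq", date(2023, 1, 1))
recent_policy = filter_chunks(
    policy + faq, doc_type="policy", published_after=date(2024, 1, 1)
)
```

The filter runs before any similarity search in this sketch (pre-filtering); applying it to search results instead would be the post-filtering variant mentioned above.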

4. Combining Keyword and Semantic Search with Hybrid Retrieval

While vector search has revolutionized information retrieval by capturing the semantic meaning and conceptual similarity between queries and documents, it possesses a notable limitation: it can occasionally miss exact keyword matches. This is especially problematic for technical queries, product codes, proper nouns, or specific factual lookups where precise lexical matching is critical. Hybrid retrieval addresses this by judiciously combining the strengths of both semantic and keyword-based search mechanisms.

Hybrid search leverages the best of both worlds to provide a more comprehensive and robust retrieval strategy:

- Semantic Search: Utilizes embedding models to convert queries and document chunks into high-dimensional vectors, enabling the retrieval of semantically similar content, even if the exact keywords are not present. This excels at understanding intent and conceptual relevance.

- Keyword Search: Employs traditional lexical search algorithms (e.g., BM25, TF-IDF) to find exact or near-exact keyword matches. This is indispensable for specific terms, codes, or phrases where precise recall is non-negotiable.

- Fusion Algorithm: The results from both semantic and keyword searches are then combined using a sophisticated fusion algorithm, such as Reciprocal Rank Fusion (RRF). RRF aggregates the ranked lists from each search method, giving higher weight to documents that appear high in both lists, producing a single, optimized list of retrieved documents.

This integrated approach gives the RAG system both the nuanced understanding of semantic relevance and the pinpoint accuracy of lexical matching. For example, a query like "How do I reset my account password?" benefits from semantic search, which can surface documents about user authentication even when they use different wording. A query like "Error code 404", by contrast, depends on keyword search to find the exact documentation for that error. Hybrid retrieval handles both kinds of query, improving the robustness of the RAG system across a diverse range of user intents and information needs. Hybrid approaches are frequently reported to outperform purely semantic or purely keyword-based retrieval on recall and precision, though the margin varies by dataset and should be confirmed on your own data.
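Reciprocal Rank Fusion itself is short enough to show in full. Each document receives a score of 1 / (k + rank) from every list it appears in, and the scores are summed. The sketch below assumes the two retrievers have already returned ranked lists of document IDs; the IDs are made up, and k = 60 is a commonly used default rather than a value taken from this article.

```python
from collections import defaultdict
from typing import Dict, List, Sequence


def reciprocal_rank_fusion(
    rankings: Sequence[Sequence[str]], k: int = 60
) -> List[str]:
    """Fuse several best-first rankings into one list of document IDs.

    score(doc) = sum over rankings of 1 / (k + rank), with rank starting at 1.
    Documents missing from a ranking simply contribute nothing for it.
    """
    scores: Dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda d: scores[d], reverse=True)


semantic_hits = ["d1", "d2", "d3"]  # hypothetical vector-search results
keyword_hits = ["d3", "d1", "d4"]   # hypothetical BM25 results
fused = reciprocal_rank_fusion([semantic_hits, keyword_hits])
```

In this example `d1` and `d3` appear in both lists and therefore rise above `d2` and `d4`, which each appear in only one. Because RRF uses ranks rather than raw scores, the two retrievers do not need comparable score scales.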

5. Applying Query Expansion with Summarize-Then-Retrieve

User queries are frequently imperfect. They can be brief, ambiguous, poorly phrased, or implicitly refer to information not explicitly stated. This discrepancy between how users articulate their needs and how information is structured within documents often hinders effective retrieval. Query expansion techniques are designed to bridge this gap, enhancing the initial user query to better align with potential relevant content.

One powerful form of query expansion is the "Summarize-Then-Retrieve" method, which leverages a lightweight LLM to generate more comprehensive and diverse search queries:

- Initial Query Analysis: The user’s original query is first fed into a smaller, cost-effective LLM.

- Hypothetical Query Generation: This LLM is prompted to generate several alternative, more detailed, or hypothetical search queries that are semantically related to the original. For example, if a user asks, "What do I do if the fire alarm goes off?", the LLM might generate hypotheticals such as:

  - "Emergency procedures for fire alarm activation"

  - "Fire safety evacuation protocols"

  - "Actions to take during a building fire"

  - "What to do when the smoke detector sounds"

  - "Contact information for building emergency services"

- Multi-Query Retrieval: Each of these generated hypothetical queries, along with the original query, is then used to perform individual retrieval operations against the knowledge base.

- Result Aggregation: The results from all these retrieval operations are aggregated and then typically passed through a reranking step (as discussed in Section 1) to select the most relevant documents for the final LLM prompt.

This method significantly improves performance on inferential and loosely phrased queries, as it casts a wider net in the retrieval phase. By exploring multiple facets of a user’s potential intent, query expansion increases the likelihood of finding relevant documents that might have been missed by a single, literal interpretation of the original query. This technique is particularly beneficial for complex question-answering systems where user input can be highly varied and require deeper contextual understanding to connect to the stored information. Other query expansion techniques include synonym expansion, rephrasing, and explicit intent extraction.
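The multi-query retrieval and aggregation steps can be sketched as follows. The expansion and retrieval functions are injected as callables so that the example stays runnable: `toy_expand` stands in for the lightweight LLM call and `toy_retrieve` for a vector or keyword search, and both, along with the tiny index, are hypothetical placeholders rather than real APIs.

```python
from typing import Callable, Dict, List


def multi_query_retrieve(
    query: str,
    expand: Callable[[str], List[str]],
    retrieve: Callable[[str, int], List[str]],
    per_query_k: int = 3,
) -> List[str]:
    """Retrieve for the original query and each expansion, then deduplicate.

    Results keep first-seen order; a reranker would normally reorder them.
    """
    queries = [query] + [q for q in expand(query) if q != query]
    seen = set()
    merged: List[str] = []
    for q in queries:
        for doc_id in retrieve(q, per_query_k):
            if doc_id not in seen:
                seen.add(doc_id)
                merged.append(doc_id)
    return merged


# Hypothetical stand-ins for the LLM expansion step and the search backend.
INDEX: Dict[str, List[str]] = {
    "fire alarm": ["doc_alarm"],
    "evacuation": ["doc_evac", "doc_alarm"],
    "emergency": ["doc_contacts"],
}


def toy_expand(query: str) -> List[str]:
    return [
        "Fire safety evacuation protocols",
        "Contact information for building emergency services",
    ]


def toy_retrieve(query: str, k: int) -> List[str]:
    hits: List[str] = []
    for term, doc_ids in INDEX.items():
        if term in query.lower():
            hits.extend(doc_ids)
    return hits[:k]


results = multi_query_retrieve(
    "What do I do if the fire alarm goes off?", toy_expand, toy_retrieve
)
```

The original query alone finds only the alarm document here; the two expansions add the evacuation and contacts documents. The merged list is what would then be handed to the reranking step.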

Conclusion: Reshaping RAG for the Long-Context Era

The emergence of million-token context windows in modern LLMs marks an inflection point in the development of Retrieval-Augmented Generation systems. Far from rendering RAG obsolete, this advancement reshapes its purpose and methodology. While the need for aggressive chunking may diminish, the new challenges of managing vast contexts (attention distribution, increased latency, and higher operational costs) demand a multi-faceted approach.

The techniques outlined here are becoming essential components of scalable, accurate, and cost-effective RAG systems rather than optional enhancements: reranking to combat the "Lost in the Middle" problem, context caching for efficiency, dynamic contextual chunking with metadata for precision, hybrid retrieval for comprehensive search, and query expansion for robust understanding. The objective is no longer only to provide more context, but to ensure that the LLM consistently and reliably focuses on the most relevant information within that expanded context. As LLM capabilities continue to evolve, RAG will adapt with them across industries from healthcare to finance and beyond. Its future lies in intelligent context orchestration: deciding what goes into the window, in what order, and at what cost.