Consistent Hashing: A Deep Dive into Distributed System Scalability

In the rapidly evolving landscape of distributed systems, ensuring seamless scalability and efficient data distribution is paramount. As websites and applications grow in user traffic and data volume, simple partitioning and load-balancing schemes often break down, causing performance degradation and service outages. Consistent hashing addresses this problem: it lets a distributed system add or remove servers while moving only a small fraction of its data.

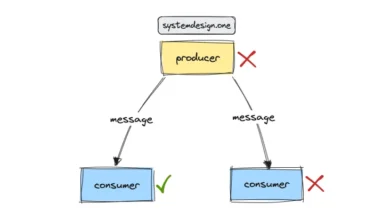

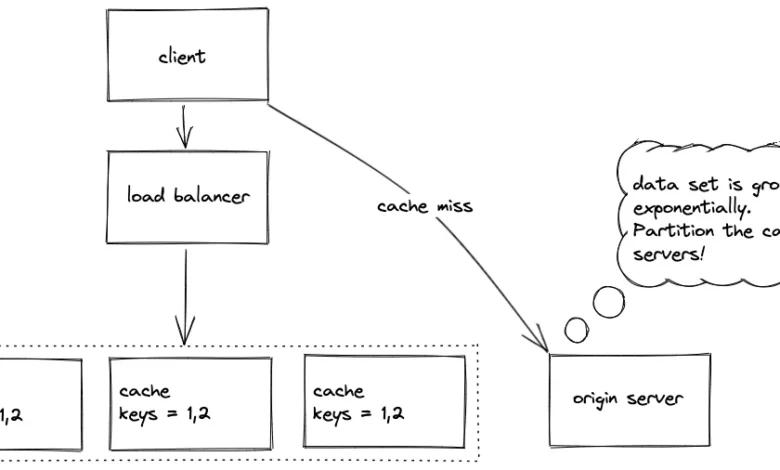

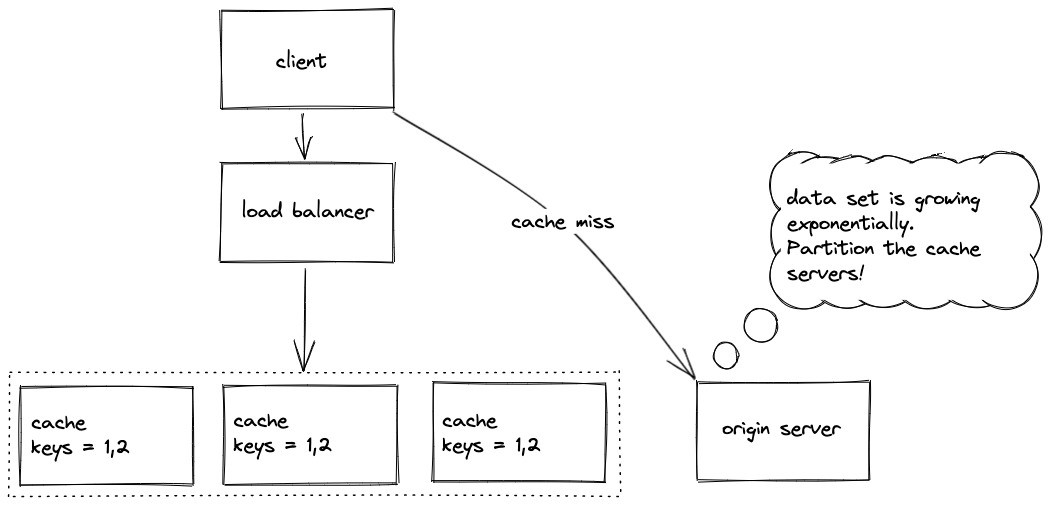

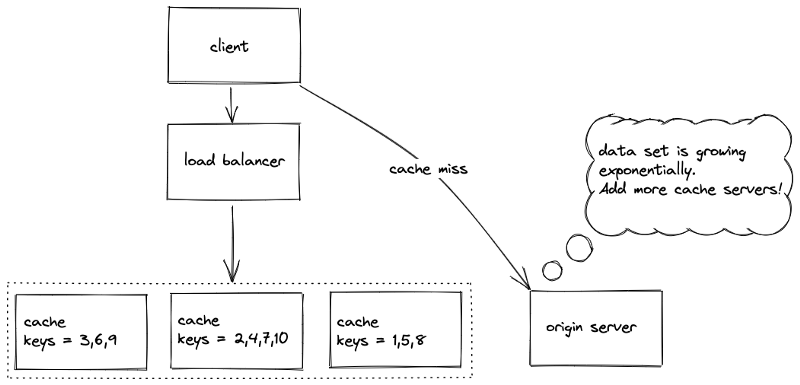

The core challenge addressed by consistent hashing comes from scaling systems that rely on simple hashing for data distribution. When data is partitioned across multiple servers, a common approach is to use a hash function to map each data key to a specific server. However, this method has a significant problem: any change in the number of servers, whether adding one to handle increased load or removing one after a failure, remaps most keys to different servers. This massive reshuffling can overwhelm the system, causing a wave of cache misses, increased load on origin servers, and ultimately a degraded user experience.

The Problem with Traditional Hashing

To understand the elegance of consistent hashing, it’s crucial to first examine the limitations of simpler partitioning strategies.

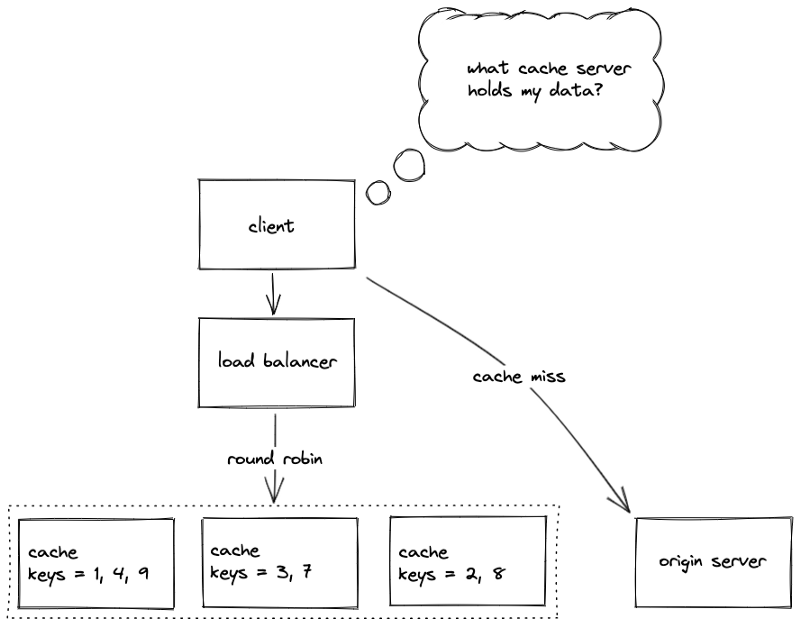

Random Assignment: The Illusion of Simplicity

One intuitive approach is to randomly assign data objects to available cache servers. While this can lead to a relatively uniform distribution of data for a large dataset, it presents a significant drawback for clients. Without a deterministic mapping, clients would struggle to identify which server holds a specific piece of data, requiring a central lookup mechanism that itself becomes a bottleneck. This lack of predictability makes random assignment impractical for dynamic, large-scale systems.

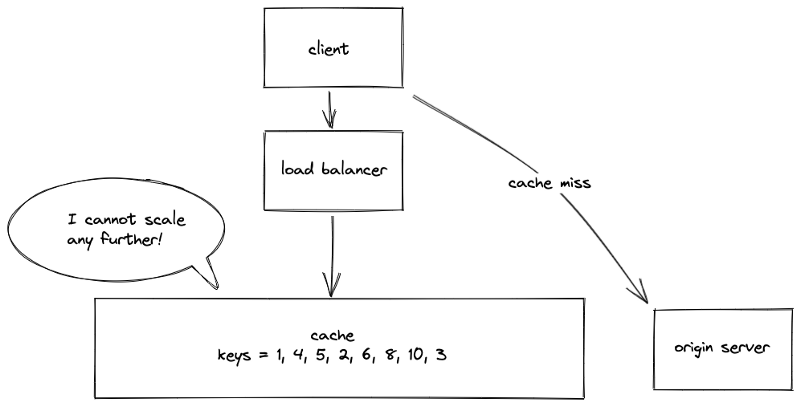

Single Global Cache: A Bottleneck Waiting to Happen

Another rudimentary strategy involves storing the entire dataset on a single global cache server. This approach simplifies data retrieval, as clients always know where to find the data. However, it completely negates the benefits of distribution and scalability. A single server quickly becomes a performance bottleneck, and its failure leads to complete service unavailability, making it entirely unsuitable for systems expecting significant or dynamic loads.

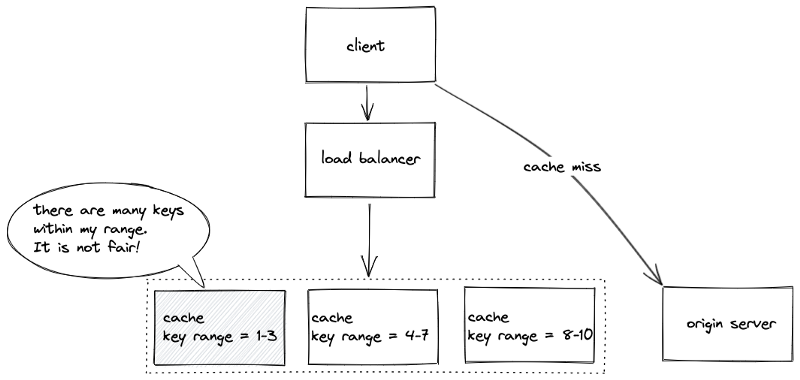

Key Range Partitioning: Uneven Distribution Concerns

Key range partitioning divides the data based on ranges of keys. For instance, keys starting with ‘A’ through ‘F’ might go to server 1, ‘G’ through ‘L’ to server 2, and so on. This method offers easier data retrieval for clients. However, it suffers from potential uneven data distribution. If certain key ranges are inherently more popular or contain more data, the servers responsible for those ranges can become overloaded, while others remain underutilized. This imbalance hinders effective scaling.
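A minimal Python sketch of the lookup, assuming the split points from the example above continue as 'M' through 'R' to server 3 and 'S' through 'Z' to server 4 (those boundaries and the `range_server` name are hypothetical). Clients find a key's server with a binary search over the boundaries, but nothing prevents one range from attracting most of the keys.

```python
import bisect
from collections import Counter

# Hypothetical split points: 'A'-'F' -> server 1, 'G'-'L' -> server 2,
# 'M'-'R' -> server 3, 'S'-'Z' -> server 4
BOUNDARIES = ["G", "M", "S"]

def range_server(key: str) -> int:
    """Return the 1-based server whose range contains the key's first letter."""
    return bisect.bisect_right(BOUNDARIES, key[:1].upper()) + 1

# Lookups are trivial for clients, but popular prefixes pile up on one server.
names = ["alice", "bob", "carol", "sam", "sara", "steve", "sophia", "zoe"]
print(Counter(range_server(n) for n in names))
```

With these sample names, server 4 receives five of the eight keys, server 1 receives three, and servers 2 and 3 receive none.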

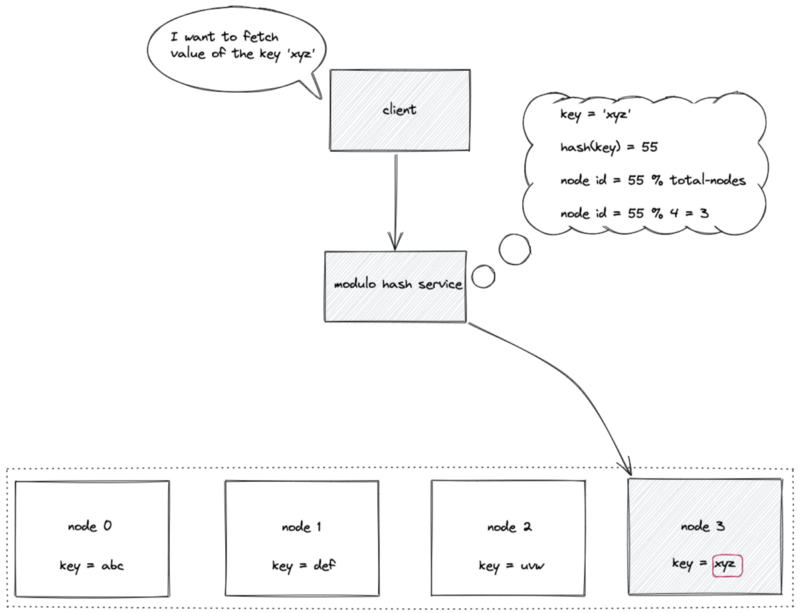

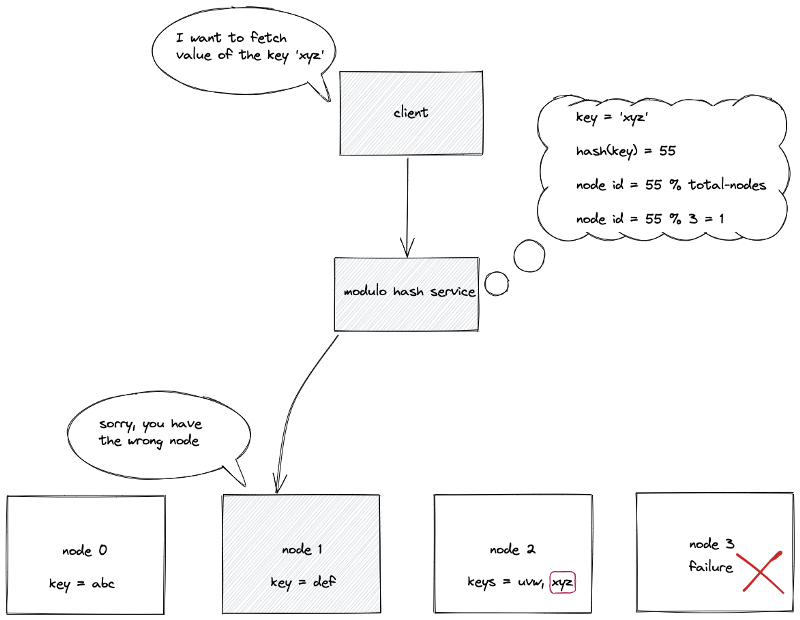

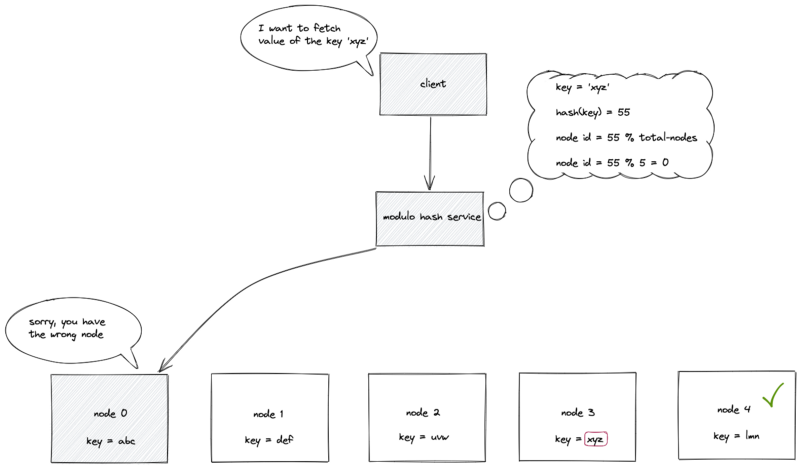

Static Hash Partitioning: The Rigidity Problem

Static hash partitioning, usually implemented with the modulo operator (node ID = hash(key) mod N, where N is the number of nodes), gives a fixed mapping between keys and nodes. Lookups are efficient, with constant O(1) time complexity, but the scheme's rigidity is its undoing. When a node is added or removed, N changes, and with it the result of the modulo operation for most keys. Most of the data must then be rehashed and redistributed, which significantly affects system availability and performance. Figures 7 and 8 illustrate the disruption caused by node failures or additions in static hash partitioning, where existing mappings break and data must be remapped. The resulting cache misses and strain on origin servers (Figure 9) show why this approach is impractical for dynamic environments.
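To put a number on "most keys": when a cluster grows from N = 4 to N = 5 nodes, a key keeps its node only if hash(key) mod 4 equals hash(key) mod 5. Because 4 and 5 are coprime, that happens exactly when hash(key) mod 20 is 0, 1, 2, or 3, so about 80% of keys move. In general, growing from N to N + 1 nodes moves a fraction N/(N + 1) of the keys. The Python sketch below checks this empirically; the helper names (`stable_hash`, `mod_node`, `moved_fraction`) and the `user:<i>` keys are hypothetical, and MD5 is used only because Python's built-in `hash()` is randomized per process.

```python
import hashlib

def stable_hash(key: str) -> int:
    """64-bit hash that is stable across runs (built-in hash() is salted per process)."""
    return int.from_bytes(hashlib.md5(key.encode("utf-8")).digest()[:8], "big")

def mod_node(key: str, n: int) -> int:
    """Static hash partitioning: node ID = hash(key) mod N."""
    return stable_hash(key) % n

def moved_fraction(keys, n_before: int, n_after: int) -> float:
    """Fraction of keys whose node changes when the cluster grows or shrinks."""
    moved = sum(1 for k in keys if mod_node(k, n_before) != mod_node(k, n_after))
    return moved / len(keys)

keys = [f"user:{i}" for i in range(10_000)]
print(f"4 -> 5 nodes: {moved_fraction(keys, 4, 5):.1%} of keys move")
print(f"4 -> 3 nodes: {moved_fraction(keys, 4, 3):.1%} of keys move")
```

Shrinking from 4 to 3 nodes behaves the same way: a key stays put only for 3 of the 12 residues of hash(key) mod 12, so roughly 75% of keys move.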

Introducing Consistent Hashing: A Paradigm Shift

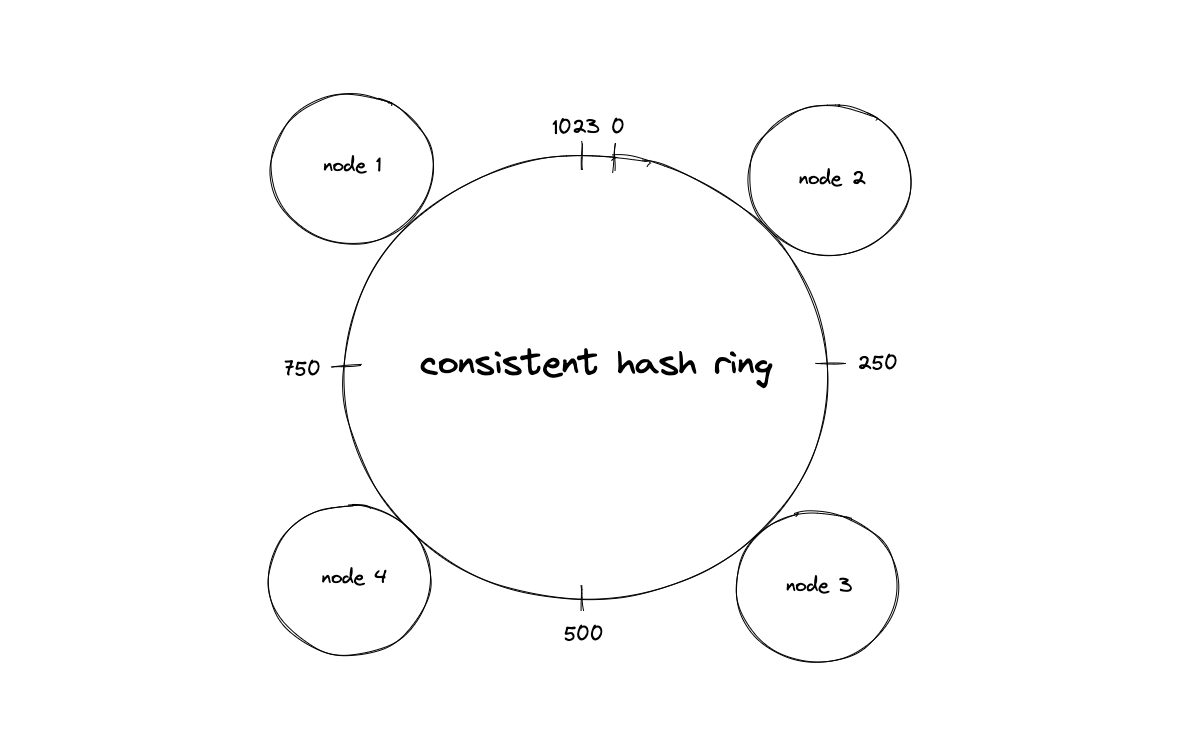

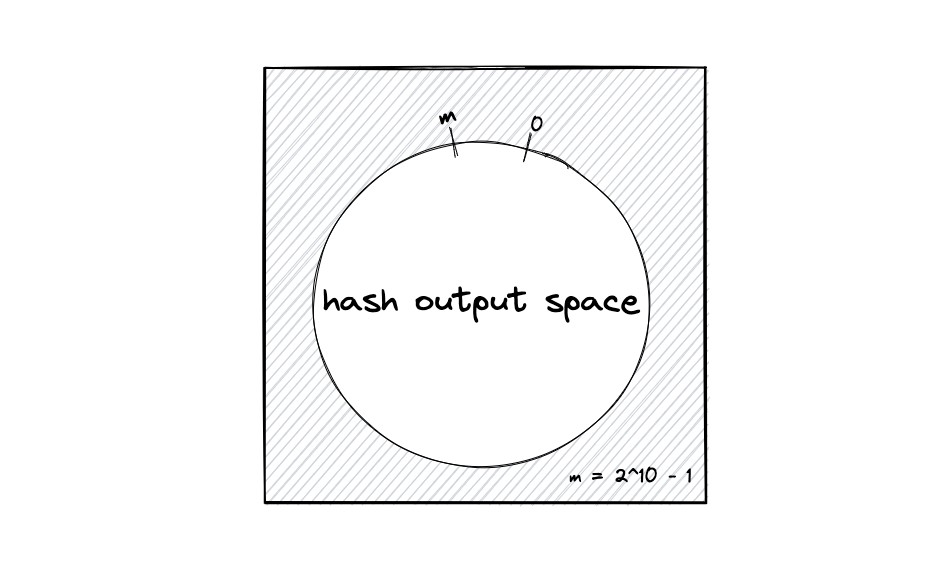

Consistent hashing fundamentally alters how data is distributed by mapping both data keys and server identifiers onto a virtual ring, often referred to as a hash ring. This innovative approach, first proposed in the context of distributed caching protocols for the World Wide Web, aims to minimize the number of keys that need to be remapped when the number of nodes in the system changes.

The Hash Ring Mechanism

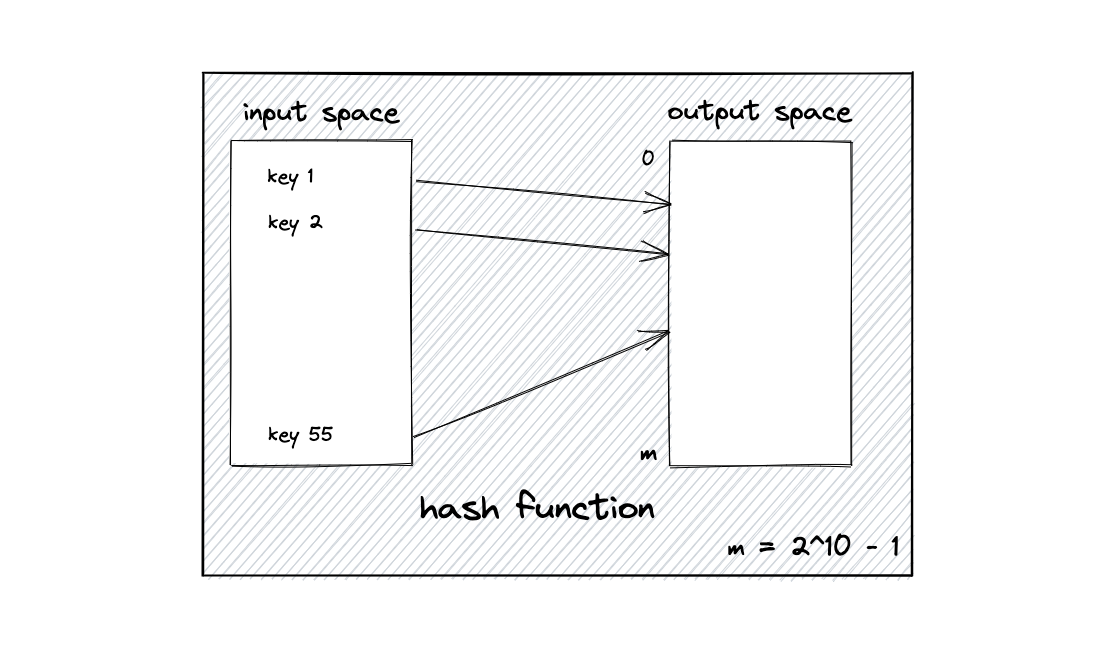

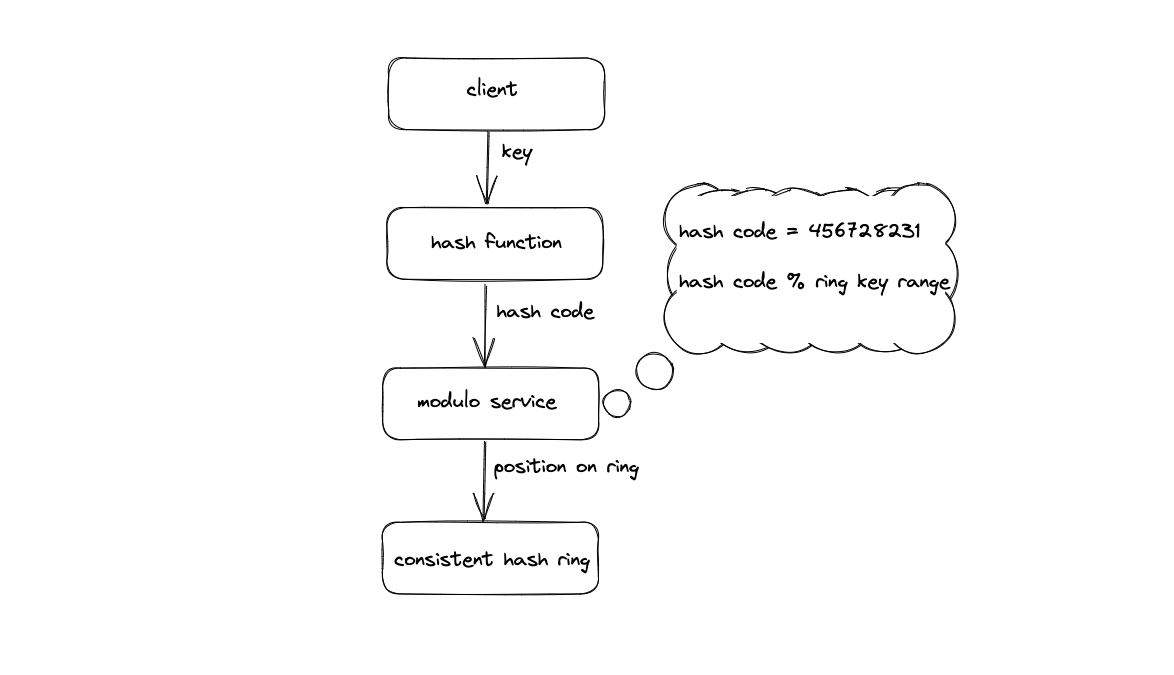

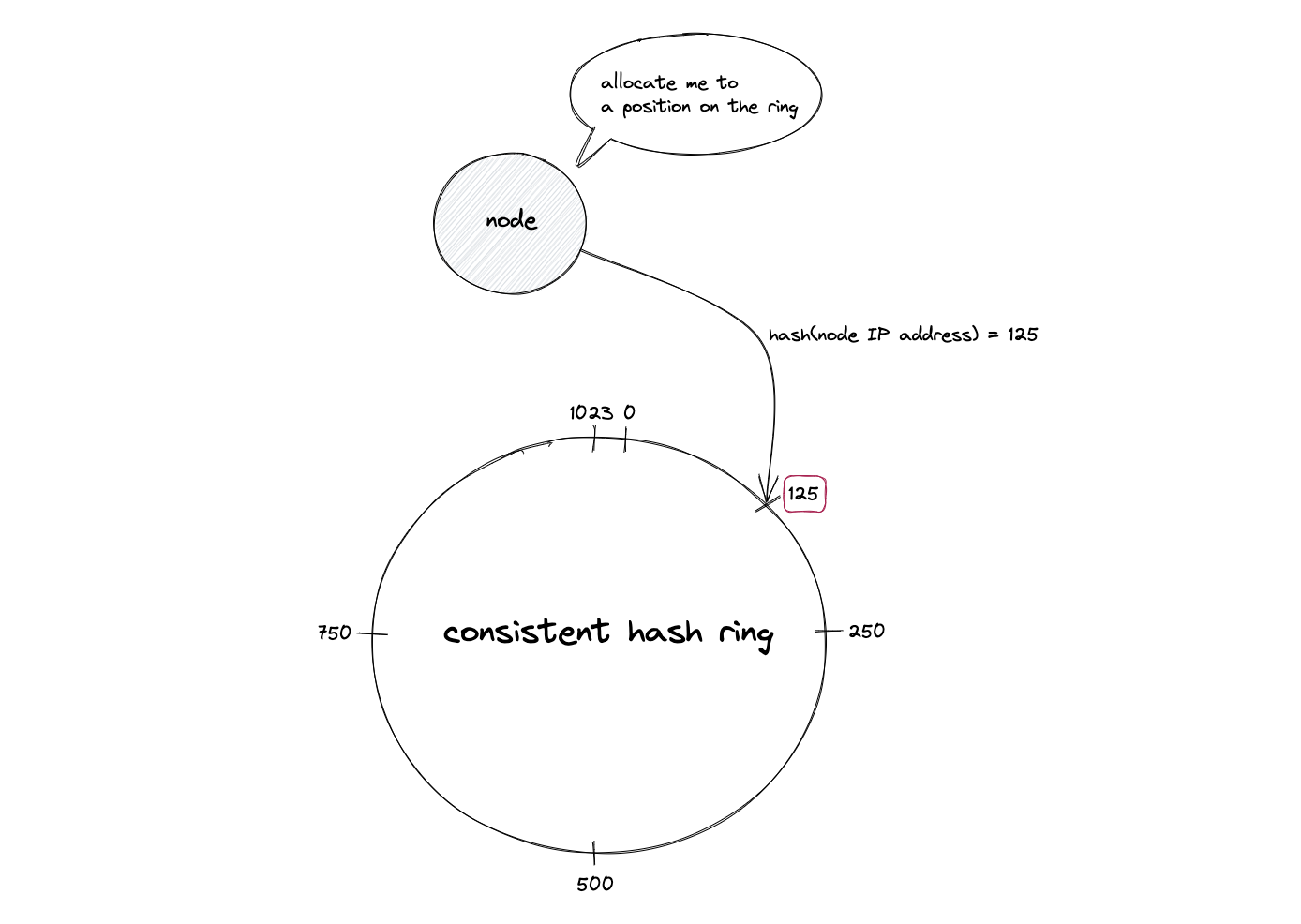

The core principle of consistent hashing is to apply one uniform hash function, such as MD5 or a faster non-cryptographic alternative like MurmurHash or xxHash, to both data keys and node identifiers. The resulting hash values are placed on a circular space, the hash ring: the output range of the hash function is treated as a circle, so the largest hash value wraps around to the smallest.
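A sketch of the mapping step in Python, assuming MD5 truncated to 32 bits so the ring has 2^32 positions (the ring size and the helper names `ring_position` and `clockwise_distance` are illustrative choices, not part of any standard). Keys and node IDs go through the same function, and the modulo in `clockwise_distance` is what makes the space circular.

```python
import hashlib

RING_BITS = 32                 # assumption: a 2**32-position ring; any fixed size works
RING_SIZE = 2 ** RING_BITS

def ring_position(label: str) -> int:
    """Hash a data key or a node ID to a position in [0, RING_SIZE) using MD5."""
    digest = hashlib.md5(label.encode("utf-8")).digest()
    return int.from_bytes(digest[: RING_BITS // 8], "big")

def clockwise_distance(start: int, end: int) -> int:
    """Steps travelled clockwise from start to end, wrapping from the top back to 0."""
    return (end - start) % RING_SIZE

# Keys and nodes share one hash function, so they land in the same circular space.
node_pos = ring_position("cache-server-1")
key_pos = ring_position("user:42")
print(node_pos, key_pos, clockwise_distance(key_pos, node_pos))
```

For example, a key 10 positions below the top of the ring is only 15 clockwise steps away from a node at position 5.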

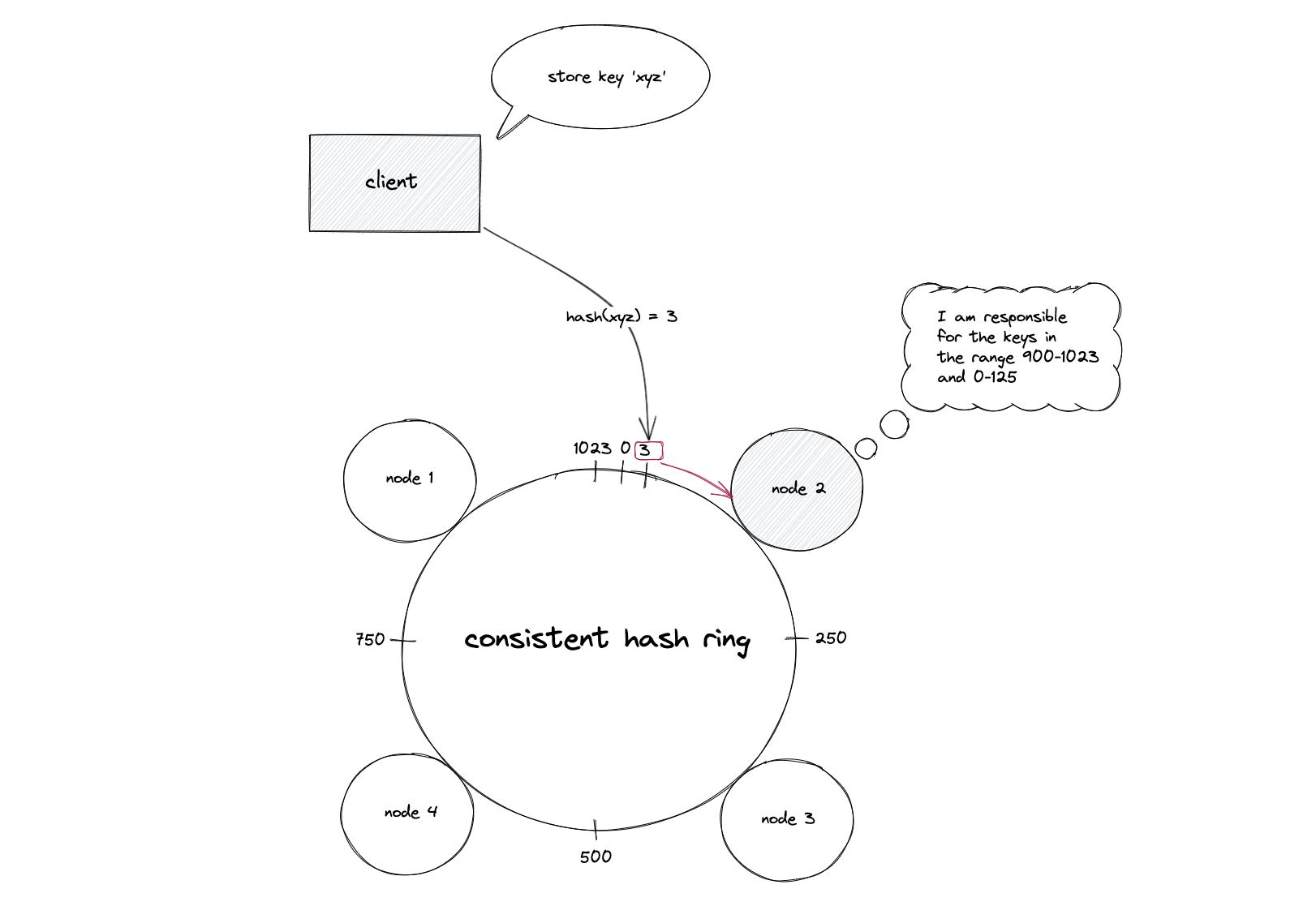

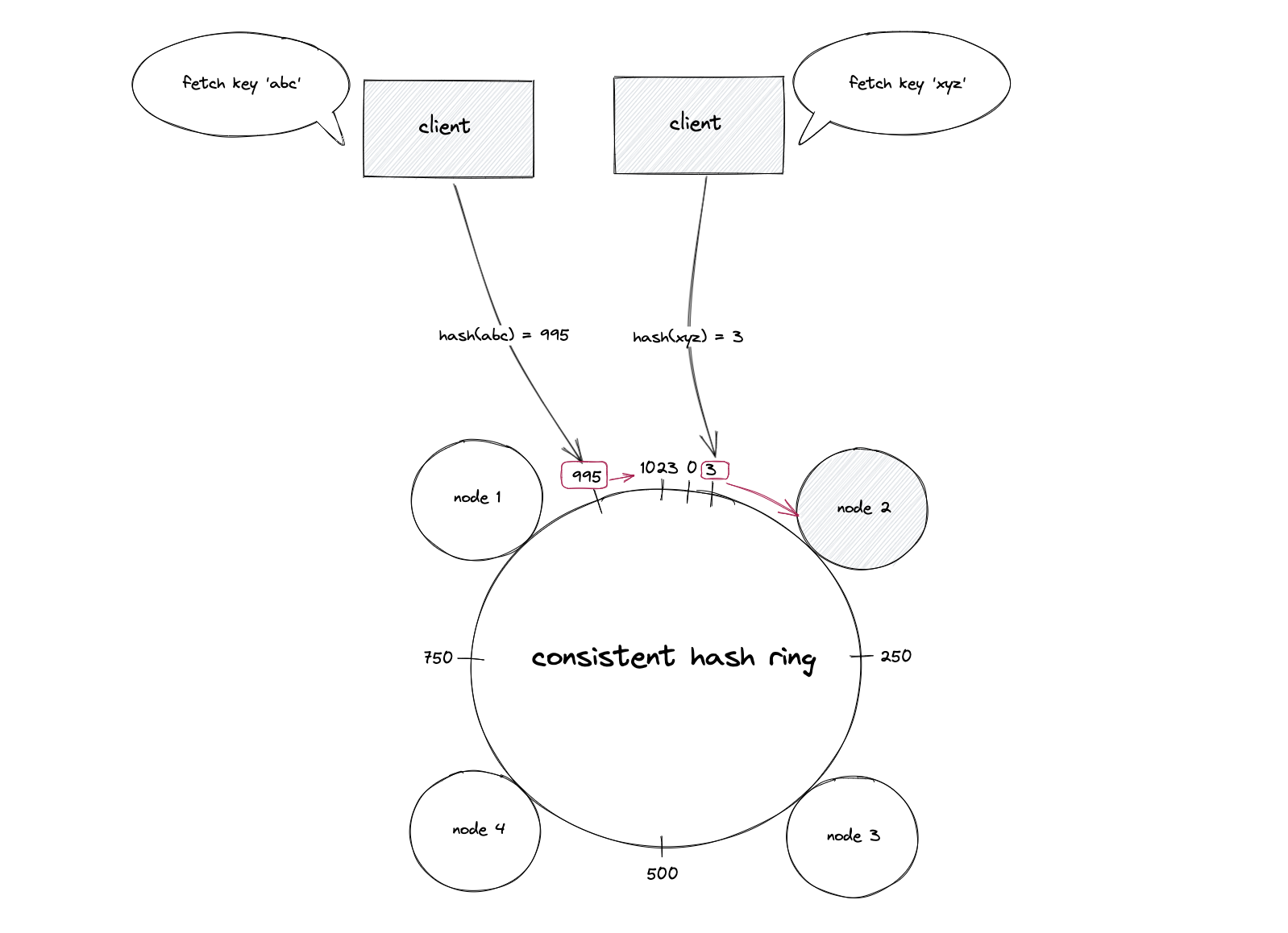

When a data object needs to be stored or retrieved, its key is hashed, and its position on the ring is determined. The system then traverses the ring clockwise from the key’s position until it encounters the first node. This node is then responsible for storing or serving that data object. This process is illustrated in Figures 14 and 15, which show how nodes and data keys are positioned on the hash ring and how a data object is assigned to the first succeeding node.
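The clockwise search can be sketched in Python by keeping node positions in a sorted list and using binary search (`bisect`) to find the first node at or after the key's position, wrapping to the start of the list when the key lies beyond the last node. `HashRing`, `ring_position`, and the node names are hypothetical; the sorted list stands in for the balanced BST discussed under implementation, giving the same O(log n) lookups but O(n) insertion.

```python
import bisect
import hashlib

def ring_position(label: str) -> int:
    """Position on a 2**32 ring, taken from the first 4 bytes of an MD5 digest."""
    return int.from_bytes(hashlib.md5(label.encode("utf-8")).digest()[:4], "big")

class HashRing:
    """Basic consistent-hash ring: one position per node, no virtual nodes."""

    def __init__(self, nodes):
        # Sorted (position, node) pairs; a sorted list plus binary search stands in for a BST.
        self._ring = sorted((ring_position(n), n) for n in nodes)
        self._positions = [pos for pos, _ in self._ring]

    def node_for(self, key: str) -> str:
        """First node at or clockwise after the key's position, wrapping past the top."""
        i = bisect.bisect_left(self._positions, ring_position(key))
        return self._ring[i % len(self._ring)][1]

ring = HashRing(["node-a", "node-b", "node-c"])
print(ring.node_for("user:42"))
```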

Key Operations and Benefits

- Data Assignment: A data object (key) is hashed to find its position on the ring. The system then moves clockwise from this position to find the first node. That node becomes responsible for the data object.

- Data Retrieval: The same process is followed for retrieval. Hashing the key determines its position, and traversing the ring clockwise identifies the node that should hold the data.

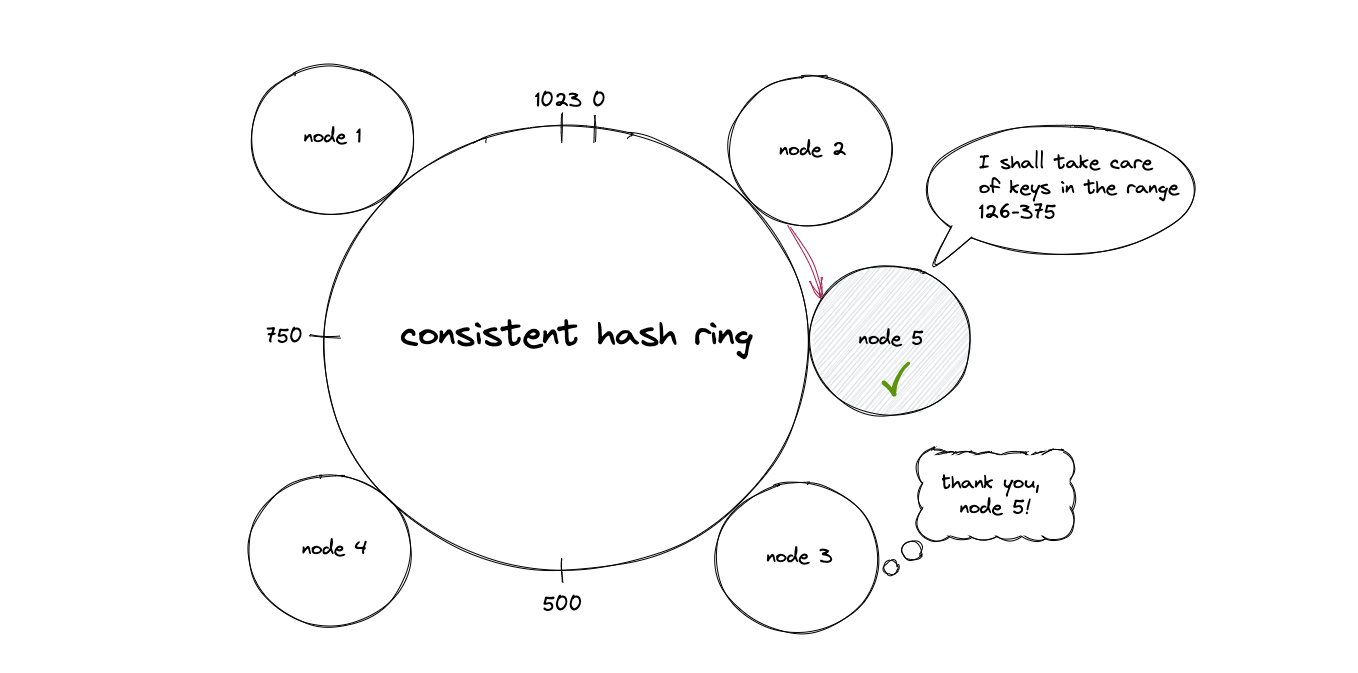

- Node Addition: When a new node is added to the ring, it takes over part of the key range previously owned by its clockwise neighbor (its successor): specifically, the keys that lie between its counter-clockwise neighbor and its own position. Only those keys need to be relocated, which minimizes disruption. Figure 18 demonstrates this, showing how a new node inherits keys from its successor.

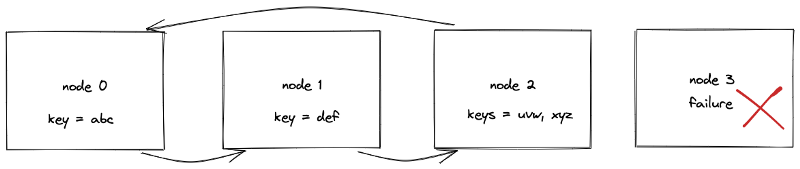

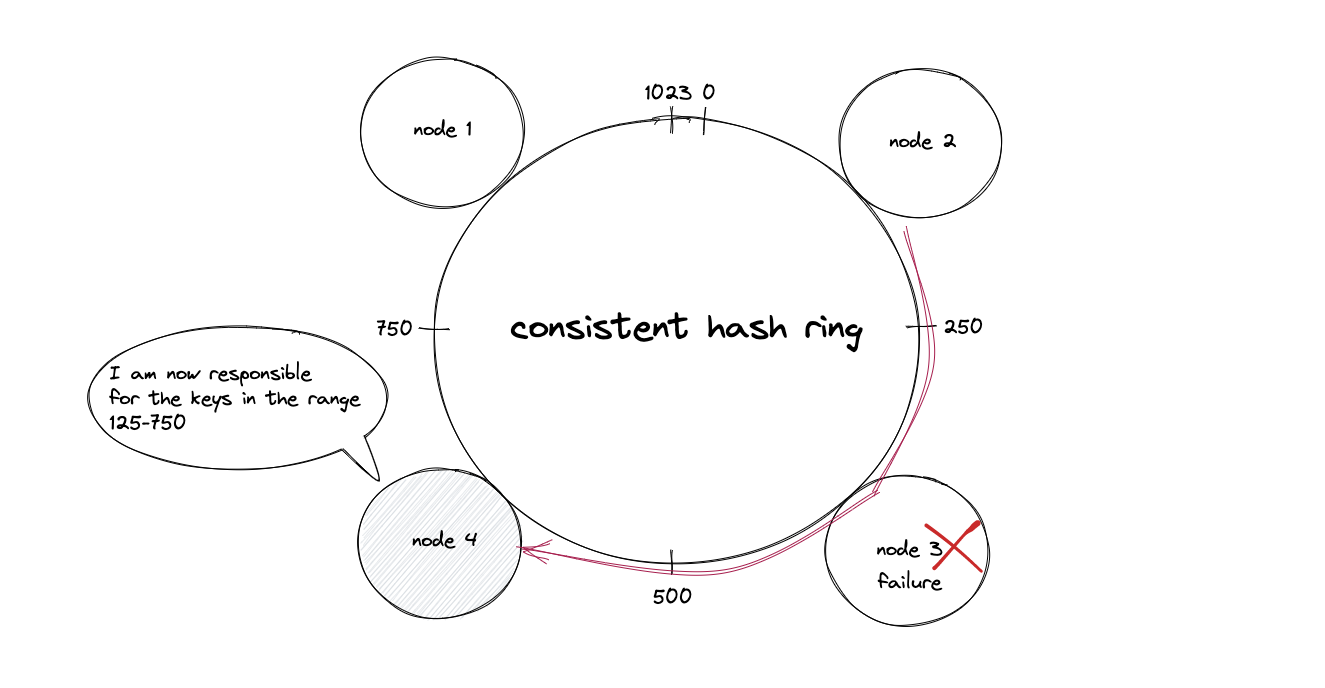

- Node Deletion: If a node fails or is removed, its key range passes to its clockwise neighbor, and the data objects it managed are reassigned to that adjacent node; no other node is affected. Figure 17 illustrates how the keys managed by a failed node are reassigned to the next available node on the ring, which greatly limits the impact of node failures.

This approach drastically reduces data reassignment compared with static hash partitioning. If N is the number of nodes and k is the number of keys, adding or removing a node remaps only about k/N keys on average, rather than nearly the entire dataset.
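The sketch below, again assuming an MD5-based ring with one position per node, stores keys per node so that node addition and deletion visibly move data. On addition, only the new node's clockwise successor is scanned, and only keys that now hash to the new node leave it; on deletion, all of the removed node's keys go to its successor. `RingStore` and its methods are hypothetical names. The k/N figure is an average: with a single position per node, one addition can move noticeably more or fewer keys depending on where the new node lands.

```python
import bisect
import hashlib

def ring_position(label: str) -> int:
    return int.from_bytes(hashlib.md5(label.encode("utf-8")).digest()[:4], "big")

class RingStore:
    """Hash ring (one position per node) that also tracks the keys each node holds."""

    def __init__(self):
        self._positions = []   # sorted node positions on the ring
        self._nodes = {}       # position -> node name
        self.data = {}         # node name -> set of keys stored on it

    def node_for(self, key: str) -> str:
        i = bisect.bisect_left(self._positions, ring_position(key)) % len(self._positions)
        return self._nodes[self._positions[i]]

    def put(self, key: str) -> None:
        self.data[self.node_for(key)].add(key)

    def add_node(self, node: str) -> set:
        """Insert a node and move over only the keys it now owns; returns the moved keys."""
        pos = ring_position(node)
        if pos in self._nodes:
            raise ValueError(f"position collision for {node!r}")
        bisect.insort(self._positions, pos)
        self._nodes[pos] = node
        self.data[node] = set()
        if len(self._positions) == 1:
            return set()
        # Only the clockwise successor can hold keys that now belong to the new node.
        i = bisect.bisect_left(self._positions, pos)
        successor = self._nodes[self._positions[(i + 1) % len(self._positions)]]
        moved = {k for k in self.data[successor] if self.node_for(k) == node}
        self.data[successor] -= moved
        self.data[node] |= moved
        return moved

    def remove_node(self, node: str) -> set:
        """Remove a node and hand all of its keys to its clockwise successor."""
        pos = ring_position(node)
        if self._nodes.get(pos) != node:
            raise KeyError(node)
        i = bisect.bisect_left(self._positions, pos)
        del self._positions[i]
        del self._nodes[pos]
        orphaned = self.data.pop(node)
        if self._positions:
            # After deletion, index i now holds the removed node's former successor.
            successor = self._nodes[self._positions[i % len(self._positions)]]
            self.data[successor] |= orphaned
        return orphaned

store = RingStore()
for name in ["node-a", "node-b", "node-c"]:
    store.add_node(name)
keys = [f"key-{i}" for i in range(10_000)]
for k in keys:
    store.put(k)
moved = store.add_node("node-d")
print(f"adding node-d moved {len(moved)} of {len(keys)} keys")
```

Recomputing `node_for` for every key before and after a change confirms that the only keys whose owner changes are the ones returned by `add_node` or `remove_node`.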

Addressing the Distribution Challenge: Virtual Nodes

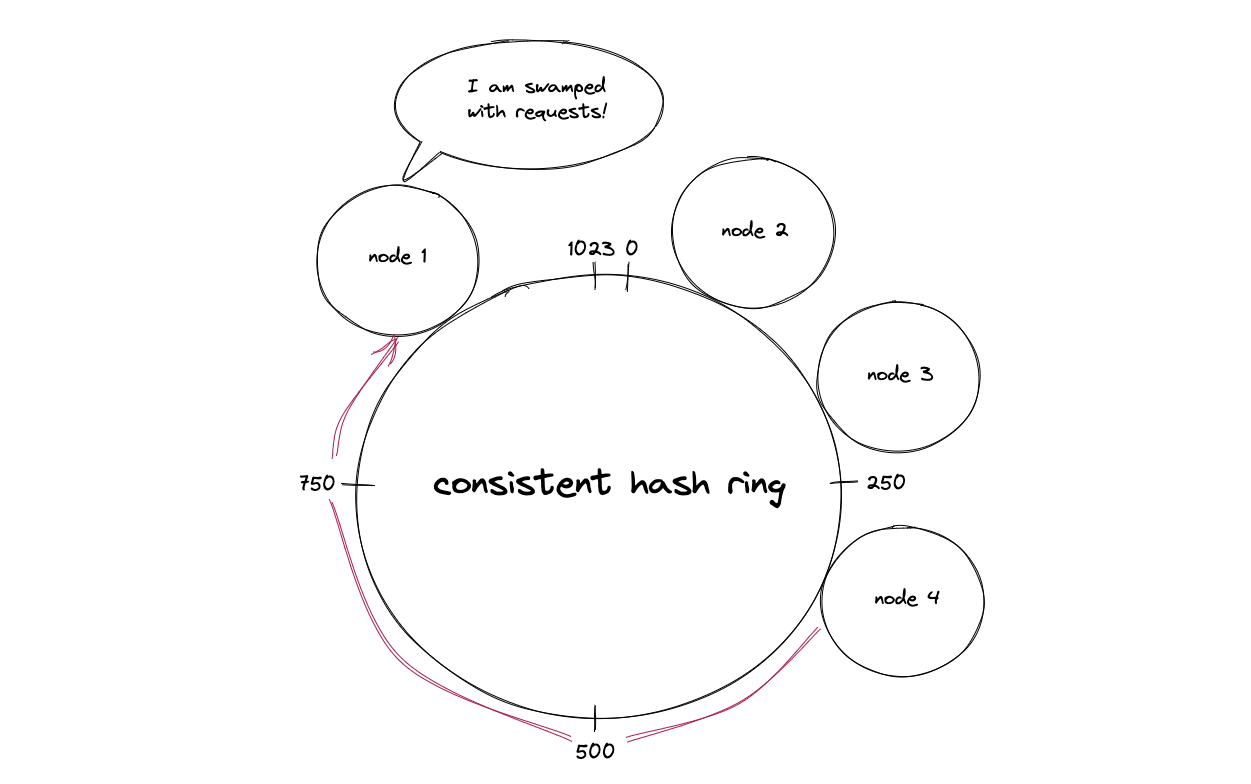

A potential drawback of consistent hashing can be the non-uniform distribution of nodes on the hash ring, leading to "hotspots" where certain nodes handle a disproportionately large amount of traffic. To mitigate this, the concept of virtual nodes was introduced.

Virtual nodes allow a single physical server to be represented by multiple points on the hash ring. By hashing the server's ID combined with a distinct index for each virtual node (or by using several different hash functions), a single physical node gets numerous positions scattered across the ring. This effectively increases the number of "servers" on the ring, leading to a more even distribution of keys and therefore a more balanced load across the physical nodes. Figure 20 illustrates this concept, where a single server occupies multiple positions on the ring. The number of virtual nodes assigned to a physical node can be matched to its capacity, further improving load balancing in heterogeneous systems.
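A Python sketch of weighted virtual nodes, assuming each physical node gets `weight × vnodes_per_unit` positions derived by hashing labels of the form `name#i` (the labelling scheme, the weights, and the class and function names are hypothetical). With 100 positions per weight unit the load shares should come out close to the intended 1 : 1 : 2 split, while one position per unit can land far from it.

```python
import bisect
import hashlib
from collections import Counter

def ring_position(label: str) -> int:
    return int.from_bytes(hashlib.md5(label.encode("utf-8")).digest()[:8], "big")

class VirtualNodeRing:
    """Consistent-hash ring where each physical node owns several virtual positions."""

    def __init__(self, weights: dict, vnodes_per_unit: int = 100):
        # weights: physical node -> relative capacity (hypothetical units)
        ring = []
        for node, weight in weights.items():
            for i in range(weight * vnodes_per_unit):
                # Hypothetical labelling scheme: hash "node#i" for the i-th virtual position
                ring.append((ring_position(f"{node}#{i}"), node))
        ring.sort()
        self._ring = ring
        self._positions = [pos for pos, _ in ring]

    def node_for(self, key: str) -> str:
        i = bisect.bisect_left(self._positions, ring_position(key)) % len(self._ring)
        return self._ring[i][1]

def load_shares(ring, n_keys: int = 20_000) -> dict:
    """Fraction of sample keys that each physical node receives."""
    counts = Counter(ring.node_for(f"key-{i}") for i in range(n_keys))
    return {node: round(c / n_keys, 3) for node, c in sorted(counts.items())}

# "big" has twice the capacity, so it gets twice as many virtual nodes.
weights = {"small-1": 1, "small-2": 1, "big": 2}
print("1 position per unit:   ", load_shares(VirtualNodeRing(weights, vnodes_per_unit=1)))
print("100 positions per unit:", load_shares(VirtualNodeRing(weights, vnodes_per_unit=100)))
```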

Implementation and Complexity

The implementation of consistent hashing typically relies on an ordered data structure to hold the node positions on the hash ring. A self-balancing binary search tree (BST), such as a Red-Black tree or AVL tree, is commonly employed. It offers logarithmic time complexity (O(log n)) for searching, insertion, and deletion, where 'n' is the number of positions on the ring (physical nodes, or virtual nodes when they are used). A sorted array with binary search is a simpler alternative: lookups remain O(log n), but inserting or removing a node costs O(n).

The asymptotic complexity of consistent hashing operations is as follows:

- Add a Node: O(k/n + log n) – This includes O(k/n) for redistributing keys and O(log n) for BST traversal.

- Remove a Node: O(k/n + log n) – Similar to adding a node, involving key redistribution and BST traversal.

- Add a Key: O(log n) – Primarily for finding the appropriate node via BST traversal.

- Remove a Key: O(log n) – Also involves BST traversal to locate the key’s responsible node.

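Concurrency control matters whenever lookups run while nodes are being added or removed. A readers-writer lock is often used to synchronize access to the BST: many lookups can proceed in parallel under the shared read lock, while a membership change takes the exclusive write lock, keeping the ring consistent with little impact on lookup performance.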
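Python's standard library has no readers-writer lock, so the sketch below builds a minimal one from `threading.Condition` and uses it to guard a sorted-list ring: lookups take the shared read lock, while `add_node` and `remove_node` take the exclusive write lock. All class and method names are hypothetical, and this simple lock prefers readers, so a steady stream of lookups can delay membership changes; a writer-preferring or fair lock may suit production use better.

```python
import bisect
import hashlib
import threading
from contextlib import contextmanager

def ring_position(label: str) -> int:
    return int.from_bytes(hashlib.md5(label.encode("utf-8")).digest()[:4], "big")

class ReadWriteLock:
    """Minimal readers-writer lock: many readers at once, or one exclusive writer.

    Reader-preferring: new readers may enter while a writer is waiting.
    """

    def __init__(self):
        self._cond = threading.Condition()
        self._readers = 0
        self._writing = False

    @contextmanager
    def read_locked(self):
        with self._cond:
            while self._writing:
                self._cond.wait()
            self._readers += 1
        try:
            yield
        finally:
            with self._cond:
                self._readers -= 1
                if self._readers == 0:
                    self._cond.notify_all()   # wake any waiting writer

    @contextmanager
    def write_locked(self):
        with self._cond:
            while self._writing or self._readers:
                self._cond.wait()
            self._writing = True
        try:
            yield
        finally:
            with self._cond:
                self._writing = False
                self._cond.notify_all()       # wake waiting readers and writers

class ConcurrentHashRing:
    """Sorted-list ring: lookups share the read lock, membership changes take the write lock."""

    def __init__(self):
        self._lock = ReadWriteLock()
        self._positions = []   # sorted node positions
        self._nodes = {}       # position -> node name

    def add_node(self, node: str) -> None:
        pos = ring_position(node)
        with self._lock.write_locked():
            if pos not in self._nodes:
                bisect.insort(self._positions, pos)
                self._nodes[pos] = node

    def remove_node(self, node: str) -> None:
        pos = ring_position(node)
        with self._lock.write_locked():
            if self._nodes.get(pos) == node:
                del self._nodes[pos]
                self._positions.remove(pos)

    def node_for(self, key: str) -> str:
        pos = ring_position(key)
        with self._lock.read_locked():
            i = bisect.bisect_left(self._positions, pos) % len(self._positions)
            return self._nodes[self._positions[i]]

ring = ConcurrentHashRing()
for name in ["node-a", "node-b", "node-c"]:
    ring.add_node(name)
print(ring.node_for("user:42"))
```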

The choice of hash function also matters. Cryptographic hashes like MD5 or SHA-256 distribute values well, but consistent hashing only needs an even spread, not collision resistance, so their extra computational cost buys nothing here. Faster non-cryptographic hash functions like MurmurHash, xxHash, or MetroHash are often preferred for their balance of speed and distribution quality.
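Because the ring only needs an even spread, the hash function can be a pluggable parameter. The sketch below builds a ring hash from any `hashlib` algorithm name and measures how evenly it spreads sample keys across buckets; the helper names are hypothetical. MurmurHash and xxHash are not in Python's standard library (third-party packages provide them), so they are left out, and the sketch measures distribution only, not speed.

```python
import hashlib
from collections import Counter

def make_ring_hash(algorithm: str):
    """Build a label -> 64-bit ring position function from a hashlib algorithm name."""
    def ring_hash(label: str) -> int:
        digest = hashlib.new(algorithm, label.encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big")
    return ring_hash

def max_to_mean(ring_hash, n_keys: int = 20_000, n_buckets: int = 8) -> float:
    """Fullest bucket divided by the mean bucket size; 1.0 means a perfectly even spread."""
    counts = Counter(ring_hash(f"key-{i}") % n_buckets for i in range(n_keys))
    return max(counts.values()) / (n_keys / n_buckets)

for name in ("md5", "sha256", "blake2b"):
    print(f"{name:8s} max/mean = {max_to_mean(make_ring_hash(name)):.3f}")
```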

Real-World Applications and Optimizations

Consistent hashing is a foundational technology powering many large-scale distributed systems:

- Content Delivery Networks (CDNs): Services like Netflix use consistent hashing to distribute video content across their global CDN infrastructure, ensuring efficient video streaming to users worldwide.

- Distributed Databases: Amazon's Dynamo (the storage system that inspired DynamoDB), Apache Cassandra, and Riak use consistent hashing for data partitioning and incremental scalability, allowing them to handle massive datasets.

- Distributed Caching Systems: Memcached clients, through implementations like libketama, utilize consistent hashing to distribute cache load across a cluster of servers.

- Load Balancers: Systems like HAProxy incorporate variants of consistent hashing for intelligent traffic distribution.

- Communication Platforms: Discord employs consistent hashing to identify and manage the nodes responsible for hosting specific chat rooms or servers, enabling efficient communication for millions of concurrent users.

Beyond the basic consistent hashing algorithm, several optimizations and variations have been developed to address specific challenges:

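- Multi-Probe Consistent Hashing: This variant places each node at a single point on the ring and instead hashes each key several times (for example, with different seeds), assigning the key to the node that lies closest clockwise to any of its probe positions. Avoiding virtual nodes keeps memory use at O(n) for n nodes and makes node additions and removals cheap, but key lookups are slower because each one performs several ring searches.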

- Consistent Hashing with Bounded Loads: This approach caps the load any node may carry, typically at (1 + ε) times the average load. If the node a request maps to is already at capacity, the request moves clockwise to the next node with spare capacity. This mechanism effectively addresses "hotspots" for highly popular data objects.
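A simplified Python sketch of the bounded-load rule, assuming a fixed batch of keys and a capacity of ceil((1 + epsilon) × average load) per node; real deployments usually count active requests or connections rather than stored keys, and the function name and the default epsilon = 0.25 are hypothetical. Each key starts at its normal successor and keeps moving clockwise past full nodes. Because total capacity exceeds the number of keys, the walk always finds a node with room.

```python
import bisect
import hashlib
import math
from collections import Counter

def ring_position(label: str) -> int:
    return int.from_bytes(hashlib.md5(label.encode("utf-8")).digest()[:4], "big")

def assign_with_bounded_loads(keys, nodes, epsilon: float = 0.25):
    """Assign each key to a node, skipping clockwise past nodes that are already full."""
    ring = sorted((ring_position(n), n) for n in nodes)
    positions = [pos for pos, _ in ring]
    # Capacity per node: ceil((1 + epsilon) * average load)
    capacity = math.ceil((1 + epsilon) * len(keys) / len(nodes))
    load = Counter()
    assignment = {}
    for key in keys:
        i = bisect.bisect_left(positions, ring_position(key)) % len(ring)
        # Walk clockwise until a node with spare capacity is found
        while load[ring[i][1]] >= capacity:
            i = (i + 1) % len(ring)
        node = ring[i][1]
        load[node] += 1
        assignment[key] = node
    return assignment, capacity

keys = [f"key-{i}" for i in range(3000)]
nodes = ["node-a", "node-b", "node-c", "node-d"]
assignment, capacity = assign_with_bounded_loads(keys, nodes)
print(capacity, Counter(assignment.values()))
```

Setting epsilon very high makes every node effectively unbounded, and the assignment falls back to plain consistent hashing.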

Advantages and Disadvantages

The benefits of consistent hashing are substantial:

- Minimized Data Reallocation: Significantly reduces data movement when nodes are added or removed, improving system stability and performance.

- Scalability: Allows for horizontal scaling by easily adding or removing nodes without major system reconfiguration.

- Fault Tolerance: Node failures are handled gracefully with minimal impact on other parts of the system.

- Load Balancing: Distributes data and traffic effectively across available nodes.

However, consistent hashing is not without its drawbacks:

- Complexity: Implementing and managing consistent hashing can be more complex than simpler methods.

- Non-Uniformity: Without virtual nodes, an uneven distribution of nodes can lead to hotspots.

- Key Distribution: Load can still be uneven if the hash function distributes poorly or if requests concentrate on a few very popular keys.

Conclusion

Consistent hashing represents a critical advancement in the design of scalable and resilient distributed systems. By intelligently mapping data and nodes onto a virtual ring, it provides an elegant solution to the challenges of dynamic load management, fault tolerance, and efficient data distribution. Its widespread adoption across major internet services underscores its effectiveness and its indispensable role in powering the modern digital infrastructure. As systems continue to grow in complexity and scale, the principles of consistent hashing will remain fundamental to ensuring their performance and reliability.