Meta Engineers Revolutionize Software Quality with Agentic Development and Just-in-Time Testing

Meta has reported improved bug detection from a Just-in-Time (JiT) testing approach that generates tests dynamically during code review instead of relying on long-lived, manually maintained test suites. The approach, detailed in Meta’s engineering blog and accompanying research, is aimed in particular at AI-assisted development environments, with early results indicating an approximate fourfold improvement in bug detection rates.

The change is driven by the rise of agentic workflows, in which AI systems generate and modify substantial portions of code. Traditional testing methodologies, which rely heavily on static test suites, struggle to keep pace with this accelerated development cycle. Manually crafted assertions are brittle, and comprehensive coverage is hard to maintain when code is constantly changing, so static suites become less effective while their maintenance overhead grows.

Ankit K., an ICT Systems Test Engineer, commented on this shift: "AI generating code and tests faster than humans can maintain them makes JiT testing almost inevitable." The remark captures the core challenge: code generation is outpacing the capacity for manual test suite upkeep.

The Dawn of Just-in-Time (JiT) Testing

Meta’s JiT testing approach addresses these challenges by generating tests when they are needed, at the pull request stage. Instead of relying on a broad, static set of tests, the system analyzes the specific code differential (diff) presented in a pull request. This allows it to infer developer intent, pinpoint potential failure modes introduced by the changes, and construct highly targeted tests. Each such test is designed to pass on the parent revision of the code and to fail on the proposed revision if the change has introduced a regression.
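The pass-on-parent, fail-on-change criterion can be illustrated with a minimal Python sketch. Everything in it is hypothetical and chosen only for illustration: the two `apply_discount_*` functions stand in for the parent and changed revisions of one function, and `is_catching` is an assumed helper name, not part of Meta’s system.

```python
from typing import Callable

DiscountFn = Callable[[float, float], float]


def apply_discount_parent(price: float, percent: float) -> float:
    """Hypothetical parent revision: clamps the discount to 0..100 percent."""
    percent = max(0.0, min(100.0, percent))
    return price * (1 - percent / 100)


def apply_discount_changed(price: float, percent: float) -> float:
    """Hypothetical changed revision: a refactor accidentally dropped the clamp."""
    return price * (1 - percent / 100)


def generated_test(apply_discount: DiscountFn) -> bool:
    """Change-specific test: an oversized discount must never yield a negative price."""
    return apply_discount(50.0, 150.0) >= 0.0


def is_catching(test: Callable[[DiscountFn], bool],
                parent: DiscountFn,
                changed: DiscountFn) -> bool:
    """A test 'catches' a change if it passes on the parent and fails on the change."""
    return test(parent) and not test(changed)


print(is_catching(generated_test, apply_discount_parent, apply_discount_changed))  # True
```

A test that fails on both revisions, or passes on both, carries no signal about the change itself, which is why both runs are needed.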

The system is built as a pipeline that combines several techniques. Large Language Models (LLMs) are used to understand the semantic meaning of code changes and infer developer intent. Program analysis examines the code structure and identifies areas that might be susceptible to errors. Mutation testing injects synthetic defects into the code and checks whether the generated tests detect them, which provides evidence that the tests are capable of catching real regressions.
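As a rough illustration of how mutation testing validates a test, the sketch below uses hand-written mutants of a toy function. It is not Meta’s mutation engine: the function `is_adult`, the mutant names, and the helpers `killed_mutants` and `mutation_score` are all assumptions made for this example.

```python
from typing import Callable, Dict, List

Predicate = Callable[[int], bool]


def is_adult(age: int) -> bool:
    """Original (unmutated) code under test."""
    return age >= 18


# Hand-written synthetic defects; a real engine would generate these automatically.
MUTANTS: Dict[str, Predicate] = {
    "ge_to_gt": lambda age: age > 18,   # relational operator changed
    "ge_to_le": lambda age: age <= 18,  # relational operator flipped
    "const_19": lambda age: age >= 19,  # boundary constant changed
}


def weak_test(fn: Predicate) -> bool:
    """Checks only a value far from the boundary."""
    return fn(30) is True


def boundary_test(fn: Predicate) -> bool:
    """Checks both sides of the boundary."""
    return fn(18) is True and fn(17) is False


def killed_mutants(test: Callable[[Predicate], bool],
                   original: Predicate,
                   mutants: Dict[str, Predicate]) -> List[str]:
    """Return the names of mutants the test detects (fails on)."""
    if not test(original):
        raise ValueError("test must pass on the original code")
    return sorted(name for name, mutant in mutants.items() if not test(mutant))


def mutation_score(test: Callable[[Predicate], bool],
                   original: Predicate,
                   mutants: Dict[str, Predicate]) -> float:
    """Fraction of mutants killed by the test."""
    return len(killed_mutants(test, original, mutants)) / len(mutants)


print(killed_mutants(weak_test, is_adult, MUTANTS))      # ['ge_to_le']
print(killed_mutants(boundary_test, is_adult, MUTANTS))  # ['const_19', 'ge_to_gt', 'ge_to_le']
```

The weak test lets two of the three defects through, while the boundary test kills all of them; a higher kill rate is the signal that a generated test is worth keeping.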

Mark Harman, a Research Scientist at Meta, described the shift this way: "This work represents a fundamental shift from ‘hardening’ tests that pass today to ‘catching’ tests that find tomorrow’s bugs." In other words, the focus moves from confirming that existing functionality remains stable to proactively finding defects that a change is about to introduce.

The "Dodgy Diff" and Intent-Aware Architecture

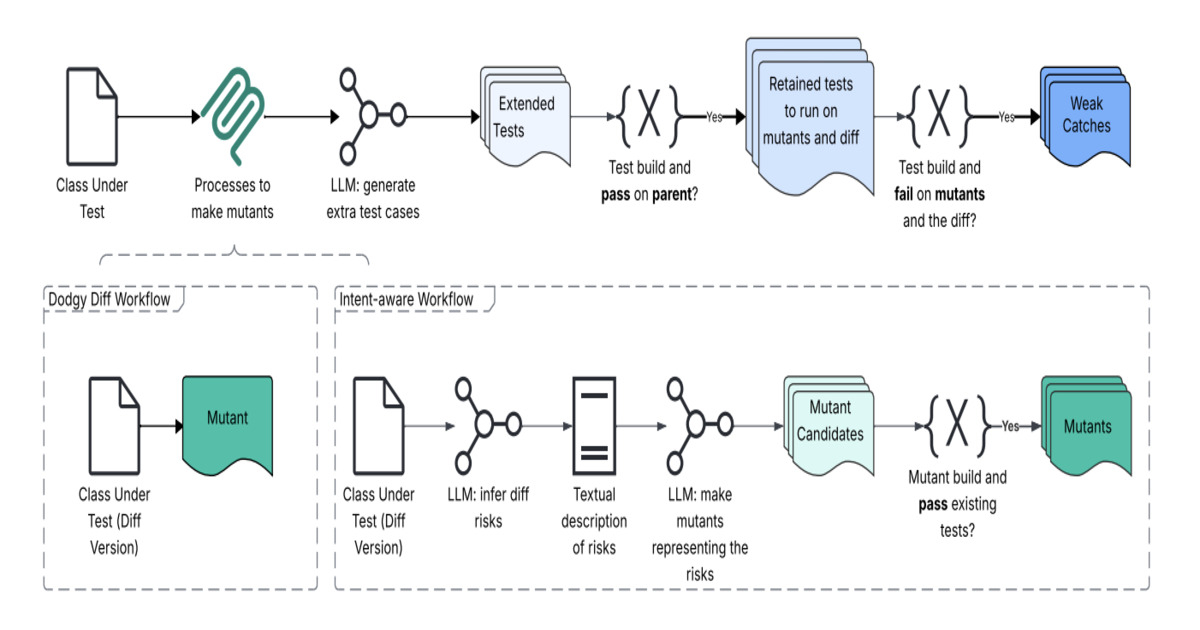

A cornerstone of Meta’s JiT testing framework is the "Dodgy Diff" and intent-aware workflow architecture. It treats a code change not merely as a textual alteration but as a signal of semantic intent. The system analyzes the diff to extract the intended behavior and identify areas of elevated risk. That analysis feeds an intent reconstruction and change-risk modeling phase, which estimates which parts of the codebase the proposed modifications might negatively affect.

These inferred signals drive a mutation engine, which generates "dodgy" variants of the code that simulate realistic failures the proposed change could cause. An LLM-based test synthesis layer then constructs new tests aligned with the inferred developer intent. The generated tests pass through a filtering step that eliminates noisy or low-value tests before results are presented directly within the pull request interface, so that developers receive actionable feedback promptly.
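The article does not spell out the filtering criteria, so the following Python sketch shows only one plausible filter under stated assumptions: a candidate is kept if it gives a stable result across reruns, passes on the parent revision, and fails on the changed revision. The `Candidate` type, the `reruns` parameter, and the example candidates are all hypothetical.

```python
import itertools
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Candidate:
    """A generated test, with callables that run it against each revision."""
    name: str
    run_on_parent: Callable[[], bool]  # True means the test passed
    run_on_change: Callable[[], bool]


def filter_candidates(candidates: List[Candidate], reruns: int = 3) -> List[str]:
    """Keep only stable tests that pass on the parent and fail on the change."""
    kept = []
    for candidate in candidates:
        parent_results = {candidate.run_on_parent() for _ in range(reruns)}
        change_results = {candidate.run_on_change() for _ in range(reruns)}
        if len(parent_results) > 1 or len(change_results) > 1:
            continue  # flaky: outcome differs between reruns
        if parent_results == {True} and change_results == {False}:
            kept.append(candidate.name)
    return kept


def example_candidates() -> List[Candidate]:
    """Build four illustrative candidates, one per outcome category."""
    alternating = itertools.cycle([True, False])  # simulates a flaky test
    return [
        Candidate("catches_regression", lambda: True, lambda: False),
        Candidate("already_broken", lambda: False, lambda: False),
        Candidate("no_signal", lambda: True, lambda: True),
        Candidate("flaky", lambda: next(alternating), lambda: False),
    ]


print(filter_candidates(example_candidates()))  # ['catches_regression']
```

Only the first candidate survives: the others either fail before the change, say nothing about it, or cannot be trusted to give the same answer twice.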

The diagram above, sourced from a Meta Research Paper, gives a high-level overview of the "Dodgy Diff" and intent-aware workflow architecture: how code changes are analyzed, how potential risks are modeled, and how targeted tests are synthesized to catch regressions.

Quantifiable Improvements and Real-World Impact

Meta evaluated the JiT testing system on more than 22,000 generated tests. It showed a fourfold improvement in bug detection compared to baseline-generated tests, and up to a twentyfold improvement in detecting meaningful failures as opposed to coincidental outcomes.

In one evaluation subset, the JiT testing system flagged 41 potential issues. Eight of these, roughly one in five, were confirmed as genuine defects, including several that posed a significant risk of impacting production systems. This suggests the system can prevent critical bugs from reaching users in practice, not only improve benchmark numbers.

In a separate LinkedIn post, Mark Harman emphasized the broader significance of this development for the field of software testing: "Mutation testing, after decades of purely intellectual impact, confined to academic circles, is finally breaking out into industry and transforming practical, scalable Software Testing 2.0." The remark points to a wider trend of advanced testing techniques moving from research into industrial practice.

The Future of Software Testing in the Age of AI

"Catching JiT tests" are designed for AI-driven development. Because they are generated on a per-change basis, they adapt to each change rather than to a fixed snapshot of the codebase. They aim to identify serious, unexpected bugs without the ongoing manual maintenance that traditional test suites require, which in turn reduces the accumulation of brittle tests that become a liability as codebases evolve.

The shift from human-maintained test suites to AI-generated, change-specific tests reallocates effort, moving complex validation tasks from developers and testers to automated systems. Human review is needed only when the automated system surfaces a meaningful issue, so engineers can spend their time addressing critical bugs rather than maintaining an ever-growing test infrastructure. This reframes the purpose of testing from static correctness validation to dynamic, change-specific fault detection.

Broader Implications and Industry Adoption

Meta’s work on JiT testing has implications for the wider software development industry. As AI plays a larger role in code generation and modification, the demand for adaptive and efficient testing strategies is likely to intensify. Companies across various sectors will likely face similar challenges in maintaining the quality and stability of their software under rapid, AI-driven development cycles.

The success of Meta’s approach suggests a potential roadmap for other organizations looking to enhance their testing practices. The integration of LLMs, program analysis, and mutation testing, coupled with an intent-aware architecture, offers a powerful framework for building more robust and scalable testing solutions.

The transition to agentic development and JiT testing marks a change in how software quality is ensured, replacing static, manual processes with tests generated dynamically by AI for each change. As the technology matures, it could become a standard component of modern software engineering, helping ensure that a rapid pace of development does not come at the expense of reliability and quality. Meta’s results are likely to be a significant influence on how the industry approaches software quality assurance.